Haven't installed OpenClaw yet? Click here for one-line install commands

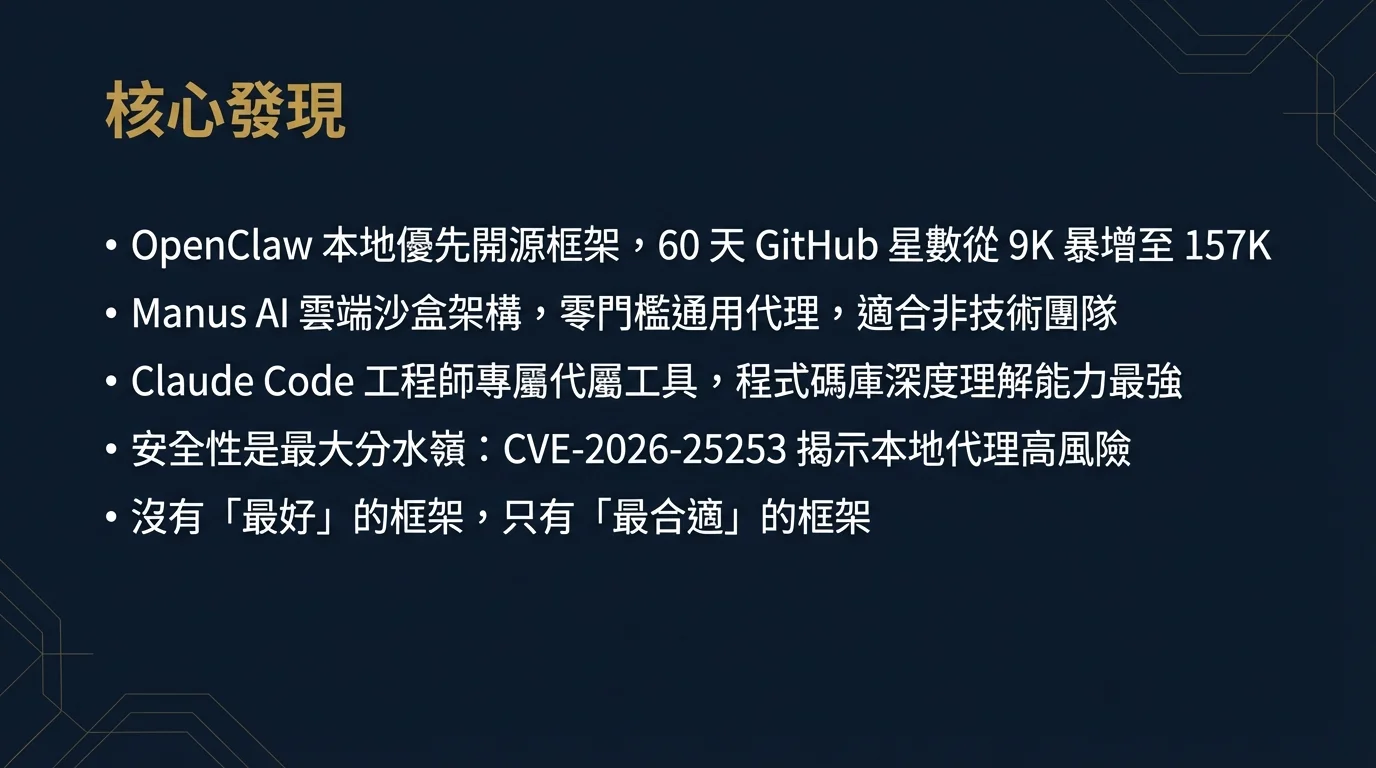

curl -fsSL https://openclaw.ai/install.sh | bashiwr -useb https://openclaw.ai/install.ps1 | iexcurl -fsSL https://openclaw.ai/install.cmd -o install.cmd && install.cmd && del install.cmd- OpenClaw Tutorial is currently the most community-watched local-first open-source AI agent framework, surging from 9,000 to 157,000 stars in 60 days, suited for technical teams who value data sovereignty and need deep messaging platform integration.

- Manus AI offers the lowest-barrier general AI agent experience through a cloud sandbox architecture that requires no local installation. It suits non-technical business teams seeking rapid deployment, though all data flows through overseas servers.

- Claude Code is Anthropic's official agentic coding tool for software engineers. Deeply integrated with Claude's model capabilities and designed CLI-first, it is the top choice for software engineers and DevOps teams but is unsuitable for non-technical scenarios.

- Security is the biggest differentiator: CVE-2026-25253 (an OpenClaw one-click remote code execution vulnerability) reveals the high risks of local agent frameworks; Manus's cloud data flow raises enterprise compliance concerns; and Claude Code's sandbox mechanism currently provides the most rigorous operational isolation.

- There is no "best" framework, only the "most suitable" one. The key selection criteria are data sovereignty requirements, technical team capabilities, integration complexity, and budget model (usage-based API billing vs. subscription).

1. Why AI Agent Framework Selection Matters

By early 2026, AI agents had evolved from proof-of-concept experiments in the tech community into a core part of enterprise AI digital transformation strategy. According to Gartner's research, by the end of 2026, over 40% of large enterprises will deploy autonomous AI agents in at least one business process; in the Asia-Pacific region, this share is expected to be reached earlier due to accelerated digitization in manufacturing and services.[8]

However, the term "AI agent" is already severely overloaded. From OpenClaw, which can autonomously operate an entire computer desktop, to Manus AI, which completes complex multi-step tasks in cloud sandboxes, to Claude Code, which focuses on helping engineers write and refactor code -- while all three are called "AI agents," their design philosophies, applicable scenarios, deployment costs, and security risks are fundamentally different.

The cost of choosing the wrong framework is significant. A team building a customer service automation system on Claude Code will discover on day one that its CLI architecture is entirely unsuitable for non-technical users; a medical institution deploying Manus to process sensitive medical records may run into problems at its first security audit. Moreover, the cost of migrating from one agent framework to another is often severely underestimated: it involves not just the technical rebuild, but also user retraining and organizational inertia.

The purpose of this article is to provide technical decision-makers with a systematic selection guide. Starting from architectural design, we compare functionality, deployment costs, security, and ecosystem maturity in depth, and conclude with specific selection recommendations for eight typical enterprise scenarios.

1.1 Three-Framework Market Positioning Snapshot

Before diving into the details, let's establish an intuitive understanding of the three major frameworks:

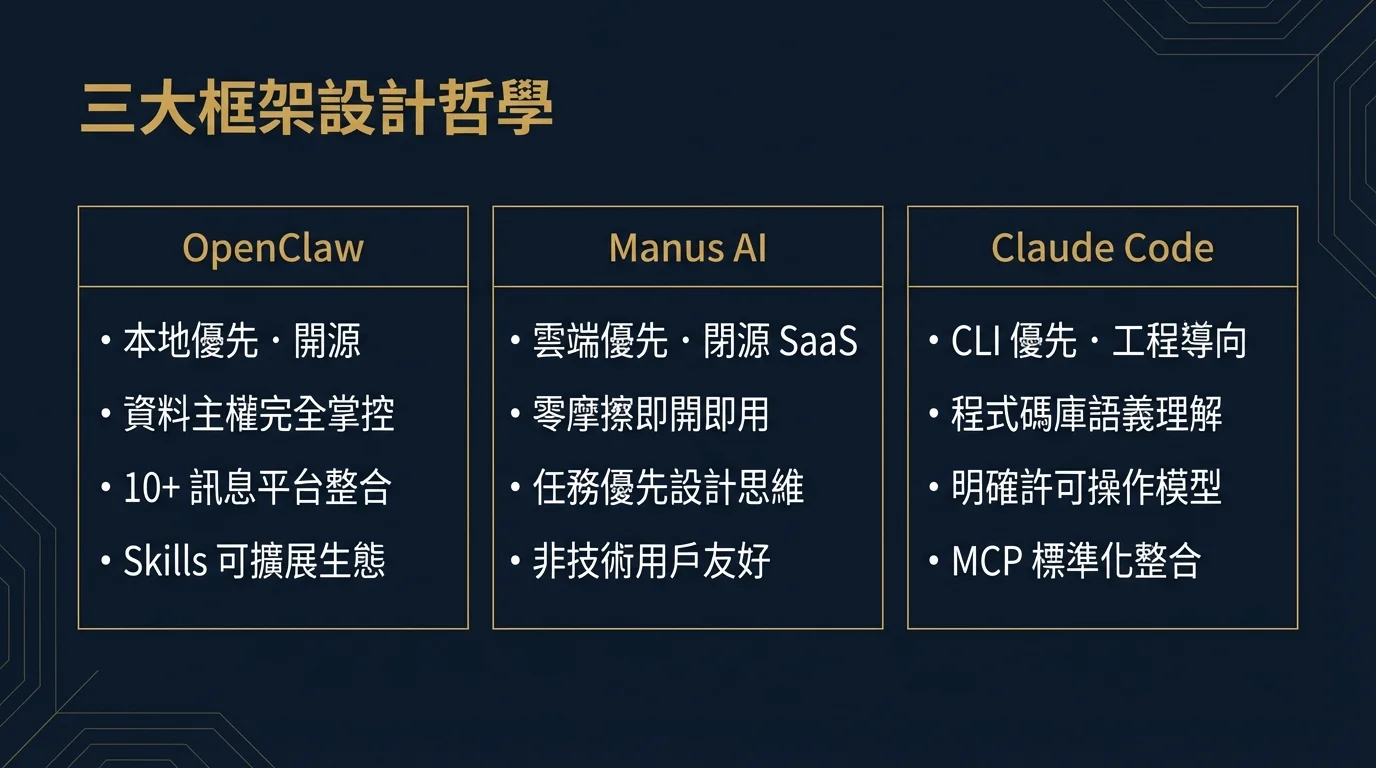

OpenClaw's core proposition is "your computer, your agent, your data." It runs on the local machine, connecting to various messaging platforms (WhatsApp, Telegram, Slack, etc.) via a WebSocket gateway, and can control browsers, read/write files, execute code, and even coordinate multiple AI sub-agents to work collaboratively. It is a true "full-stack personal agent," but also brings the highest technical barrier and security responsibility.[1]

Manus AI is positioned as a "zero-friction general AI agent." Users simply open a browser, enter a task description, and Manus autonomously completes web browsing, data collection, report generation, and other complex tasks in its cloud sandbox. It deliberately hides all technical details, allowing non-technical business personnel to get started immediately. This design decision is both its greatest advantage and its greatest limitation.[4]

Claude Code is the official agent tool built by Anthropic specifically for software engineers. With a command-line interface (CLI) at its core, it deeply understands codebase context, can read, write, test, and refactor code, and execute system commands through the bash tool. Its design philosophy is "seamlessly embed into the engineer's workflow" rather than "replace the engineer's judgment."[5]

2. Three-Framework Positioning & Design Philosophy

2.1 OpenClaw: The Local-First Open-Source Agent Revolution

OpenClaw was born with a distinct personal touch. Its creator, Peter Steinberger, is the founder of PSPDFKit (now Nutrient), an influential engineer in the iOS development community. According to an in-depth report by The Pragmatic Engineer, Steinberger extensively used AI-assisted code generation while building OpenClaw and frankly stated "I ship code I don't fully read myself" -- a statement that later sparked heated discussion in the software engineering community about AI-assisted development and code quality.[10]

OpenClaw's design philosophy can be summarized in three core principles:

First, Data Sovereignty. Inference runs either locally (for example, with Ollama models) or through an AI model API the user selects, while message history, the memory store (Supermemory), and workflow definitions are all stored on the user's own machine. For any organization with data compliance requirements, this is a very compelling proposition.

Second, Platform-Agnostic Messaging. OpenClaw supports over 10 mainstream messaging platforms, including WhatsApp, Telegram, Discord, Slack, Signal, iMessage (via the Mac version), and more. This means users don't need to install a new application to summon the AI agent from within their preferred communication tools.

Third, an Extensible Skills Marketplace. OpenClaw has over 100 official and community-contributed Skills (skill plugins), covering everything from GitHub operations, calendar management, and email automation to web scraping. Technical users can also develop custom skills using Python or JavaScript.
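To illustrate what a skill plugin system involves, here is a minimal decorator-based registry in Python. It is a sketch under stated assumptions, not OpenClaw's actual Skills API: `skill`, `SKILLS`, and `invoke` are hypothetical names, and the two example skills are invented for illustration.

```python
from typing import Callable, Dict

SkillFn = Callable[..., str]

# Hypothetical registry mapping skill names to handler functions (not OpenClaw's real API).
SKILLS: Dict[str, SkillFn] = {}


def skill(name: str) -> Callable[[SkillFn], SkillFn]:
    """Decorator that registers a function under a unique skill name."""
    def register(fn: SkillFn) -> SkillFn:
        if name in SKILLS:
            raise ValueError(f"skill {name!r} is already registered")
        SKILLS[name] = fn
        return fn
    return register


@skill("echo")
def echo(text: str) -> str:
    # Trivial example skill: return the input unchanged.
    return text


@skill("word_count")
def word_count(text: str) -> str:
    # Example skill: count whitespace-separated words.
    return str(len(text.split()))


def invoke(name: str, **kwargs: str) -> str:
    """Dispatch a call to a registered skill, failing clearly if it is unknown."""
    handler = SKILLS.get(name)
    if handler is None:
        raise LookupError(f"unknown skill: {name}")
    return handler(**kwargs)


if __name__ == "__main__":
    print(invoke("word_count", text="ship the weekly report"))  # prints 4
```

Registering through a decorator keeps each skill self-contained: adding a capability means writing one function, and the dispatcher never changes.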

OpenClaw's viral spread was equally remarkable. The project went through multiple renames: from the original ClawdBot, to MoltBot, to the current OpenClaw (its original codename opencode is still visible in some community documentation).[2] On GitHub, it surpassed 100,000 stars within two days of its first public release, peaking at 710 new stars per hour, making it one of the fastest-growing open-source projects in GitHub history.[3] Within 60 days of release, it grew from 9,000 to 157,000 stars, becoming the most watched open-source project of 2026.

2.2 Manus AI: Cloud-First General Agent Service

Manus AI was built by a team with a Chinese background, and its design philosophy is almost diametrically opposed to OpenClaw's. Manus's goal is not to provide maximum flexibility for technical users, but to provide the lowest-barrier AI agent experience for everyone.

Manus's core architecture is a "cloud sandbox execution environment." When a user submits a task (e.g., "Research Taiwan's top 10 SaaS tools in 2026 and generate a competitive analysis report"), Manus launches an isolated sandbox environment on its cloud servers, where the agent autonomously browses the web, collects data, writes reports, and delivers the results back to the user. The entire process runs without any setup on the user's side, requiring no software installation or API key management.

Manus's design philosophy embodies "Task-First" thinking: users only need to describe "what they want," not "how to achieve it." This design resonated strongly in the market, especially among non-technical roles in marketing, sales, and research. However, this "black box" design also means users have limited visibility into the agent's behavior during execution, which is a clear limitation for enterprise scenarios requiring compliance auditing.

From a pricing model perspective, Manus uses a subscription model, offering a free plan (with task limits) and a paid Pro plan. For individual users and SMBs, this model has an extremely low entry cost; but for large enterprises requiring many parallel tasks, costs can escalate quickly.

2.3 Claude Code: The Official Agent Tool for Engineers

Claude Code is the official agentic coding tool released by Anthropic in 2025, representing Anthropic's core vision for "how AI agents should collaborate with human engineers." It is not a standalone application but a CLI tool deeply embedded in the engineer's workflow.

Claude Code's design philosophy can be summarized as "Trustworthy Collaborator." It does not attempt to replace the engineer's judgment, but rather completes tedious implementation work under the engineer's supervision. This is reflected in several specific design decisions:

First, clear operational boundaries. By default, Claude Code explains its intent and requests user confirmation before executing any operation that may have side effects (such as writing files, executing bash commands, or making git commits). While this design adds interaction friction, it significantly reduces the risk of agent runaway.

Second, codebase context understanding. Claude Code can read the entire codebase's structure, understand cross-file dependency relationships, and provide modification suggestions with a global perspective. This is the most significant difference compared to traditional code completion tools (like GitHub Copilot).

Third, seamless integration with existing toolchains. Claude Code is designed to work in any terminal environment, naturally collaborating with git, npm, pytest, docker, and other developer tools, without forcing users to switch to a new work environment.

Claude Code's limitations are equally clear: it is primarily designed for code-related tasks, and for browser automation, messaging platform integration, and general office automation scenarios, its capabilities fall far short of OpenClaw or Manus.

3. Architecture Design Comparison

3.1 OpenClaw's Four-Layer Architecture

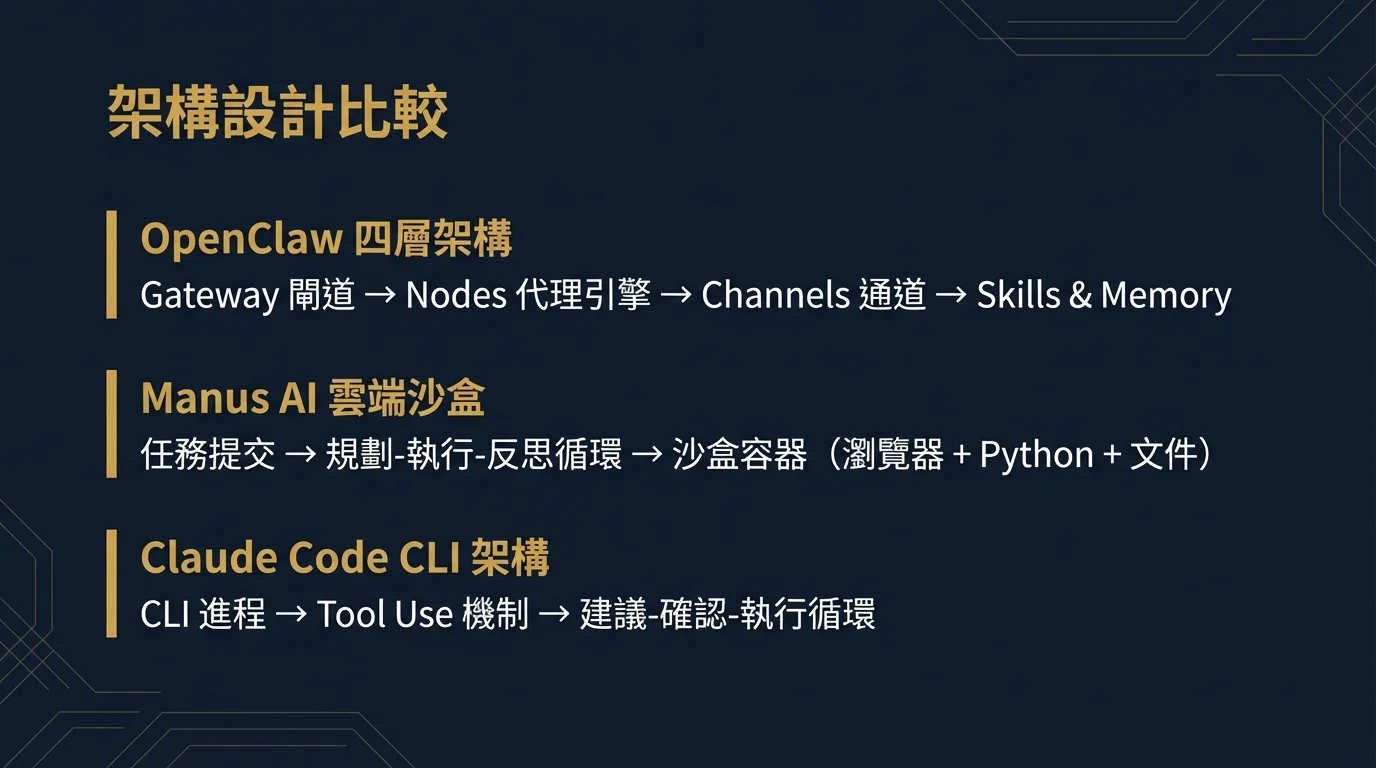

OpenClaw employs a carefully designed four-layer architecture, where each layer serves a distinct purpose, collectively supporting a complete local agent ecosystem:[9]

Layer 1: Gateway Layer

OpenClaw launches a WebSocket server on the local machine, listening at ws://127.0.0.1:18789. This gateway is the nerve center of the entire system, responsible for receiving messages from various messaging platform Bridges, and dispatching tasks to the underlying Node execution engine. The Gateway Layer also handles session management, rate limiting, and auth token verification.
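The gateway's core duties (auth token verification, rate limiting, and dispatch to Nodes) can be sketched without any networking. The Python below is a hypothetical model, not OpenClaw's code: the `Gateway` and `TokenBucket` classes, the per-session token-bucket policy, and the string return values are all assumptions, and the WebSocket server on 127.0.0.1:18789 itself is left out.

```python
import hmac
import time
from dataclasses import dataclass, field
from typing import Callable, Dict, Optional


@dataclass
class TokenBucket:
    """Allow bursts up to `capacity`, refilling `rate` tokens per second."""
    rate: float
    capacity: float
    tokens: float = field(init=False)
    updated: float = field(init=False)

    def __post_init__(self) -> None:
        self.tokens = self.capacity
        self.updated = time.monotonic()

    def allow(self, now: Optional[float] = None) -> bool:
        now = time.monotonic() if now is None else now
        # Refill in proportion to elapsed time, capped at capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


class Gateway:
    """Hypothetical gateway core: verify token, rate-limit per session, dispatch."""

    def __init__(self, auth_token: str, dispatch: Callable[[str, str], str],
                 rate: float = 1.0, burst: float = 5.0) -> None:
        self._auth_token = auth_token
        self._dispatch = dispatch  # e.g. forwards the message to a Node
        self._rate = rate
        self._burst = burst
        self._sessions: Dict[str, TokenBucket] = {}

    def handle(self, session_id: str, token: str, text: str) -> str:
        # Constant-time comparison (ASCII tokens) avoids leaking the token via timing.
        if not hmac.compare_digest(token, self._auth_token):
            return "error: unauthorized"
        bucket = self._sessions.get(session_id)
        if bucket is None:
            bucket = self._sessions[session_id] = TokenBucket(self._rate, self._burst)
        if not bucket.allow():
            return "error: rate limited"
        return self._dispatch(session_id, text)


if __name__ == "__main__":
    gw = Gateway("s3cret", lambda sid, text: f"node got: {text}", rate=0.0, burst=2)
    for _ in range(3):
        print(gw.handle("chat-1", "s3cret", "hello"))
    # prints "node got: hello" twice, then "error: rate limited"
```

A token bucket lets a session send short bursts (up to `burst` messages) while holding its sustained rate to `rate` messages per second; keeping this in the gateway means no Bridge or Node has to implement it separately.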

Layer 2: Nodes Layer

Nodes are OpenClaw's core execution units; each Node represents an independently running AI agent instance. Nodes are responsible for communicating with the selected AI model (Claude, GPT-4, DeepSeek, or local Ollama models), maintaining conversation context, and coordinating Skill invocations. OpenClaw supports "Agent Teams," allowing multiple Nodes to form a collaborative team, each handling different sub-tasks of a complex task.
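One way to picture a Node is as an object that owns its conversation context and delegates generation to a swappable model backend. In this hypothetical sketch, `ModelBackend` is a typing `Protocol`, `EchoBackend` stands in for a real Claude, GPT-4, DeepSeek, or Ollama client, and the `max_turns` trimming policy is an assumption rather than documented OpenClaw behavior.

```python
from dataclasses import dataclass, field
from typing import List, Protocol, Tuple

Message = Tuple[str, str]  # (role, text)


class ModelBackend(Protocol):
    """Anything that turns a message history into a reply."""
    def complete(self, history: List[Message]) -> str: ...


class EchoBackend:
    """Stand-in for a real Claude, GPT-4, DeepSeek, or Ollama client."""
    def complete(self, history: List[Message]) -> str:
        _role, text = history[-1]
        return f"echo: {text}"


@dataclass
class Node:
    """Hypothetical agent instance: one model backend plus its own context."""
    name: str
    backend: ModelBackend
    max_turns: int = 20  # assumed policy: keep at most this many messages
    history: List[Message] = field(default_factory=list)

    def send(self, text: str) -> str:
        self.history.append(("user", text))
        reply = self.backend.complete(self.history)
        self.history.append(("assistant", reply))
        # Trim the oldest messages so the context stays bounded.
        if len(self.history) > self.max_turns:
            del self.history[: len(self.history) - self.max_turns]
        return reply


if __name__ == "__main__":
    node = Node("research", EchoBackend())
    print(node.send("find SaaS pricing pages"))  # prints echo: find SaaS pricing pages
```

Because `Node` depends only on the `complete` method, switching models means passing a different backend object; the conversation logic stays untouched.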

Layer 3: Channels Layer

Channels define the agent's communication interfaces. OpenClaw provides independent Bridge plugins for each supported messaging platform, including WhatsApp Bridge, Telegram Bridge, Discord Bridge, Slack Bridge, Signal Bridge, and more. Each Bridge runs as an independent process, communicating with the Gateway Layer and converting platform-native message formats to OpenClaw's internal standard format. This design makes adding new platform support relatively easy -- developers only need to implement the corresponding Bridge interface.
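The Bridge idea reduces to one conversion function per platform that maps a native payload onto a shared internal format. The sketch below is hypothetical: `InternalMessage`, both bridge classes, and `route` are invented for illustration, and the payloads are simplified shapes loosely modeled on Telegram Bot API updates and Slack Events API callbacks, not complete schemas.

```python
from dataclasses import dataclass
from typing import Any, Dict, Protocol


@dataclass(frozen=True)
class InternalMessage:
    """Hypothetical platform-neutral message handed to the gateway."""
    platform: str
    sender: str
    chat_id: str
    text: str


class Bridge(Protocol):
    """The one method every bridge must implement."""
    def to_internal(self, raw: Dict[str, Any]) -> InternalMessage: ...


class TelegramStyleBridge:
    # Simplified payload shape: {"message": {"from": {"id"}, "chat": {"id"}, "text"}}
    def to_internal(self, raw: Dict[str, Any]) -> InternalMessage:
        msg = raw["message"]
        return InternalMessage("telegram", str(msg["from"]["id"]),
                               str(msg["chat"]["id"]), msg.get("text", ""))


class SlackStyleBridge:
    # Simplified payload shape: {"event": {"user", "channel", "text"}}
    def to_internal(self, raw: Dict[str, Any]) -> InternalMessage:
        event = raw["event"]
        return InternalMessage("slack", event["user"], event["channel"],
                               event.get("text", ""))


def route(bridge: Bridge, raw: Dict[str, Any]) -> InternalMessage:
    """Gateway-side entry point: downstream code only ever sees InternalMessage."""
    return bridge.to_internal(raw)


if __name__ == "__main__":
    tg = {"message": {"from": {"id": 42}, "chat": {"id": 7}, "text": "status?"}}
    print(route(TelegramStyleBridge(), tg))
    # prints InternalMessage(platform='telegram', sender='42', chat_id='7', text='status?')
```

Supporting a new platform in this model means writing one more class with a `to_internal` method; nothing downstream of `route` changes.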

Layer 4: Skills & Memory Layer

This is OpenClaw's richest capability layer. Skills are composable capability plugins, ranging from basic web search, code execution, and file I/O to advanced GitHub operations, calendar management, and image generation. Supermemory is OpenClaw's persistent memory system, storing user preferences, historical interaction summaries, and important context in Markdown format, ensuring the agent maintains memory continuity across different sessions. The Hooks mechanism supports event-driven, zero-polling automation, allowing the agent to respond to external events (such as receiving an email or file changes) to automatically trigger workflows.
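Two ideas in this layer, push-based hooks and Markdown-backed memory, can be sketched together. In this hypothetical Python, `HookBus` runs handlers only when an event is emitted, so there is no polling loop, and `MarkdownMemory` appends notes as Markdown bullet lines. The class names, event names, and file layout are assumptions, not Supermemory's actual format.

```python
import tempfile
from collections import defaultdict
from pathlib import Path
from typing import Callable, DefaultDict, Dict, List

Event = Dict[str, str]
Handler = Callable[[Event], None]


class HookBus:
    """Push-based hooks: handlers run only when an event is emitted; nothing polls."""

    def __init__(self) -> None:
        self._handlers: DefaultDict[str, List[Handler]] = defaultdict(list)

    def on(self, event_type: str, handler: Handler) -> None:
        self._handlers[event_type].append(handler)

    def emit(self, event_type: str, event: Event) -> int:
        # Run every handler registered for this event type; report how many ran.
        handlers = self._handlers.get(event_type, [])
        for handler in handlers:
            handler(event)
        return len(handlers)


class MarkdownMemory:
    """Persistent notes stored as Markdown bullet lines in a single file."""

    def __init__(self, path: Path) -> None:
        self.path = path

    def remember(self, note: str) -> None:
        with self.path.open("a", encoding="utf-8") as f:
            f.write(f"- {note}\n")

    def recall(self) -> List[str]:
        if not self.path.exists():
            return []
        lines = self.path.read_text(encoding="utf-8").splitlines()
        return [line[2:] for line in lines if line.startswith("- ")]


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        memory = MarkdownMemory(Path(tmp) / "memory.md")
        bus = HookBus()
        # Hypothetical hook: note the sender of each incoming email.
        bus.on("email.received", lambda e: memory.remember(f"Email from {e['from']}"))
        bus.emit("email.received", {"from": "alice@example.com"})
        print(memory.recall())  # prints ['Email from alice@example.com']
```

Storing memory as plain Markdown keeps it human-readable and easy to edit or version, at the cost of having no query engine behind it.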

3.2 Manus's Cloud Sandbox Architecture

Manus's architecture is designed with "task isolation" as its core principle. Each user task launches an independent sandbox container on Manus's cloud infrastructure, containing: a controlled browser instance (for web operations), a Python execution environment (for data processing), and a document editing environment (for report generation).

Manus's agent engine uses a "Plan-Execute-Reflect" loop architecture. After receiving a task, the agent first generates an execution plan (visible to the user), then executes step by step, evaluating after each step whether the results meet expectations and adjusting subsequent plans as needed. This architecture makes Manus particularly effective at handling long-tail tasks requiring multi-step reasoning.
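The Plan-Execute-Reflect loop can be expressed as a small control structure. This is a generic sketch of the pattern, not Manus's implementation: the four callables stand in for model calls, and both the policy of putting replacement steps at the front of the queue and the `max_steps` cap that stops endless re-planning are assumptions.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Step:
    description: str
    result: str = ""
    ok: bool = False


@dataclass
class PlanExecuteReflect:
    """Generic plan-execute-reflect loop; the callables stand in for model calls."""
    plan_fn: Callable[[str], List[str]]     # task -> ordered step descriptions
    execute_fn: Callable[[str], str]        # step description -> result
    reflect_fn: Callable[[Step], bool]      # did the step meet expectations?
    replan_fn: Callable[[Step], List[str]]  # failed step -> replacement steps
    max_steps: int = 10                     # hard cap so re-planning cannot loop forever
    log: List[Step] = field(default_factory=list)

    def run(self, task: str) -> List[Step]:
        self.log = []
        queue = list(self.plan_fn(task))  # initial plan (the part a UI could show the user)
        while queue and len(self.log) < self.max_steps:
            step = Step(queue.pop(0))
            step.result = self.execute_fn(step.description)
            step.ok = self.reflect_fn(step)
            self.log.append(step)
            if not step.ok:
                # Adjust the remaining plan: replacement steps run next.
                queue = self.replan_fn(step) + queue
        return self.log


if __name__ == "__main__":
    agent = PlanExecuteReflect(
        plan_fn=lambda task: ["search", "summarize"],
        execute_fn=lambda desc: "" if desc == "search" else f"done: {desc}",  # first search "fails"
        reflect_fn=lambda step: bool(step.result),
        replan_fn=lambda step: ["search again"] if step.description == "search" else [],
    )
    print([s.description for s in agent.run("market report")])
    # prints ['search', 'search again', 'summarize']
```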

However, Manus's cloud architecture also brings inherent limitations: sandbox environment resource quotas limit the number of parallel tasks; cross-task memory persistence is relatively limited; and accessing enterprise private data requires additional integration configuration (typically providing API keys or OAuth authorization).

3.3 Claude Code's CLI-First Architecture

Claude Code's architecture is relatively simple, but this simplicity is a deliberate design decision. It runs as a local CLI process, communicating with the Claude model via the Anthropic API (or enterprise-deployed private endpoints). The local process manages conversation context, reads the local file system, executes bash commands, and presents results to the user.

Claude Code builds on Claude's "Tool Use" mechanism, which lets the model request specific operations such as reading files (Read), writing files (Write), executing commands (Bash), and searching code (Grep). By default, read-only operations run directly, while operations with side effects (such as Write and Bash) are executed only after user confirmation, forming a "suggest-confirm-execute" interaction loop.
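The suggest-confirm-execute loop can be modeled in a few lines. This is a simplified sketch, not Claude Code's implementation: `ToolCall`, `run_tool_call`, the single-string argument, and the fake tools are hypothetical, the `confirm` callback stands in for the interactive prompt, and treating only Read and Grep as prompt-free is an assumption mirroring the read-only default described above.

```python
from dataclasses import dataclass
from typing import Callable, Dict

# Assumption: these tools are read-only and run without a prompt.
READ_ONLY_TOOLS = {"Read", "Grep"}


@dataclass
class ToolCall:
    tool: str      # e.g. "Read", "Write", "Bash", "Grep"
    argument: str  # simplified to one string argument


def run_tool_call(call: ToolCall,
                  tools: Dict[str, Callable[[str], str]],
                  confirm: Callable[[ToolCall], bool]) -> str:
    """Suggest -> confirm -> execute: side-effecting calls need approval first."""
    if call.tool not in tools:
        return f"rejected: unknown tool {call.tool}"
    if call.tool not in READ_ONLY_TOOLS and not confirm(call):
        # The user declined, so nothing runs; the refusal is returned to the caller.
        return f"declined: {call.tool}({call.argument})"
    return tools[call.tool](call.argument)


if __name__ == "__main__":
    fake_tools = {
        "Read": lambda path: f"<contents of {path}>",
        "Bash": lambda cmd: f"<output of {cmd}>",
    }

    def deny_all(call: ToolCall) -> bool:
        return False  # stands in for a user who rejects every prompt

    print(run_tool_call(ToolCall("Read", "main.py"), fake_tools, deny_all))
    # prints <contents of main.py>
    print(run_tool_call(ToolCall("Bash", "rm -rf build"), fake_tools, deny_all))
    # prints declined: Bash(rm -rf build)
```

Returning the refusal as a result string, rather than raising, lets the calling loop hand it back to the model so it can propose an alternative.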

3.4 Three-Framework Architecture Comparison Table

| Architecture Dimension | OpenClaw | Manus AI | Claude Code |
|---|---|---|---|
| Deployment Location | Local | Cloud | Local CLI + Cloud API |
| Core Protocol | WebSocket | HTTPS / SSE | HTTPS / API |
| Execution Architecture | Four-layer (Gateway/Nodes/Channels/Skills) | Cloud Sandbox Container | CLI Process + Tool Use |
| Multi-Agent Collaboration | Native Support (Agent Teams) | Single Agent (Multi-step) | Experimental Support |
| Memory Persistence | Supermemory (Local Markdown) | Limited (Cross-task) | CLAUDE.md Context File |
| Extensibility | Skills Plugin System | Limited (Official Integrations) | Tool Definitions / MCP |
| Open-Source Level | Fully Open Source (MIT) | Closed-Source SaaS | Partially Open Source (SDK) |
| Data Sovereignty | Fully Local | Cloud Processing | Code Local, Inference Cloud |

4. Functionality Matrix Deep Comparison

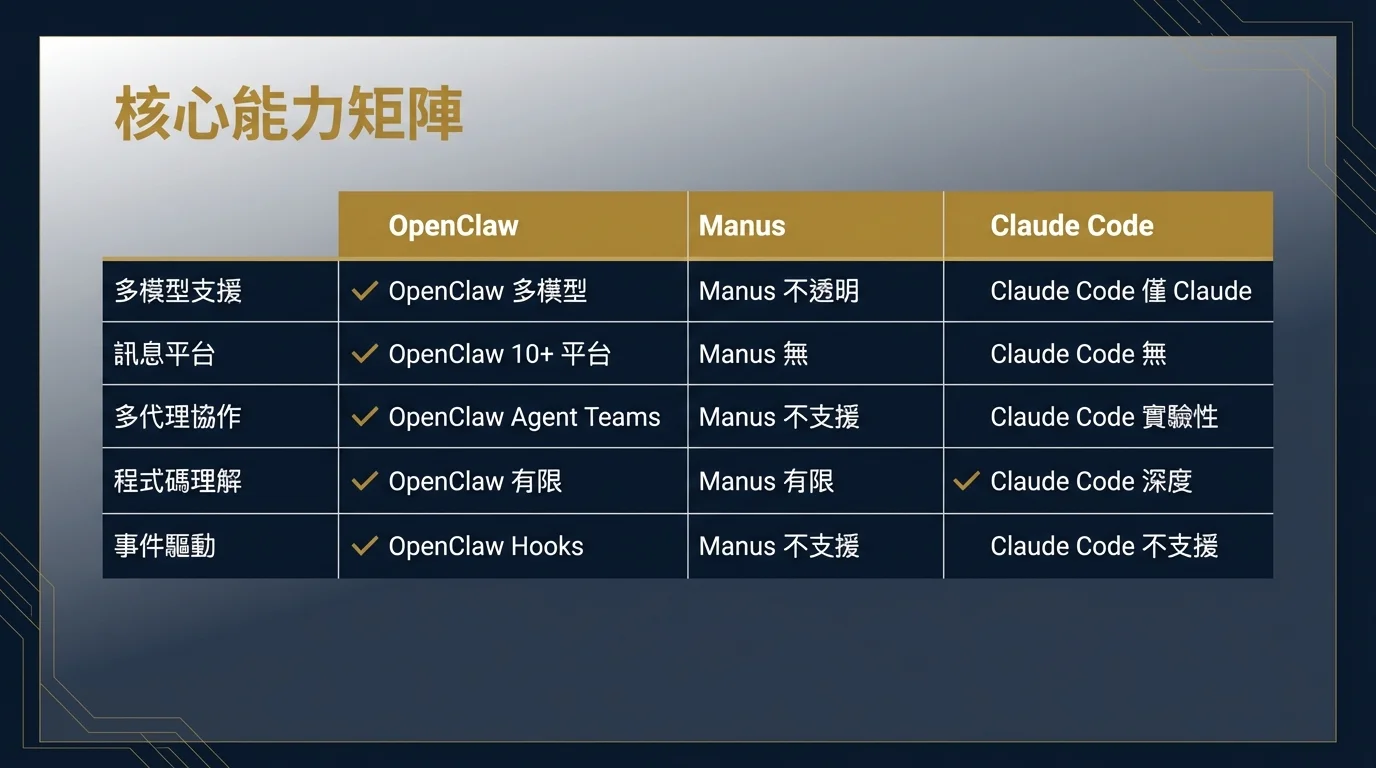

4.1 Core Capability Matrix

Before analyzing the functional differences of the three frameworks, we need to first establish a common evaluation framework. AI agent core capabilities can be divided into seven dimensions: multi-model support, browser automation, messaging platform integration, memory persistence, multi-agent collaboration, code execution, and file system access. Below is a comprehensive assessment based on each framework's official documentation and community testing:

| Capability Dimension | OpenClaw | Manus AI | Claude Code |
|---|---|---|---|
| Multi-Model Support | Claude / GPT / DeepSeek / Ollama (Local Models) | Internal Model (Opaque) | Claude Series (Opus / Sonnet / Haiku) |
| Browser Automation | Playwright Integration (Skills) | Native Support (Cloud) | Via bash / MCP |
| Messaging Platform Integration | 10+ Platforms (WhatsApp / Telegram / Slack / Discord / Signal, etc.) | No Native Integration | None |
| Memory Persistence | Supermemory (Complete) | Limited (Task-level) | CLAUDE.md (Manually Maintained) |
| Multi-Agent Collaboration | Agent Teams (Native) | Not Supported | Experimental (Sub-agents) |
| Code Execution | Shell / Python (Local) | Python (Cloud Sandbox) | Any Language (Local bash) |
| File System Access | Full Local Access | Sandbox Access + Upload | Full Local Access |
| Event-Driven Automation | Hooks (Zero-Polling) | Not Supported | Not Supported |
| GUI Operations | Via Skill Plugins | Native (Cloud Desktop) | Not Supported |
| API Integration | Skills + Custom Development | Limited (Official Integrations) | MCP (Model Context Protocol) |
| Codebase Understanding | Limited | Limited | Deep (Cross-file Semantic Understanding) |
| Multilingual Interface | Depends on Underlying Model | Multilingual Support | Depends on Underlying Model |

4.2 Key Differences Deep Dive

Messaging Platform Integration: OpenClaw's Absolute Advantage

In the messaging platform integration dimension, OpenClaw has an unmatched advantage over the other two frameworks. For Asian enterprises, WhatsApp and LINE integration capabilities are critical -- many SMBs in Taiwan conduct customer communication primarily on LINE and WhatsApp. OpenClaw's Channel architecture allows the same AI agent to simultaneously monitor multiple messaging platforms and route tasks across different platforms, which is extremely valuable in enterprise customer service automation scenarios.

Manus and Claude Code are virtually absent in this dimension. Manus's design assumes users submit tasks through its web interface and does not support direct messaging platform integration (though the enterprise version may provide API endpoints for secondary development). Claude Code is entirely focused on command-line workflows, with no design intent for messaging platform integration.

Codebase Understanding: Claude Code's Core Competitive Advantage

In software engineering scenarios, Claude Code's codebase understanding capability is the strongest among the three. Claude Code can read an entire project's directory structure, understand import relationships and module dependencies, and provide refactoring suggestions with a global perspective on this basis. Its CLAUDE.md design -- allowing developers to describe project architecture, coding conventions, and special considerations -- enables the agent to maintain project context understanding throughout the entire work session.

While OpenClaw also has code execution capabilities, its design focus is not on deeply understanding specific codebases but on broad task automation. Manus's code capabilities are similarly limited, mainly suitable for generating standalone scripts rather than performing fine-grained operations in complex existing codebases.

Multi-Agent Collaboration: OpenClaw's Agent Teams

OpenClaw's Agent Teams feature currently offers the most complete multi-agent collaboration support among the three frameworks. Users can define an agent team composed of multiple specialized Nodes, for example: a "research agent" for web collection, an "analysis agent" for data processing, and a "writing agent" for report generation. These agents are coordinated through OpenClaw's Gateway layer, can work in parallel, and exchange intermediate results.
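The research, analysis, and writing team described above maps naturally onto an async pipeline. This Python sketch uses `asyncio` to run one pipeline per topic concurrently. The three agent functions are hypothetical stand-ins that return canned data instead of calling a model, and `run_team` is an illustrative coordinator; in OpenClaw, coordination goes through the Gateway layer rather than a single function.

```python
import asyncio
from typing import Dict, List


async def research_agent(topic: str) -> List[str]:
    await asyncio.sleep(0)  # placeholder for web collection
    return [f"{topic} source {i}" for i in range(1, 4)]


async def analysis_agent(sources: List[str]) -> Dict[str, int]:
    await asyncio.sleep(0)  # placeholder for data processing
    return {"source_count": len(sources)}


async def writing_agent(topic: str, stats: Dict[str, int]) -> str:
    await asyncio.sleep(0)  # placeholder for report generation
    return f"Report on {topic}: based on {stats['source_count']} sources."


async def pipeline(topic: str) -> str:
    """One topic flows research -> analysis -> writing, passing intermediate results."""
    sources = await research_agent(topic)
    stats = await analysis_agent(sources)
    return await writing_agent(topic, stats)


async def run_team(topics: List[str]) -> List[str]:
    """Hypothetical coordinator: run one pipeline per topic concurrently."""
    return list(await asyncio.gather(*(pipeline(t) for t in topics)))


if __name__ == "__main__":
    for report in asyncio.run(run_team(["SaaS tools", "CRM vendors"])):
        print(report)
```

`asyncio.gather` returns results in the order the pipelines were passed in, so reports line up with their topics even when the agents finish at different times.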

Claude Code's multi-agent capabilities are currently experimental, allowing the main agent to launch sub-agents to handle specific sub-tasks, but the collaboration model is relatively limited. Manus currently does not support multi-agent architecture; all tasks are completed linearly by a single agent.

5. Deployment Models & Operational Costs

5.1 OpenClaw's Local Deployment

OpenClaw's local deployment requires a certain technical foundation. Minimum system requirements include: modern macOS (13.0+) or Linux (Ubuntu 20.04+), 8GB RAM (16GB recommended), and a stable network connection (for accessing AI model APIs). Windows support is currently through WSL2; a native Windows version is still in development.[9]

The installation process involves Node.js environment configuration, OAuth authorization setup for various messaging platform Bridges, and AI model API key configuration. For non-technical users, this process may take 1-3 hours. For enterprise environments needing multiple Bridges (e.g., simultaneously enabling WhatsApp and Slack), process management tools (such as pm2 or systemd) also need to be configured.

OpenClaw's operational cost structure is relatively unique: the framework itself is free, with primary costs coming from AI model API usage. Using the Claude API, the current Sonnet 4.5 model costs approximately $3 per million input tokens and $15 per million output tokens; with heavy usage, monthly costs may range from several hundred to several thousand dollars. Using local Ollama models can significantly reduce API costs but requires higher local hardware specifications (NVIDIA GPU recommended).
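The token arithmetic behind these estimates is straightforward: cost = input tokens / 10^6 × input price + output tokens / 10^6 × output price. The sketch below applies the Sonnet 4.5 prices quoted above to a hypothetical workload of 200 requests per day for 30 days, averaging 8,000 input and 1,000 output tokens per request. The workload figures are illustrative assumptions, and any caching or batch discounts are ignored.

```python
# Sonnet 4.5 prices quoted in the text, in USD per million tokens.
INPUT_PRICE_PER_M = 3.00
OUTPUT_PRICE_PER_M = 15.00


def monthly_api_cost(input_tokens: int, output_tokens: int,
                     input_price: float = INPUT_PRICE_PER_M,
                     output_price: float = OUTPUT_PRICE_PER_M) -> float:
    """Cost = tokens / 1e6 * price, summed over input and output tokens."""
    return (input_tokens / 1_000_000) * input_price + (output_tokens / 1_000_000) * output_price


# Hypothetical workload: 200 requests/day for 30 days,
# averaging 8,000 input and 1,000 output tokens per request.
requests_per_month = 200 * 30
cost = monthly_api_cost(requests_per_month * 8_000, requests_per_month * 1_000)
print(f"${cost:,.2f}")  # prints $234.00
```

Here 48 million input tokens cost $144 and 6 million output tokens cost $90, so input dominates even though output tokens are five times more expensive per token, because every request carries its accumulated context.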

For enterprise deployment, OpenClaw supports deploying the Gateway on internal servers, allowing multiple employees to share the same agent infrastructure. This "Enterprise Gateway" model is a direction the community is actively exploring, but official documentation support remains relatively limited, requiring a degree of custom engineering investment.

5.2 Manus's SaaS Subscription Model

Manus uses a standard SaaS subscription model with virtually zero operational costs. Users only need a browser and a Manus account to start using it immediately. This makes Manus extremely competitive when time is the main cost: for SMBs without a technical team to support deployment, Manus's near-zero time-to-value is a strong argument.

However, Manus's pricing model can bring surprises at scale. The free plan's task limits (approximately X credits per month) are quickly exhausted by frequent users; the Pro plan's monthly fee is reasonable for individual users, but if an enterprise needs to pay for multiple seats, the total cost of ownership (TCO) can quickly exceed a self-hosted solution. Additionally, Manus currently does not offer enterprise private deployment options; all data is processed in Manus's cloud, which is a fundamental barrier for organizations with data sovereignty requirements.

5.3 Claude Code's API Consumption Model

Claude Code's cost structure is the most transparent: there is no framework licensing fee. Claude Code itself can be installed for free (`npm install -g @anthropic-ai/claude-code`), and when it is billed through the Anthropic API, costs depend entirely on conversation token consumption.

For a software engineer's daily use, Claude Code's monthly cost typically ranges from $50 to $200, depending on usage frequency and task complexity. For organizations, Anthropic offers an enterprise plan (Claude for Enterprise) under which data does not flow into Anthropic's training pipeline, and Claude Code can also run against Claude models hosted on Amazon Bedrock or Google Cloud Vertex AI, letting enterprises keep inference within their own cloud accounts and regions to meet data residency requirements.

5.4 Three-Framework Deployment Cost Comparison

| Cost Dimension | OpenClaw | Manus AI | Claude Code |
|---|---|---|---|
| Framework License Fee | Free (Open Source) | Subscription (Monthly) | Free (CLI Tool) |
| AI Model Cost | User-selected API (Variable) | Included in Subscription | Anthropic API (Variable) |
| Initial Setup Time | 1-8 hours | < 5 minutes | 15-30 minutes |
| Ongoing Maintenance | Medium-High | Very Low | Low |
| Enterprise Private Deployment | Supported (Custom Engineering) | Not Supported | Supported (Bedrock / Vertex) |
| Individual Monthly Cost Estimate | $20-$200 (by API usage) | $20-$50 (Pro plan) | $50-$200 (by usage) |
| 10-Person Team Monthly Estimate | $200-$2,000+ | $200-$500 | $500-$2,000 |

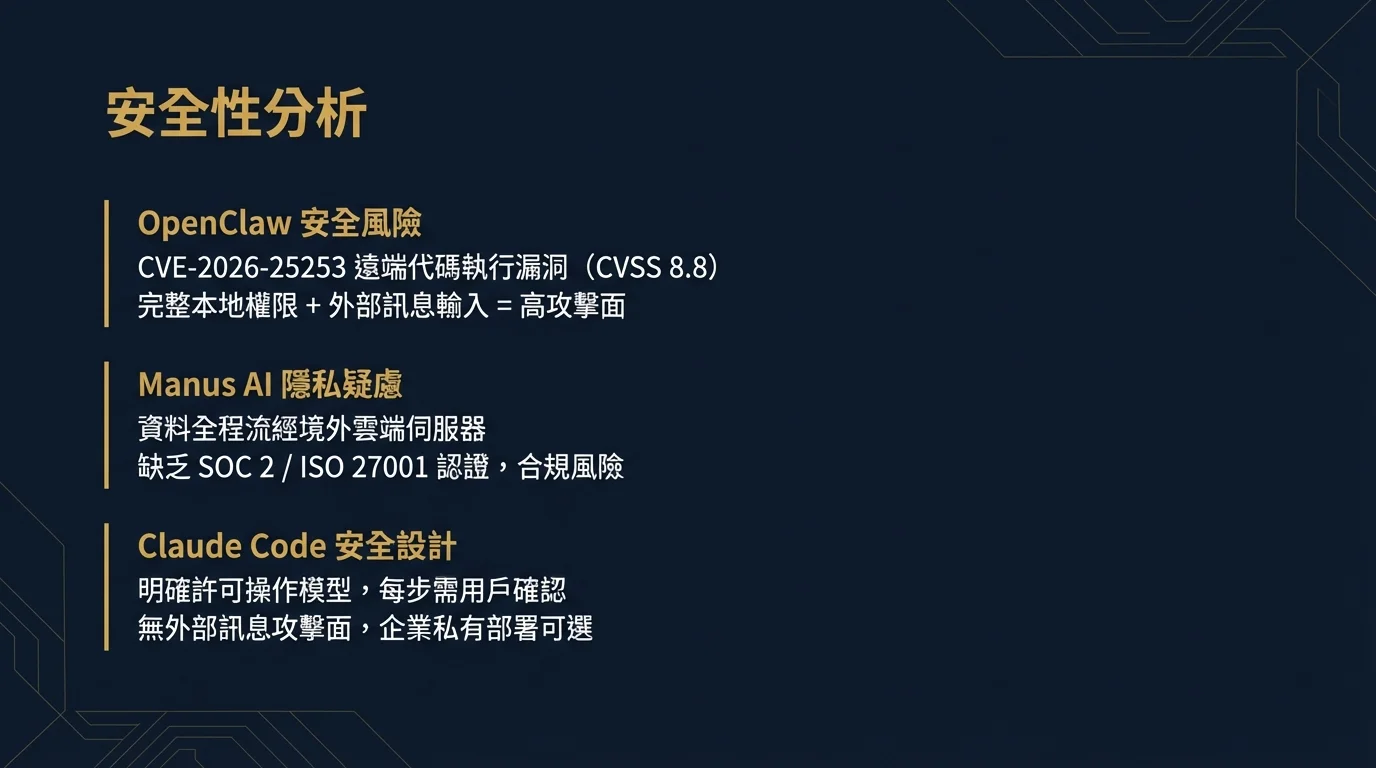

6. Security & Privacy Analysis

Among all comparison dimensions of the three frameworks, security and privacy is the one enterprise decision-makers should least overlook. Each framework has its unique security risk profile, and understanding these risks is essential for making responsible selection decisions.

6.1 OpenClaw's Security Risks: CVE-2026-25253

OpenClaw's security issues are currently the most widely discussed among the three frameworks. In early 2026, security researchers discovered CVE-2026-25253 -- a critical vulnerability allowing attackers to achieve remote code execution (RCE) on the OpenClaw host machine through a maliciously crafted message.[6]

The attack vector for this vulnerability was surprisingly simple: an attacker only needed to send a message containing a specially formatted payload to any messaging platform account monitored by the OpenClaw agent to potentially trigger arbitrary code execution on the host machine. Given that OpenClaw typically runs with the user's full permissions (not in a restricted sandbox environment), the potential damage from this vulnerability was extremely high.

Cisco's security blog raised a broader, systemic critique: the root of the problem was not an isolated vulnerability but OpenClaw's design philosophy itself. When an agent has "full local computer access" and simultaneously "accepts input from internet messaging platforms," any security flaw in the message path can lead to catastrophic consequences.[7]

The OpenClaw team patched this specific vulnerability in subsequent versions and introduced message source verification and payload sandboxing mechanisms. However, CrowdStrike's security research report pointed out that this vulnerability reveals a broader class of "Prompt Injection attack surfaces" that are fundamentally difficult to eliminate under OpenClaw's architecture.[6]
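Message source verification is commonly built on a shared-secret signature. The following is a hypothetical sketch using HMAC-SHA256 from Python's standard library; it is not the OpenClaw team's actual patch, and the secret, payload, and `sign`/`verify` names are illustrative.

```python
import hashlib
import hmac


def sign(secret: bytes, body: bytes) -> str:
    """Bridge side: compute an HMAC-SHA256 tag over the raw message body."""
    return hmac.new(secret, body, hashlib.sha256).hexdigest()


def verify(secret: bytes, body: bytes, tag: str) -> bool:
    """Gateway side: recompute the tag and compare it in constant time."""
    return hmac.compare_digest(sign(secret, body), tag)


if __name__ == "__main__":
    secret = b"hypothetical-shared-secret"  # in practice, load from a secrets store
    body = b'{"platform": "telegram", "text": "summarize my inbox"}'
    tag = sign(secret, body)
    print(verify(secret, body, tag))                 # prints True
    print(verify(secret, body + b" (edited)", tag))  # prints False
```

A valid signature only proves that a message arrived through a trusted bridge unaltered. An attacker who sends a prompt-injection payload through a legitimate messaging account still produces a correctly signed message, which is why this class of attack surface is hard to close at the architecture level.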

Recommended mitigation measures for enterprise OpenClaw deployment include:

- Run OpenClaw in an isolated virtual machine or container, not directly on employees' work machines

- Strictly limit the OpenClaw agent's file system access scope (using `chroot` or Docker volume mounts)

- Regularly update to the latest version and subscribe to OpenClaw's security advisories

- Only enable business-essential Skill plugins to minimize the attack surface

- Set additional manual confirmation requirements for high-sensitivity operations (such as executing shell commands or accessing specific directories)
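File system scoping is best enforced at the OS level with containers or volume mounts, but an application-level check is a useful second layer. The hypothetical `FileScope` class below (Python 3.9+ for `Path.is_relative_to`) resolves every requested path and rejects anything outside one workspace directory; the workspace path is an assumption. Because symlinks can change between the check and the actual file access, a check like this complements, rather than replaces, container isolation.

```python
from pathlib import Path


class FileScope:
    """Hypothetical guard restricting file access to one workspace directory."""

    def __init__(self, root: str) -> None:
        self.root = Path(root).resolve()

    def check(self, requested: str) -> Path:
        # resolve() collapses ".." and follows symlinks before the comparison,
        # so "../../etc/passwd" or an absolute path outside root is rejected.
        target = (self.root / requested).resolve()
        if not target.is_relative_to(self.root):  # Python 3.9+
            raise PermissionError(f"outside allowed scope: {requested}")
        return target

    def allowed(self, requested: str) -> bool:
        try:
            self.check(requested)
        except PermissionError:
            return False
        return True


if __name__ == "__main__":
    scope = FileScope("/tmp/agent-workspace")
    print(scope.allowed("notes/todo.md"))     # prints True
    print(scope.allowed("../../etc/passwd"))  # prints False
```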

6.2 Manus's Privacy & Compliance Concerns

Manus's security risks stem from the nature of its cloud architecture: all task descriptions, uploaded files, and all intermediate data generated during execution are processed on Manus's cloud servers.

For enterprises in Taiwan and the EU, this raises compliance concerns at two levels:

First, data protection compliance under Taiwan's Personal Data Protection Act (PDPA) and the EU's GDPR. If Manus tasks involve personally identifiable information (PII), transmitting this data to overseas servers may violate local data protection regulations. Manus's privacy policy requires careful legal review, particularly regarding data storage location, retention periods, and whether data is used for model training.

Second, trade secret leakage risk. If enterprises submit documents containing trade secrets in Manus tasks (such as financial forecasts, unreleased product specifications, or customer lists), the security of this data in Manus's cloud environment needs to be evaluated. Manus currently does not publicly provide SOC 2 Type II or ISO 27001 certification, which is a concern for organizations with strict information security governance requirements.

6.3 Claude Code's Sandbox Mechanism

By comparison, Claude Code's security design is the most rigorous among the three. It employs an "Explicit Permission" operational model -- the agent must pass explicit user confirmation before executing any operation with side effects (writing files, executing bash commands, or performing git operations).

Claude Code's security boundaries are clear: code and local data are not automatically sent to Anthropic (only context needed for inference is transmitted); operation confirmation requirements prevent agent runaway execution; and there is no external messaging platform attack surface (no WhatsApp or Telegram receiving ports means no injection attack vectors from messaging platforms).

Anthropic's Constitutional AI research also continuously strengthens the Claude model's ability to refuse dangerous instructions at the model level, forming model-layer security protection. This makes Claude Code's security more predictable compared to the other two frameworks.

7. Ecosystem & Community Activity

7.1 OpenClaw's Explosive Community Growth

OpenClaw's community growth rate is nothing short of phenomenal in open-source history. From 9,000 GitHub stars at first public release to 157,000 stars 60 days later, this growth was driven by extensive tech media coverage (Scientific American, CNBC, The Verge, etc.), viral Twitter/X sharing, and the inherently sensational concept of "AI agents taking over your computer."[1]

However, explosive community growth also brings quality challenges. The issue tracker has accumulated a large number of unresolved bug reports; the quality of third-party plugins in the Skills marketplace varies; and official documentation often lags behind actual features during rapid version iterations. These are inevitable problems for any fast-growing open-source project but are also maturity risks that enterprises should factor into their evaluation of adopting OpenClaw.

OpenClaw's plugin ecosystem currently has over 100 official and community plugins (Skills), covering:

- Productivity tools: Google Calendar, Notion, Todoist, Jira

- Communication integrations: Gmail, Outlook, Slack (as a Skill rather than a Channel)

- Development tools: GitHub, GitLab, Linear, Sentry

- Data tools: Google Sheets, Airtable, PostgreSQL (via MCP)

- AI tools: DALL-E 3, Diffusion Models, ElevenLabs

7.2 Manus's Closed-Source Ecosystem Challenge

As a closed-source SaaS, Manus has a fundamentally different kind of "ecosystem" from open-source frameworks. Its extensibility relies entirely on officially provided integrations; third parties cannot develop plugins or extend its core functionality. In the short term, this ensures a consistent user experience and quality control, but it is a long-term competitive weakness: open-source communities tend to accumulate far broader ecosystems over time than closed-source products.

Manus's community activity mainly takes the form of users sharing task examples in unofficial communities (such as Reddit and Twitter), rather than a developer-contributed technical ecosystem. This is sufficient for general users but is a clear limitation for enterprise users requiring deep customization.

7.3 Claude Code's MCP Ecosystem Building

Claude Code's ecosystem building revolves around the Model Context Protocol (MCP), introduced by Anthropic in late 2024. MCP is an open standard that allows third-party service developers to provide structured tool interfaces for Claude -- essentially a protocol that lets external systems "teach" Claude how to operate them.
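Under the hood, MCP messages are JSON-RPC 2.0. The Python sketch below shows the server side of two methods from the MCP specification, `tools/list` and `tools/call`, with results shaped as the spec describes (tool entries carrying an `inputSchema`, and call results returned as a `content` array). It is deliberately incomplete: the transport (stdio or HTTP), the `initialize` handshake, and input validation are omitted, and `lookup_ticket` and its data are hypothetical. A production server would normally be built with an official MCP SDK.

```python
import json
from typing import Any, Dict, Optional

# A tool description in the shape MCP uses for tools/list results:
# a name, a human-readable description, and a JSON Schema for the inputs.
# The tool itself is hypothetical.
TOOLS = [{
    "name": "lookup_ticket",
    "description": "Return the status of a support ticket by ID.",
    "inputSchema": {
        "type": "object",
        "properties": {"ticket_id": {"type": "string"}},
        "required": ["ticket_id"],
    },
}]

FAKE_TICKETS = {"T-1": "open", "T-2": "closed"}  # stand-in data


def _reply(req: Dict[str, Any], result: Optional[Dict[str, Any]] = None,
           error: Optional[Dict[str, Any]] = None) -> str:
    """Wrap a result or an error in a JSON-RPC 2.0 response envelope."""
    msg: Dict[str, Any] = {"jsonrpc": "2.0", "id": req.get("id")}
    if error is not None:
        msg["error"] = error
    else:
        msg["result"] = result
    return json.dumps(msg)


def handle(raw: str) -> str:
    """Answer tools/list and tools/call requests (transport and handshake omitted)."""
    req = json.loads(raw)
    method = req.get("method")
    if method == "tools/list":
        return _reply(req, result={"tools": TOOLS})
    if method == "tools/call":
        params = req.get("params", {})
        if params.get("name") != "lookup_ticket":
            return _reply(req, error={"code": -32602, "message": "Unknown tool"})
        ticket = params.get("arguments", {}).get("ticket_id", "")
        text = f"{ticket}: {FAKE_TICKETS.get(ticket, 'unknown')}"
        return _reply(req, result={"content": [{"type": "text", "text": text}],
                                   "isError": False})
    return _reply(req, error={"code": -32601, "message": "Method not found"})


if __name__ == "__main__":
    call = {"jsonrpc": "2.0", "id": 2, "method": "tools/call",
            "params": {"name": "lookup_ticket", "arguments": {"ticket_id": "T-1"}}}
    print(handle(json.dumps(call)))
```

The client never needs to know how `lookup_ticket` works internally; it discovers the tool and its input schema through `tools/list`, which is what lets the model learn to operate a new system.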

An increasing number of SaaS services (including GitHub, Figma, Linear, Stripe, etc.) now offer official MCP integrations, expanding Claude Code's tool ecosystem continuously. Anthropic's enterprise relationships and brand recognition also attract large software vendors to prioritize Claude integration support -- an advantage in quality consistency that OpenClaw's community-driven ecosystem finds difficult to match.

In terms of documentation quality, Anthropic's official documentation is generally regarded as well organized and accurate, and Claude Code's documentation is no exception. Whereas OpenClaw's documentation often lags behind version updates, Claude Code users can typically find accurate and clear reference material.

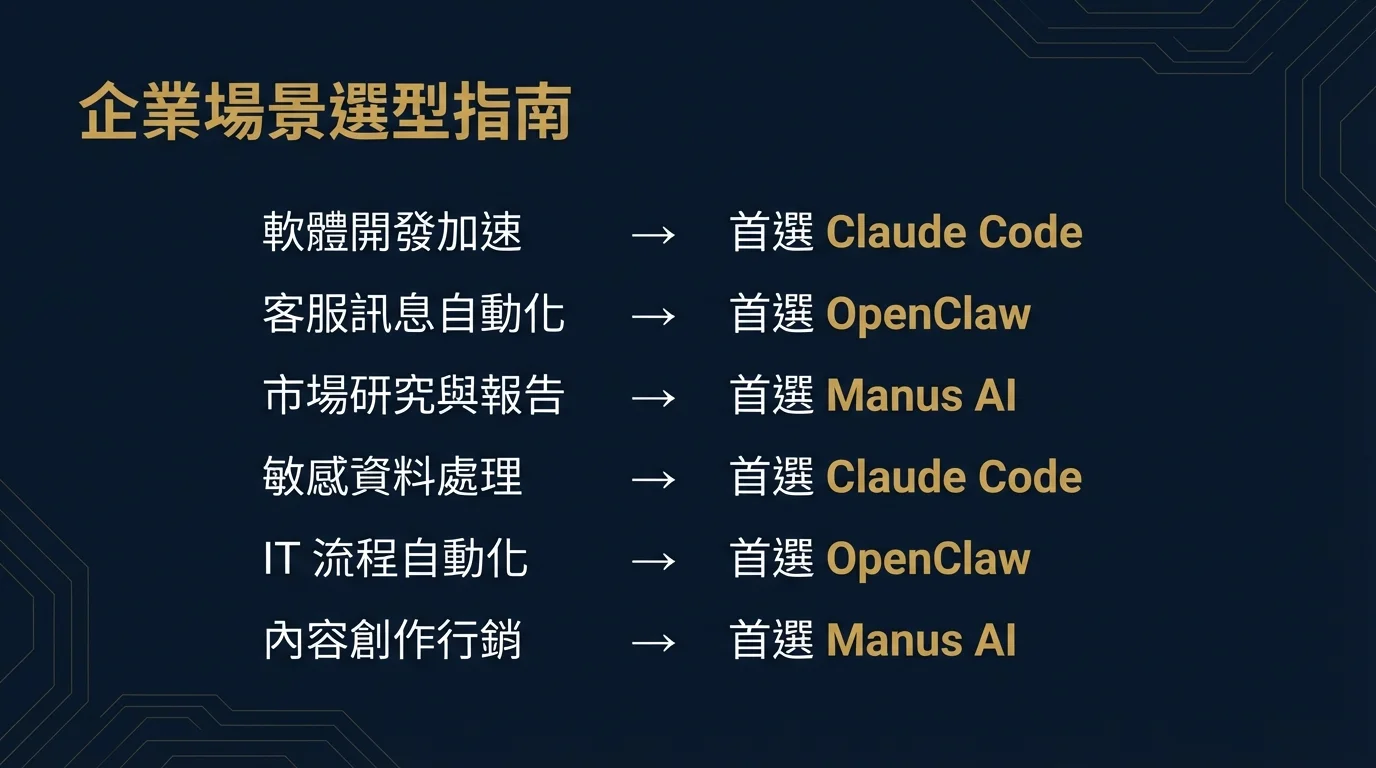

8. Enterprise Scenario Selection Guide

8.1 Selection Decision Matrix

No single framework suits all scenarios. The following decision matrix provides selection recommendations based on this article's analysis for eight typical enterprise use cases:

| Use Case | Primary Choice | Secondary Choice | Key Rationale |
|---|---|---|---|
| Software Development Acceleration | Claude Code | OpenClaw | Codebase semantic understanding, git integration, and test execution well beyond the other two frameworks |
| Customer Service Messaging Automation | OpenClaw | None | Native WhatsApp / LINE / Telegram integration; Manus and Claude Code do not support this |
| Market Research & Competitive Analysis | Manus AI | OpenClaw | Integrated web browsing, data aggregation, and report generation; easiest for non-technical users |
| Sensitive Data Processing (Finance/Healthcare) | Claude Code | OpenClaw (Self-hosted) | Enterprise private deployment (Bedrock/Vertex) keeps data within the organization's own cloud environment; self-hosted OpenClaw keeps it on-premises; avoid Manus cloud transmission |
| IT Process Automation | OpenClaw | Claude Code | Event-driven Hooks, full shell access, and multi-platform integration suit complex IT workflows |
| Content Creation & Marketing | Manus AI | OpenClaw | One-stop web research, copywriting, and formatted output; suited for non-technical marketers |
| Data Analysis & Reporting | Manus AI | Claude Code | Python sandbox execution with visualization output; Claude Code suits complex data engineering tasks |
| Open-Source Tech Validation & PoC | OpenClaw | Claude Code | Fully open-source and auditable, highly customizable, no license fees; suited for technical team proof-of-concept work |
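The matrix can also be encoded as a simple lookup table, which makes it easy to apply an organizational constraint (for example, "no data may flow through Manus's cloud") and fall back to the secondary choice. This is a hypothetical Python sketch: the use-case keys, the `recommend` function, and the fallback rule are illustrative assumptions layered on the table, not features of any of the three products.

```python
from typing import Optional

# The Section 8.1 decision matrix: use case -> (primary choice, secondary choice).
# None means the matrix lists no secondary choice. Keys are illustrative labels.
MATRIX: dict[str, tuple[str, Optional[str]]] = {
    "software_development": ("Claude Code", "OpenClaw"),
    "customer_service_messaging": ("OpenClaw", None),
    "market_research": ("Manus AI", "OpenClaw"),
    "sensitive_data": ("Claude Code", "OpenClaw"),  # secondary: self-hosted OpenClaw
    "it_process_automation": ("OpenClaw", "Claude Code"),
    "content_marketing": ("Manus AI", "OpenClaw"),
    "data_analysis": ("Manus AI", "Claude Code"),
    "open_source_poc": ("OpenClaw", "Claude Code"),
}


def recommend(use_case: str, excluded: frozenset[str] = frozenset()) -> Optional[str]:
    """Return the highest-ranked matrix choice not ruled out by a constraint.

    Returns None when the primary choice is excluded and no usable secondary
    choice exists, signalling that the matrix offers no fallback.
    """
    primary, secondary = MATRIX[use_case]
    for choice in (primary, secondary):
        if choice is not None and choice not in excluded:
            return choice
    return None


if __name__ == "__main__":
    print(recommend("data_analysis"))                           # Manus AI
    print(recommend("data_analysis", frozenset({"Manus AI"})))  # Claude Code
```

A `None` result (for instance, customer service messaging with OpenClaw excluded) is a useful signal in its own right: it marks a use case where none of the three frameworks fits the organization's constraints.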

8.2 Selection Recommendations by Organization Type

Technical Startups (Engineer-Led)

If your core use case is accelerating software development, Claude Code is the clear first choice. It integrates immediately into existing git workflows without requiring any process redesign. For automating development-adjacent tasks (CI/CD triggers, issue triage, documentation generation), you can extend capabilities by pairing Claude Code with MCP integrations.

If your scenarios are broader (including customer communication automation and multi-platform integration) and your team can handle deployment and maintenance, OpenClaw's flexibility will deliver greater long-term returns.

Mid-Size Enterprises (Business-Oriented)

For mid-size enterprises without strong technical teams who want to quickly extract business value from AI agents, Manus AI provides the shortest path to value realization. We recommend running a PoC on a specific business pain point (such as market research automation or report generation) and validating the results before evaluating whether to migrate to a more complex framework.

Important note: if the business involves any personal data or trade secrets, your legal team must complete a compliance review of Manus's privacy policy before adoption.

Large Enterprises (Compliance-Driven)

For large enterprises with strict information security compliance requirements (finance, healthcare, government-related), Claude Code on Anthropic's Enterprise plan (paired with private deployment through Amazon Bedrock or Google Cloud Vertex AI) is currently the option among the three that best meets enterprise information security standards. It offers SOC 2 certification, GDPR compliance, and a commitment that customer data is not used for model training.

If broader automation capabilities are needed (beyond code), consider self-hosting an OpenClaw Enterprise Gateway in an isolated environment, but budget for the corresponding security architecture design and ongoing operational resources.

8.3 Hybrid Strategy: Not Either/Or

The three frameworks are not mutually exclusive choices. Many mature technology organizations are exploring hybrid strategies:

- Engineering teams use Claude Code to accelerate daily development

- Business and marketing teams use Manus AI to complete research and content tasks

- IT and operations teams use OpenClaw (in controlled environments) to automate system maintenance and communication workflows

This "toolbox strategy" increases management complexity at first (separate account systems, cost tracking, and security policies), but it lets each scenario use the most suitable tool and avoids the anti-pattern of treating every problem as a nail.

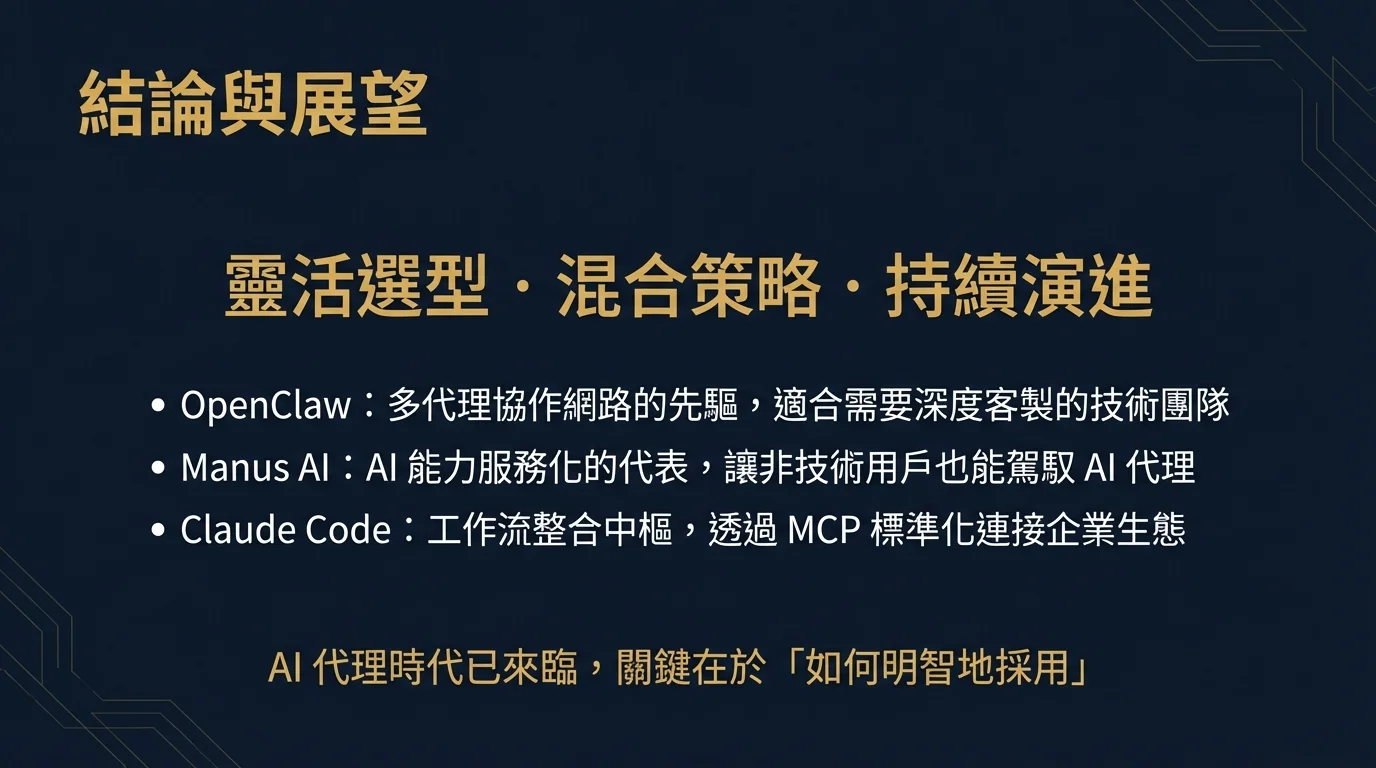

9. Conclusions & Recommendations

The 2026 AI agent market is at a critical transition from "early adopter experimentation" to "mainstream enterprise deployment." OpenClaw, Manus AI, and Claude Code represent three distinctly different technical routes and value propositions in this transition.

9.1 Core Advantage Summary for Each Framework

OpenClaw's core advantage lies in its flexibility and extensibility. Its fully open-source architecture minimizes vendor lock-in risk; its local-first design provides the strongest data sovereignty guarantees; native integration with 10+ messaging platforms makes it nearly irreplaceable in customer service automation and personal productivity scenarios; and its Agent Teams multi-agent collaboration architecture shows strong potential for complex business process automation. The price of these advantages, however, is a higher technical barrier, non-negligible security risks, and uneven quality across its community-driven ecosystem.

Manus AI's core advantage lies in its exceptional ease of use and zero operational burden. For non-technical business users, Manus is currently the most direct way to experience the value of AI agents. Its cloud sandbox execution model performs reliably on general tasks like web research, data aggregation, and report generation. However, its closed-source architecture, limited customization, and the privacy concerns raised by its cloud data flow make it unsuitable for enterprise scenarios that require deep customization or strict compliance.

Claude Code's core advantage lies in its deep adaptation to software engineering scenarios. Cross-file semantic understanding of codebases, seamless git workflow integration, clear operation confirmation mechanisms, and Anthropic Enterprise's private deployment options make it the best choice for technical organizations in code-related scenarios. The continued expansion of the MCP ecosystem also supports its long-term competitiveness.

9.2 Final Selection Decision Framework

Before making a selection decision, we recommend that organizations answer the following five key questions:

Question 1: What is your primary use case? If it's code-related tasks, choose Claude Code; if it's messaging platform integration, choose OpenClaw; if it's general research and reporting tasks, choose Manus.

Question 2: How strict are your data sovereignty requirements? If personal data or trade secrets are involved, Manus's cloud architecture is a red line; OpenClaw's local deployment or Claude Code's enterprise private deployment are compliant choices.

Question 3: How many technical resources can you invest in deployment and maintenance? If technical resources are limited, Manus is the lowest-friction choice; if you can self-host, OpenClaw delivers the highest ROI; Claude Code falls between the two.

Question 4: Does your budget model favor fixed or variable costs? Manus's subscription model provides predictable fixed costs, while OpenClaw's and Claude Code's API consumption costs vary with usage.

Question 5: What is your tolerance for vendor lock-in? If you are concerned about future migration costs, OpenClaw's fully open-source code and multi-model support provide maximum flexibility; Manus's closed-source SaaS model carries the highest lock-in risk; Claude Code falls between the two (the CLI tool itself is freely installable, but it depends heavily on the Claude API).
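One way to operationalize these five questions is a small scoring function: Question 2 acts as a hard filter that removes Manus when sensitive data is involved, Question 1 carries the largest weight, and Questions 3 to 5 act as tie-breakers that follow the orderings stated above. This is a hypothetical Python sketch; the `Answers` fields, the numeric weights, and the scoring scheme are assumptions for illustration, and a real evaluation would weigh these factors with far more nuance.

```python
from dataclasses import dataclass

FRAMEWORKS = ("OpenClaw", "Manus AI", "Claude Code")

# Hypothetical weight: the primary use case (Question 1) dominates the other answers.
USE_CASE_WEIGHT = 4
BEST_FOR = {"code": "Claude Code", "messaging": "OpenClaw", "research": "Manus AI"}


@dataclass
class Answers:
    use_case: str             # Q1: "code", "messaging", or "research"
    sensitive_data: bool      # Q2: personal data or trade secrets involved?
    can_self_host: bool       # Q3: can the team deploy and maintain it themselves?
    prefers_fixed_cost: bool  # Q4: predictable subscription over usage-based billing?
    avoid_lock_in: bool       # Q5: are future migration costs a major concern?


def rank(a: Answers) -> list[str]:
    """Rank the frameworks for a set of answers, highest score first."""
    scores = {name: 0 for name in FRAMEWORKS}

    # Q1: the framework matched to the primary use case gets the largest boost.
    scores[BEST_FOR[a.use_case]] += USE_CASE_WEIGHT

    # Q3: self-hosting favors OpenClaw, limited resources favor Manus; Claude Code sits between.
    scores["OpenClaw" if a.can_self_host else "Manus AI"] += 2
    scores["Claude Code"] += 1

    # Q4: fixed costs favor Manus's subscription; variable costs fit the API-based options.
    if a.prefers_fixed_cost:
        scores["Manus AI"] += 1
    else:
        scores["OpenClaw"] += 1
        scores["Claude Code"] += 1

    # Q5: lock-in risk is lowest for OpenClaw, intermediate for Claude Code, highest for Manus.
    if a.avoid_lock_in:
        scores["OpenClaw"] += 2
        scores["Claude Code"] += 1

    # Q2: sensitive data is a red line for Manus's cloud architecture, so drop it entirely.
    candidates = [n for n in FRAMEWORKS if not (a.sensitive_data and n == "Manus AI")]
    # sorted() is stable even with reverse=True, so ties keep the FRAMEWORKS order.
    return sorted(candidates, key=lambda n: scores[n], reverse=True)


if __name__ == "__main__":
    print(rank(Answers("research", False, False, True, False)))
    # ['Manus AI', 'Claude Code', 'OpenClaw']
```

Because the Question 2 filter removes Manus regardless of its score, a research-focused team handling personal data would see Claude Code ranked first, mirroring the "red line" described above.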

9.3 Outlook: The Evolution of AI Agent Frameworks

Over a longer time horizon, the three frameworks also represent different directions in AI agent evolution. OpenClaw's Agent Teams and Hooks mechanisms foreshadow a future of "multi-agent collaboration networks", in which complex tasks are completed by dynamic teams of specialized agents rather than by a single all-capable agent working alone. Manus represents the "AI as a Service" trend: democratizing AI agent capabilities for non-technical users through an extremely user-friendly interface. Claude Code combined with MCP points toward "AI agents as workflow integration hubs", where different tools and systems collaborate with AI agents through standardized protocols.

Gartner predicts that by 2027, AI agents with autonomous decision-making capabilities will move from today's technology labs into the core business processes of enterprises, becoming indispensable digital infrastructure like CRM and ERP.[8] Today's selection decisions are not just about choosing a tool -- they are shaping the foundation of your organization's AI agent capabilities for the next several years. Evaluate carefully, but don't miss the first-mover advantage in pursuit of perfection.

The AI agent era has arrived. The question is not "whether to adopt," but "how to adopt wisely."