- Diffusion Models first comprehensively surpassed GANs on FID metrics in 2021[7], becoming the new champion of image generation — and the open-sourcing of Stable Diffusion ignited an industry revolution in generative AI[8]

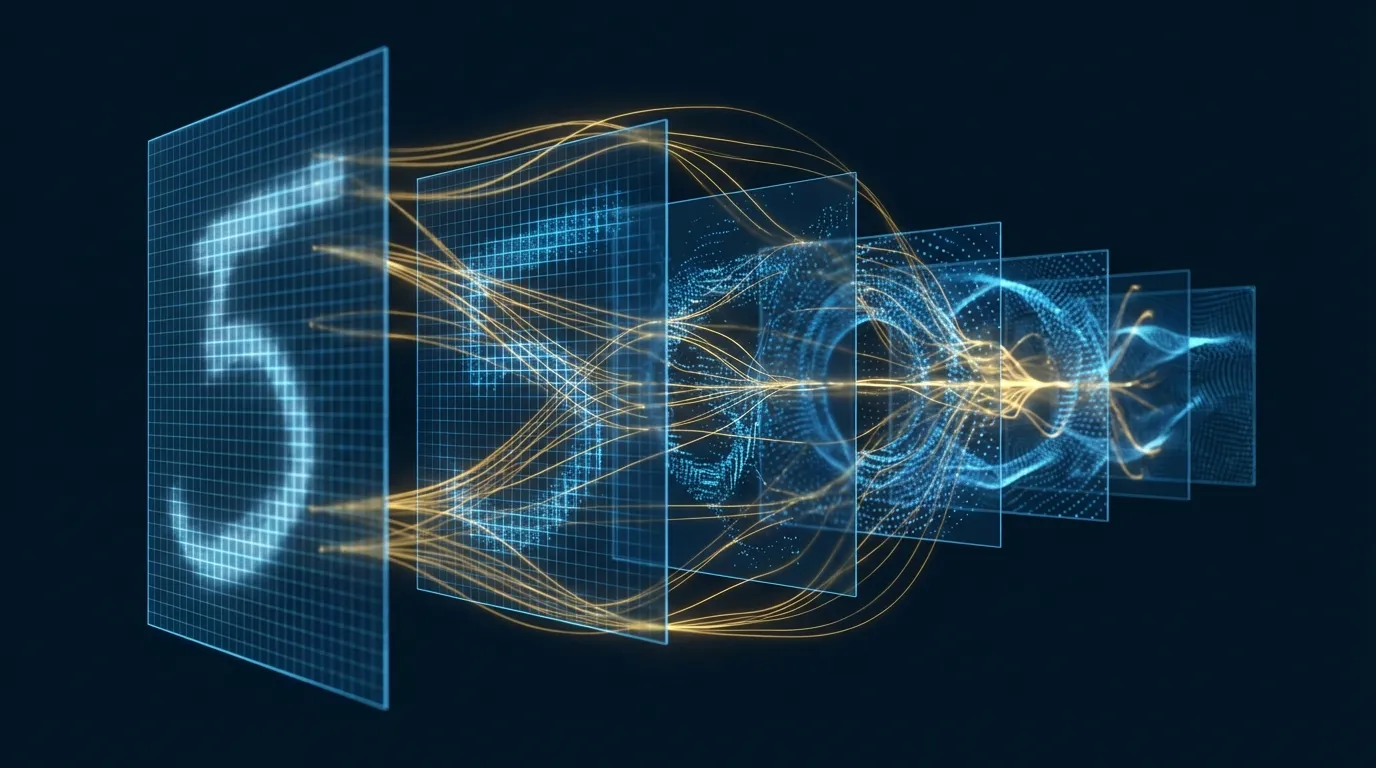

- The core principle is remarkably elegant: the forward process gradually adds noise to transform an image into pure noise, while the reverse process learns to denoise — the training objective is simply to "predict the noise," yet it produces high-quality images[1]

- The diffusion framework extends beyond images — Discrete Diffusion Models (D3PM)[14] and Diffusion-LM[15] have expanded this paradigm to text generation, enabling controllable language generation

- This article includes two Google Colab hands-on labs: Discrete Diffusion x BERT Text Generation (CPU) and Stable Diffusion x Text-to-Image Generation with Denoising Process Visualization (GPU)

1. Why Diffusion Models Changed the Game for Generative AI

Before 2020, the reigning champion of image generation was GANs (Generative Adversarial Networks). GANs could produce strikingly realistic images, but they suffered from three well-known weaknesses: unstable adversarial training, mode collapse (the generator learns only a few "patterns", limiting diversity), and no tractable log-likelihood for evaluating or comparing models.

In 2020, Ho et al. published DDPM (Denoising Diffusion Probabilistic Models)[1] at NeurIPS, turning the non-equilibrium thermodynamics-inspired framework proposed by Sohl-Dickstein et al. in 2015[2] into a simple and effective training scheme. The results drew wide attention: diffusion models approached GAN-level image quality while training stably and resting on a principled likelihood framework (a variational bound on the log-likelihood).

A year later, Dhariwal and Nichol[7] showed experimentally that diffusion models surpass GANs on FID, reaching FID 3.94 on ImageNet 256x256 versus 6.95 for BigGAN-deep, the strongest GAN at the time. The paper's title declared the changing of the guard outright: "Diffusion Models Beat GANs on Image Synthesis."

The development of diffusion models has since exploded: in 2022, Stability AI open-sourced Stable Diffusion[8], enabling anyone to generate high-quality images on consumer-grade GPUs; OpenAI released DALL·E 2[10]; Google published Imagen[11]; in 2023, SDXL[12] pushed resolution and aesthetic quality to new heights; and DiT[13] (Diffusion Transformer) signaled the architectural evolution toward replacing U-Net with Transformer, catalyzing next-generation models like Sora and FLUX.

But the influence of diffusion models extends far beyond images. Discrete diffusion models[14] have brought this framework into text generation, protein design, molecular generation, and other discrete data domains. Understanding the principles of diffusion models is equivalent to holding the key that opens the entire door to generative AI.

2. Mathematical Intuition: Forward Noising and Reverse Denoising

The core idea of diffusion models can be summarized in a single sentence: learning how to recover data from noise is equivalent to learning how to generate data.

2.1 Forward Process

Given a real image x₀, the forward process gradually adds Gaussian noise over T timesteps:

q(xₜ | xₜ₋₁) = N(xₜ; √(1-βₜ) · xₜ₋₁, βₜI)

where βₜ is the noise schedule. In DDPM's linear schedule, βₜ increases from β₁ = 0.0001 to β_T = 0.02; Improved DDPM[6] instead uses a cosine schedule that defines ᾱₜ (introduced below) directly. When T is sufficiently large (typically T = 1000), the final x_T is nearly pure Gaussian noise.

A key trick in DDPM is that there is no need to add noise step by step — you can jump directly from x₀ to the noisy version at any timestep t:

q(xₜ | x₀) = N(xₜ; √ᾱₜ · x₀, (1-ᾱₜ)I)

where ᾱₜ = ∏ᵢ₌₁ᵗ (1-βᵢ). This allows randomly sampling any timestep during training, greatly improving efficiency.
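A minimal NumPy sketch of the closed-form jump, assuming the DDPM linear schedule and T = 1000 quoted above. The helper names `linear_beta_schedule` and `q_sample` are illustrative, not taken from any library, and timesteps are 1-based to match the notation.

```python
import numpy as np

def linear_beta_schedule(T=1000, beta_start=1e-4, beta_end=0.02):
    """Linearly spaced noise schedule beta_1 .. beta_T."""
    return np.linspace(beta_start, beta_end, T)

def q_sample(x0, t, alpha_bar, rng):
    """Sample x_t ~ q(x_t | x_0) = N(sqrt(abar_t) * x_0, (1 - abar_t) * I) in one jump.

    t is 1-based (1..T) while alpha_bar is a 0-based array, so abar_t = alpha_bar[t - 1].
    Returns the noisy sample and the Gaussian noise that produced it.
    """
    a = alpha_bar[t - 1]
    eps = rng.standard_normal(x0.shape)
    return np.sqrt(a) * x0 + np.sqrt(1.0 - a) * eps, eps

betas = linear_beta_schedule()
alpha_bar = np.cumprod(1.0 - betas)          # abar_t = prod_{i<=t} (1 - beta_i)

rng = np.random.default_rng(0)
x0 = rng.uniform(-1.0, 1.0, size=(3, 8, 8))  # toy "image" with pixels scaled to [-1, 1]
x_500, eps = q_sample(x0, 500, alpha_bar, rng)

# 500 single steps scale the mean by prod sqrt(1 - beta_i), which equals sqrt(abar_500)
print(np.isclose(np.prod(np.sqrt(1.0 - betas[:500])), np.sqrt(alpha_bar[499])))
print(f"abar_1 = {alpha_bar[0]:.4f}, abar_T = {alpha_bar[-1]:.1e}")
```

With this schedule ᾱ_T ends up well below 1e-3, so x_T keeps almost no signal from x₀, which is why sampling can start from pure noise.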

2.2 Reverse Process

The reverse process attempts to "undo" the noise addition — progressively denoising from x_T (pure noise) back to x₀ (clean image). This process is parameterized by a neural network ε_θ:

p_θ(xₜ₋₁ | xₜ) = N(xₜ₋₁; μ_θ(xₜ, t), σₜ²I)

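where αₜ = 1 - βₜ and the mean μ_θ is computed from the model-predicted noise ε_θ(xₜ, t):

μ_θ(xₜ, t) = (1/√αₜ) · (xₜ - (βₜ / √(1-ᾱₜ)) · ε_θ(xₜ, t))

DDPM keeps the variance fixed rather than learning it (for example σₜ² = βₜ); Improved DDPM[6] later made it learnable.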
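The mean formula turns directly into one sampling step. This NumPy sketch assumes the fixed variance σₜ² = βₜ (one of DDPM's fixed choices) and computes μ_θ = (1/√αₜ)(xₜ - βₜ/√(1-ᾱₜ) · ε_θ) with αₜ = 1 - βₜ; `ddpm_reverse_step` is an illustrative name, and `eps_pred` stands in for the network output ε_θ(xₜ, t).

```python
import numpy as np

def ddpm_reverse_step(x_t, t, eps_pred, betas, rng):
    """One ancestral DDPM step x_t -> x_{t-1} given the predicted noise (t is 1-based)."""
    alphas = 1.0 - betas
    alpha_bar = np.cumprod(alphas)
    beta_t, alpha_t, abar_t = betas[t - 1], alphas[t - 1], alpha_bar[t - 1]
    # mu_theta = (x_t - beta_t / sqrt(1 - abar_t) * eps_pred) / sqrt(alpha_t)
    mean = (x_t - beta_t / np.sqrt(1.0 - abar_t) * eps_pred) / np.sqrt(alpha_t)
    if t == 1:
        return mean  # the last step returns the mean without adding noise
    return mean + np.sqrt(beta_t) * rng.standard_normal(x_t.shape)  # sigma_t^2 = beta_t

# Sanity check with a perfect noise predictor at t = 1
betas = np.linspace(1e-4, 0.02, 1000)
rng = np.random.default_rng(0)
x0 = rng.uniform(-1.0, 1.0, size=4)
eps = rng.standard_normal(4)
x1 = np.sqrt(1.0 - betas[0]) * x0 + np.sqrt(betas[0]) * eps  # sample from q(x_1 | x_0)
x0_hat = ddpm_reverse_step(x1, 1, eps, betas, rng)
print(np.allclose(x0_hat, x0))  # True
```

The check works because ᾱ₁ = α₁, so 1 - ᾱ₁ = β₁ and the mean collapses exactly to x₀ when the noise prediction is perfect. A full sampler applies this step for t = T, …, 1, starting from x_T ~ N(0, I).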

2.3 Training Objective: Predicting Noise

The key contribution of Ho et al.[1] was proving that a surprisingly simple training objective suffices:

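L_simple = E_{t, x₀, ε}[‖ε - ε_θ(√ᾱₜ · x₀ + √(1-ᾱₜ) · ε, t)‖²]

where t is sampled uniformly from {1, …, T}, ε ~ N(0, I), and the first argument of ε_θ is exactly the noisy xₜ produced by the closed-form forward jump.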

In plain language: take a random image, add a random amount of noise, and train the model to predict the exact noise that was added. That's it. This simplified objective drops the weighting terms from the ELBO derivation, yet in Ho et al.'s experiments it produced better sample quality.
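A NumPy sketch of one Monte Carlo estimate of L_simple, assuming a generic `model(x_t, t)` callable as a hypothetical stand-in for the U-Net. A real implementation would compute this in a framework such as PyTorch and backpropagate through the network; here the loss is only evaluated. It averages over elements instead of summing, which merely rescales the loss.

```python
import numpy as np

def l_simple(model, x0_batch, alpha_bar, rng):
    """Estimate E_{t, x0, eps} ||eps - eps_theta(x_t, t)||^2 on one batch."""
    B = x0_batch.shape[0]
    T = len(alpha_bar)
    t = rng.integers(1, T + 1, size=B)                      # uniform random timestep per sample
    a = alpha_bar[t - 1].reshape((B,) + (1,) * (x0_batch.ndim - 1))
    eps = rng.standard_normal(x0_batch.shape)               # the noise the model must predict
    x_t = np.sqrt(a) * x0_batch + np.sqrt(1.0 - a) * eps    # closed-form forward jump
    eps_pred = model(x_t, t)
    return np.mean((eps - eps_pred) ** 2)

betas = np.linspace(1e-4, 0.02, 1000)
alpha_bar = np.cumprod(1.0 - betas)
rng = np.random.default_rng(0)
x0 = rng.uniform(-1.0, 1.0, size=(256, 64))  # a toy batch of 256 flattened "images"

zero_model = lambda x_t, t: np.zeros_like(x_t)  # always predicts no noise
loss = l_simple(zero_model, x0, alpha_bar, rng)
print(loss)  # close to 1.0, the variance of eps
```

A model that always predicts zero scores about 1.0, the variance of ε, so any useful denoiser has to push the loss below that baseline.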

Song et al.[4] later unified DDPM and score-based models[3] under a Stochastic Differential Equation (SDE) framework (ICLR 2021), showing that both are discretizations of continuous-time SDEs: DDPM corresponds to the variance-preserving (VP) SDE and the score-based models of [3] to the variance-exploding (VE) SDE. The framework also yields a deterministic probability flow ODE, which laid the theoretical foundation for later ODE-solver samplers such as DPM-Solver.

3. Milestone Evolution: From DDPM to Stable Diffusion to DiT

| Year | Milestone | Core Contribution | Impact |
|---|---|---|---|
| 2015 | Sohl-Dickstein et al.[2] | Non-equilibrium thermodynamics-inspired diffusion framework | Theoretical foundation |
| 2019 | Score Matching[3] | Score-based generative model | Alternative theoretical perspective |
| 2020 | DDPM[1] | Simple noise-prediction training objective | The "GPT moment" for diffusion models |
| 2021 | DDIM[5] | Non-Markovian sampling, 1000 steps → 50 | First practical acceleration |
| 2021 | Improved DDPM[6] | Cosine noise schedule, learnable variance | Quality and log-likelihood improvements |
| 2021 | Diffusion Beats GANs[7] | Classifier guidance + architecture optimization | FID surpasses GANs for the first time |
| 2021 | Score SDE[4] | Unified DDPM and score-based models | Theoretical framework unification |
| 2022 | LDM / Stable Diffusion[8] | Diffusion in a compressed latent space (48x fewer dimensions at 512x512) | Open source ignites industry revolution |
| 2022 | DALL·E 2[10] | Prior that predicts CLIP image embeddings + diffusion decoder and cascaded upsamplers | Commercialization of text-to-image |
| 2022 | Imagen[11] | T5-XXL text encoder + cascaded super-resolution | Shows the importance of the text encoder's language understanding |
| 2022 | CFG[17] | Classifier-free guidance, one model for conditional and unconditional generation | Becomes the standard for conditional generation |
| 2023 | SDXL[12] | Larger U-Net + dual text encoders | Leap in aesthetic quality |
| 2023 | DiT[13] | Transformer replaces U-Net | Paves the way for Sora / FLUX |
| 2023 | LCM[16] | Consistency distillation, 2–4 step generation | Inference efficiency breakthrough |

4. Core Architecture of Image Diffusion Models

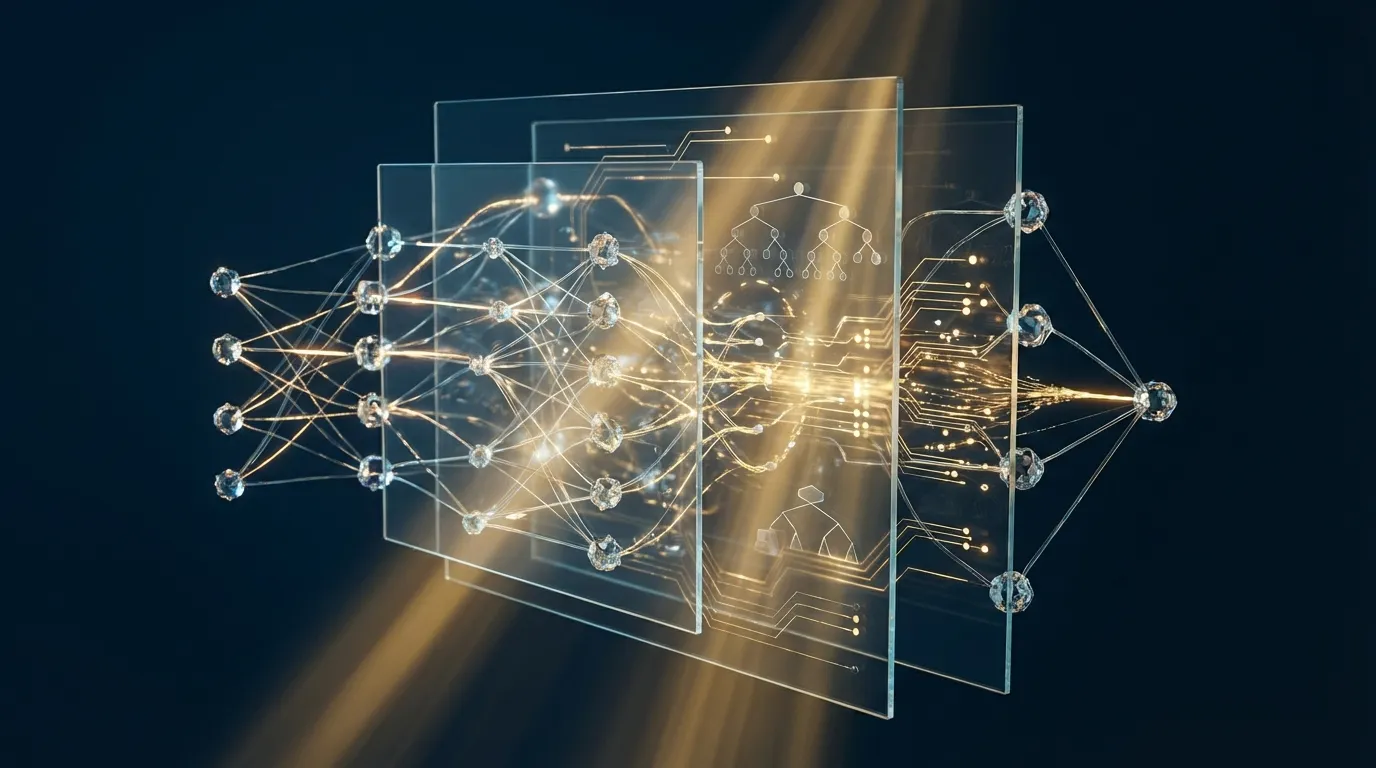

4.1 U-Net: From Pixel Space to Latent Space

The original DDPM architecture operates directly in pixel space — for a 256x256 image, each denoising step processes 256x256x3 = 196,608 dimensions. This makes both training and inference extremely expensive.

The Latent Diffusion Model (LDM) proposed by Rombach et al.[8] solved this problem: first, a pretrained VAE compresses the image into a low-dimensional latent space, then diffusion is performed in that latent space. A 512x512 image is compressed to a 64x64x4 latent representation — a 48x reduction in dimensionality. The diffusion model only needs to learn to denoise in this compressed space, dramatically reducing computation and memory requirements.
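The 48x figure is simple arithmetic. A small sketch, assuming the Stable Diffusion v1 autoencoder configuration (8x spatial downsampling, 4 latent channels); the function names are illustrative.

```python
def sd_latent_shape(height, width, downsample=8, latent_channels=4):
    """(channels, height, width) of the latent for an SD-v1-style autoencoder."""
    if height % downsample or width % downsample:
        raise ValueError("image sides must be divisible by the downsampling factor")
    return (latent_channels, height // downsample, width // downsample)

def compression_ratio(height, width, image_channels=3, downsample=8, latent_channels=4):
    """How many pixel-space values map onto one latent value."""
    c, h, w = sd_latent_shape(height, width, downsample, latent_channels)
    return (image_channels * height * width) / (c * h * w)

print(sd_latent_shape(512, 512))    # (4, 64, 64)
print(compression_ratio(512, 512))  # 48.0
```

The ratio works out to 3 × 8 × 8 / 4 = 48 at any resolution divisible by 8, so a 768x768 input also gets a 48x reduction (a 4x96x96 latent).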

Stable Diffusion is the open-source implementation of LDM, and its architecture consists of three core components:

- VAE Encoder/Decoder: Compresses images into latent space / reconstructs images from latent space

- U-Net: Performs denoising in latent space, containing residual blocks, self-attention layers, and cross-attention layers

- Text Encoder (CLIP / T5): Converts text prompts into conditioning vectors, injected into U-Net through cross-attention

4.2 Classifier-Free Guidance: Controlling Generation Quality

Classifier-Free Guidance (CFG), proposed by Ho and Salimans[17], is the standard method for conditional generation in diffusion models. The core idea: simultaneously train conditional and unconditional generation (randomly dropping the text condition), then at inference use the difference between the two to "amplify" the influence of the text condition:

ε̃ = ε_unconditional + w · (ε_conditional - ε_unconditional)

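The guidance scale w controls how strongly the text influences the image: w = 1 reduces to the plain conditional prediction (no extra guidance), values of roughly 5–12 are common (7.5 is the default in Diffusers' Stable Diffusion pipeline), and higher w produces images that match the text more closely but with reduced diversity and, at very high values, oversaturated colors.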
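A NumPy sketch of the guidance rule, assuming a hypothetical `denoiser(x_batch, t, emb_batch)` stand-in for the U-Net. Running the conditional and unconditional branches as one batch of two is a common implementation choice, not a requirement.

```python
import numpy as np

def cfg_combine(eps_uncond, eps_cond, w):
    """eps_tilde = eps_uncond + w * (eps_cond - eps_uncond).

    w = 1 returns eps_cond unchanged; w > 1 pushes the prediction further away from
    the unconditional one, in the direction the text condition points.
    """
    return eps_uncond + w * (eps_cond - eps_uncond)

def guided_eps(denoiser, x_t, t, cond_emb, uncond_emb, w):
    """Run both branches as one batch of two, then combine.

    `denoiser` is a hypothetical stand-in for the U-Net; `uncond_emb` plays the role
    of the empty-prompt embedding.
    """
    x_in = np.stack([x_t, x_t])
    emb_in = np.stack([uncond_emb, cond_emb])
    eps_u, eps_c = denoiser(x_in, t, emb_in)
    return cfg_combine(eps_u, eps_c, w)

# Toy denoiser whose output depends on the conditioning, just to exercise the code path
toy_denoiser = lambda x, t, emb: 0.1 * x + emb
x_t = np.ones(4)
cond, uncond = np.full(4, 0.5), np.zeros(4)
print(guided_eps(toy_denoiser, x_t, t=10, cond_emb=cond, uncond_emb=uncond, w=7.5))
```

Note the convention: Ho and Salimans write the same rule as (1 + w)·ε_cond - w·ε_uncond, so their w equals the w above minus one. The form used here matches the `guidance_scale` parameter in Diffusers.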

4.3 DiT: Transformers Take Over Diffusion Models

DiT (Diffusion Transformer) by Peebles and Xie[13] replaced U-Net with a standard Vision Transformer as the denoising network. They serialized latent space patches into a sequence, processed them with a Transformer, and injected timestep and conditioning information through adaLN-Zero (adaptive layer normalization).
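A NumPy sketch of the two ingredients described above, under simplifying assumptions: `patchify` turns a latent into a token sequence (patch size 2, DiT's finest setting), and `adaln_zero_residual` shows the adaLN-Zero idea with a single sublayer. Real DiT blocks also embed patches with a linear layer, add positional embeddings, and regress separate shift/scale/gate parameters for the attention and MLP sublayers; those parts are omitted, and all names are illustrative.

```python
import numpy as np

def patchify(latent, p=2):
    """(C, H, W) latent -> ((H/p) * (W/p), p * p * C) sequence of flattened patches."""
    C, H, W = latent.shape
    x = latent.reshape(C, H // p, p, W // p, p)   # split H and W into (blocks, within-block)
    x = x.transpose(1, 3, 2, 4, 0)                 # (H/p, W/p, p, p, C)
    return x.reshape((H // p) * (W // p), p * p * C)

def layer_norm(x, eps=1e-6):
    """LayerNorm without learned affine parameters (adaLN supplies shift/scale instead)."""
    mu = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mu) / np.sqrt(var + eps)

def adaln_zero_residual(x, cond, W_mod, sublayer):
    """x + gate * sublayer(LN(x) * (1 + scale) + shift), with shift/scale/gate regressed from cond.

    W_mod is zero-initialised in adaLN-Zero, so gate starts at 0 and the block is the identity.
    """
    shift, scale, gate = np.split(cond @ W_mod, 3)
    h = layer_norm(x) * (1.0 + scale) + shift
    return x + gate * sublayer(h)

rng = np.random.default_rng(0)
latent = rng.standard_normal((4, 32, 32))   # e.g. the 32x32x4 latent of a 256x256 image
tokens = patchify(latent, p=2)              # 16 x 16 = 256 tokens of size 2*2*4 = 16
cond = rng.standard_normal(8)               # stand-in for the timestep + class embedding
W_mod = np.zeros((8, 3 * tokens.shape[1]))  # zero init
out = adaln_zero_residual(tokens, cond, W_mod, sublayer=np.tanh)
print(tokens.shape, np.allclose(out, tokens))
```

Because the modulation weights start at zero, every block initially passes its input through unchanged; in the DiT paper this variant gave lower FID than in-context and cross-attention conditioning.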

The significance of DiT is that it scales predictably: in Peebles and Xie's experiments, models with more compute (Gflops) consistently achieved better FID, a clearer scaling trend than had been demonstrated for U-Net backbones. This result helped pave the way for large diffusion transformers such as OpenAI's Sora (video generation) and Black Forest Labs' FLUX.

5. The Intersection of Text and Diffusion: From CLIP Guidance to Discrete Text Diffusion

The relationship between diffusion models and text AI has two dimensions: first, text as a condition guiding image generation; second, diffusion applied directly to text itself.

5.1 Text Conditioning: How CLIP Enables Diffusion Models to "Understand" Language

Stable Diffusion uses CLIP[9] (Contrastive Language-Image Pretraining) as its text encoder. CLIP was trained on 400 million image-text pairs using contrastive learning, learning to map text and images into a shared representation space — semantically similar text and images are close in this space.

In Stable Diffusion, CLIP's text encoder converts prompts into a series of token vectors, which are injected into the denoising process through the U-Net's cross-attention layers. Each attention layer in the U-Net "queries" the text condition: "What should be drawn at this position?" — the text vectors provide the answer.
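The "query" picture above maps onto a standard cross-attention computation. A single-head NumPy sketch with random weights; the sizes are illustrative (77 text tokens of width 768 as produced by Stable Diffusion 1.x's CLIP encoder, a small 8x8 feature map with 320 channels), and the real U-Net uses multi-head attention plus an output projection back to the feature width.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)   # subtract max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def cross_attention(image_feats, text_tokens, Wq, Wk, Wv):
    """Single-head cross-attention.

    image_feats: (N_positions, C) U-Net features flattened over height x width -> queries
    text_tokens: (N_text, D_text) text-encoder outputs -> keys and values
    Returns the attended features (N_positions, d) and the attention map (N_positions, N_text).
    """
    Q = image_feats @ Wq
    K = text_tokens @ Wk
    V = text_tokens @ Wv
    attn = softmax(Q @ K.T / np.sqrt(Q.shape[-1]), axis=-1)  # each row: weights over text tokens
    return attn @ V, attn

rng = np.random.default_rng(0)
C, D_text, d = 320, 768, 64                      # illustrative sizes
image_feats = rng.standard_normal((8 * 8, C))    # an 8x8 feature map flattened to 64 positions
text_tokens = rng.standard_normal((77, D_text))  # 77 token vectors from the text encoder
Wq = rng.standard_normal((C, d)) / np.sqrt(C)
Wk = rng.standard_normal((D_text, d)) / np.sqrt(D_text)
Wv = rng.standard_normal((D_text, d)) / np.sqrt(D_text)
out, attn = cross_attention(image_feats, text_tokens, Wq, Wk, Wv)
print(out.shape, attn.shape)
```

Each row of `attn` is a probability distribution over the prompt's tokens, i.e. how much each word contributes to what is drawn at that spatial position.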

Google's Imagen[11] took a different approach: a frozen T5-XXL (a text-only large language model) as the text encoder. Imagen's experiments revealed an important insight: scaling up the text encoder improved sample fidelity and image-text alignment considerably more than scaling up the diffusion U-Net.

5.2 Discrete Diffusion: Bringing the Diffusion Paradigm to Text Generation

Standard diffusion models operate in continuous space (pixel values are continuous), but text is discrete (composed of token IDs). D3PM (Discrete Denoising Diffusion Probabilistic Models), proposed by Austin et al.[14], extended the diffusion framework to discrete state spaces.

The forward process in D3PM does not add Gaussian noise but instead gradually "corrupts" tokens — randomly replacing tokens with [MASK] or other tokens. The most intuitive variant is Absorbing State Diffusion: at each timestep, each token has a certain probability of being replaced with [MASK], until eventually all tokens are masked. The reverse process starts from an all-[MASK] sequence and progressively "fills in" the correct tokens.
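In D3PM's notation the forward step is q(xₜ | xₜ₋₁) = Cat(xₜ; p = xₜ₋₁Qₜ), with one-hot row vectors and a transition matrix Qₜ. A NumPy sketch of the absorbing-state Qₜ on a toy vocabulary (names, sizes and β values are illustrative assumptions) shows why tokens only ever move toward [MASK]:

```python
import numpy as np

def absorbing_transition(K, mask_id, beta):
    """Absorbing-state transition matrix (row = current token, column = next token).

    Ordinary tokens stay put with prob. 1 - beta and jump to [MASK] with prob. beta;
    the [MASK] row is all mass on [MASK], so it never leaves that state.
    """
    Q = (1.0 - beta) * np.eye(K)
    Q[:, mask_id] += beta
    return Q

def sample_xt(x0_ids, Q_bar, rng):
    """Draw x_t ~ Cat(one_hot(x_0) @ Q_bar) independently for every position."""
    probs = Q_bar[x0_ids]  # row lookup == one_hot(x_0) @ Q_bar
    return np.array([rng.choice(len(p), p=p) for p in probs])

K, m = 6, 5               # toy vocabulary: 5 ordinary tokens + [MASK] at index 5
betas = [0.1, 0.2, 0.3]   # illustrative per-step corruption rates
Q_bar = np.eye(K)
for b in betas:
    Q_bar = Q_bar @ absorbing_transition(K, m, b)  # Q_bar_t = Q_1 Q_2 ... Q_t

p_masked = Q_bar[0, m]    # prob. that token 0 has been absorbed after 3 steps
print(np.isclose(p_masked, 1 - np.prod([1 - b for b in betas])))

rng = np.random.default_rng(0)
x0_ids = np.array([0, 1, 2, 3, 4])
x_t = sample_xt(x0_ids, Q_bar, rng)  # each position is either its original token or [MASK]
```

The probability of being masked after t steps is 1 - ∏(1 - βᵢ), the discrete counterpart of 1 - ᾱₜ in the Gaussian case, so a schedule that drives it to 1 at t = T guarantees the all-[MASK] starting point used by the reverse process.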

Diffusion-LM by Li et al.[15] took a different approach: performing diffusion in the continuous token embedding space, then using a rounding step to map continuous vectors back to discrete tokens. Diffusion-LM's unique advantage is controllability — gradient guidance can be used to make generated text satisfy specific constraints (such as syntactic structure or semantic attributes), which is difficult for autoregressive language models to achieve.
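The rounding step maps each denoised vector back to a vocabulary token. The NumPy sketch below uses plain nearest-neighbour rounding against a toy embedding table, a simplification of Diffusion-LM's learned rounding (which trains the embeddings and a softmax rounding term jointly); names and sizes are illustrative.

```python
import numpy as np

def round_to_tokens(x, embedding):
    """Map each continuous vector in x (L, d) to the id of the nearest row of embedding (V, d)."""
    # ||x - e||^2 = ||x||^2 - 2 x.e + ||e||^2; ||x||^2 is the same for every candidate, so drop it
    scores = 2.0 * x @ embedding.T - (embedding ** 2).sum(axis=1)
    return scores.argmax(axis=1)

rng = np.random.default_rng(0)
V, d = 50, 16
emb = rng.standard_normal((V, d))                           # toy embedding table
ids = np.array([3, 17, 42, 8])
x = emb[ids] + 0.05 * rng.standard_normal((len(ids), d))    # slightly "noisy" denoiser output
print(round_to_tokens(x, emb))
```

Because rounding happens only at the end, gradient-based guidance can act on the continuous vectors throughout sampling, which is the source of the controllability described above.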

Notably, BERT's Masked Language Modeling can be viewed as a single-step denoiser for absorbing-state discrete diffusion: given a partially masked sequence, it predicts the tokens at the masked positions. BERT was trained at a single, low noise level (about 15% of tokens masked), so it is not a fully trained diffusion model, but wrapping it in an iterative sampling loop still yields a simple, working discrete text diffusion system.

6. Hands-on Lab 1: Discrete Diffusion x BERT Text Generation (Google Colab)

This lab demonstrates how to use BERT as a denoiser for discrete diffusion text generation, starting from an all-[MASK] sequence and progressively "denoising" it into coherent text. It is a simplified, training-free approximation of D3PM[14] absorbing-state diffusion: the pretrained BERT stands in for a denoiser that would normally be trained across all noise levels.

Open Google Colab (runs on CPU), create a new Notebook, and paste the following code blocks in order:

6.1 Environment Setup

# ★ Install required packages ★

!pip install transformers torch -q

6.2 Load BERT and Understand Discrete Diffusion

import torch
import torch.nn.functional as F
from transformers import BertTokenizer, BertForMaskedLM
import numpy as np

# ★ Load pretrained BERT (Masked Language Model = single-step denoiser for discrete diffusion) ★
tokenizer = BertTokenizer.from_pretrained('bert-base-uncased')
model = BertForMaskedLM.from_pretrained('bert-base-uncased').eval()

MASK_ID = tokenizer.mask_token_id
CLS_ID = tokenizer.cls_token_id
SEP_ID = tokenizer.sep_token_id

print(f"BERT vocab size: {tokenizer.vocab_size}")
print(f"[MASK] token ID: {MASK_ID}")
print(f"Model parameters: {sum(p.numel() for p in model.parameters())/1e6:.0f}M")

6.3 Forward Process: Progressively Corrupting Text

# ★ Forward diffusion process: progressively replace tokens with [MASK] ★
def forward_diffusion(text, num_steps=10, seed=0):
    """
    Simulate the forward process of absorbing-state discrete diffusion.
    Each token draws one random threshold, so a token masked at step t stays
    masked at every later step ([MASK] is absorbing).
    """
    tokens = tokenizer.encode(text, return_tensors='pt')[0]  # [seq_len], includes [CLS] and [SEP]
    content_tokens = tokens[1:-1].clone()                    # exclude [CLS] and [SEP]
    seq_len = len(content_tokens)
    generator = torch.Generator().manual_seed(seed)
    u = torch.rand(seq_len, generator=generator)             # one fixed random draw per token

    print(f"Original text: {text}")
    print(f"Token count: {seq_len}")
    print("-" * 60)

    for step in range(num_steps + 1):
        mask_prob = step / num_steps
        mask = u < mask_prob                                 # masks only grow as t increases
        noisy = content_tokens.clone()
        noisy[mask] = MASK_ID
        decoded = tokenizer.decode(noisy)
        n_masked = mask.sum().item()
        print(f"t={step:2d} (mask {mask_prob:.0%}, {n_masked:2d}/{seq_len} masked): {decoded}")
    return tokens

# ★ Demonstrate the forward process ★
print("【Forward Diffusion Process: Text → Noise】")
print("=" * 60)
forward_diffusion("The quick brown fox jumps over the lazy dog", num_steps=8)

6.4 Reverse Process: Generating Text from Noise

# ★ Reverse diffusion sampling: progressively denoise from all-[MASK] to text ★
@torch.no_grad()
def reverse_diffusion_step(token_ids, temperature=1.0):
    """
    One reverse diffusion step: use BERT to predict every [MASK] position,
    then unmask only the position with the highest confidence.
    Returns the updated token IDs (unchanged if no [MASK] remains).
    """
    logits = model(token_ids.unsqueeze(0)).logits[0]  # [seq_len, vocab_size]
    # Find all [MASK] positions
    mask_positions = (token_ids == MASK_ID).nonzero(as_tuple=True)[0]
    if len(mask_positions) == 0:
        return token_ids
    # Compute prediction confidence at each [MASK] position
    probs = F.softmax(logits[mask_positions] / temperature, dim=-1)
    max_probs, predictions = probs.max(dim=-1)
    best = max_probs.argmax()
    new_ids = token_ids.clone()
    new_ids[mask_positions[best]] = predictions[best]
    return new_ids

def generate_text(seq_length=12, num_steps=15, temperature=1.0, top_k=0):
    """
    Complete discrete diffusion sampling: progressively denoise from all-[MASK].
    Each step unmasks the positions with the highest confidence.
    """
    # Initial state: [CLS] + all [MASK] + [SEP]
    ids = torch.full((seq_length + 2,), MASK_ID, dtype=torch.long)
    ids[0] = CLS_ID
    ids[-1] = SEP_ID

    print(f"\n【Reverse Diffusion Process: Noise → Text】(length={seq_length}, steps={num_steps})")
    print("=" * 60)

    for step in range(num_steps):
        # Compute BERT's predictions for every position
        with torch.no_grad():
            logits = model(ids.unsqueeze(0)).logits[0]
        mask_positions = (ids == MASK_ID).nonzero(as_tuple=True)[0]
        # Exclude [CLS] and [SEP]
        mask_positions = mask_positions[(mask_positions > 0) & (mask_positions < len(ids) - 1)]
        if len(mask_positions) == 0:
            break

        # Never fill a position with a special token such as [MASK], [CLS], [SEP] or [PAD]
        masked_logits = logits[mask_positions]
        masked_logits[:, tokenizer.all_special_ids] = float("-inf")
        probs = F.softmax(masked_logits / temperature, dim=-1)

        if top_k > 0:
            # Top-k sampling
            topk_probs, topk_indices = probs.topk(top_k, dim=-1)
            topk_probs = topk_probs / topk_probs.sum(dim=-1, keepdim=True)
            sampled = torch.multinomial(topk_probs, 1).squeeze(-1)
            predictions = topk_indices.gather(1, sampled.unsqueeze(-1)).squeeze(-1)
            confidence = topk_probs.gather(1, sampled.unsqueeze(-1)).squeeze(-1)
        else:
            # Greedy selection (maximum probability)
            confidence, predictions = probs.max(dim=-1)

        # Spread the remaining masks evenly over the remaining steps
        # (the final step unmasks everything that is left)
        total_masks = len(mask_positions)
        n_unmask = max(1, total_masks // (num_steps - step))

        # Unmask the highest-confidence positions
        _, top_indices = confidence.topk(min(n_unmask, total_masks))
        ids[mask_positions[top_indices]] = predictions[top_indices]

        # Display current state
        text = tokenizer.decode(ids[1:-1])
        remaining = (ids == MASK_ID).sum().item()
        print(f"Step {step+1:2d} ({remaining:2d} masks left): {text}")

    final_text = tokenizer.decode(ids[1:-1])
    print(f"\nFinal: {final_text}")
    return final_text

# ★ Greedy generation (deterministic) ★

generate_text(seq_length=10, num_steps=12, temperature=1.0)

6.5 Top-k Sampling and Temperature Control

# ★ Top-k sampling (increases diversity) ★
print("\n" + "=" * 60)
print("Top-k Sampling (k=5, temperature=1.2): multiple runs produce different results")
print("=" * 60)
for i in range(4):
    result = generate_text(seq_length=10, num_steps=12, temperature=1.2, top_k=5)
    print()

# ★ Key observations ★
# 1. Greedy mode produces the same result each time; top-k sampling varies
# 2. Higher temperature yields more diverse but potentially less coherent output
# 3. BERT tends to generate common phrase patterns

6.6 Conditional Generation: Text Infilling and Continuation

# ★ Conditional generation: given partial text, let diffusion fill in the rest ★
def conditional_generate(template, num_steps=12, temperature=1.0, top_k=0):
    """
    Conditional discrete diffusion: preserve known tokens, only denoise [MASK] positions.
    Similar in spirit to Diffusion-LM's infilling capability.
    """
    ids = tokenizer.encode(template, return_tensors='pt')[0]
    print(f"\n【Conditional Generation】Template: {template}")
    print("=" * 60)

    for step in range(num_steps):
        with torch.no_grad():
            logits = model(ids.unsqueeze(0)).logits[0]
        mask_positions = (ids == MASK_ID).nonzero(as_tuple=True)[0]
        if len(mask_positions) == 0:
            break

        # Never fill a position with a special token such as [MASK], [CLS] or [SEP]
        masked_logits = logits[mask_positions]
        masked_logits[:, tokenizer.all_special_ids] = float("-inf")
        probs = F.softmax(masked_logits / temperature, dim=-1)

        if top_k > 0:
            topk_probs, topk_indices = probs.topk(top_k, dim=-1)
            topk_probs = topk_probs / topk_probs.sum(dim=-1, keepdim=True)
            sampled = torch.multinomial(topk_probs, 1).squeeze(-1)
            predictions = topk_indices.gather(1, sampled.unsqueeze(-1)).squeeze(-1)
            confidence = topk_probs.gather(1, sampled.unsqueeze(-1)).squeeze(-1)
        else:
            confidence, predictions = probs.max(dim=-1)

        # Spread the remaining masks evenly over the remaining steps
        total_masks = len(mask_positions)
        n_unmask = max(1, total_masks // max(1, num_steps - step))
        _, top_idx = confidence.topk(min(n_unmask, total_masks))
        ids[mask_positions[top_idx]] = predictions[top_idx]

        text = tokenizer.decode(ids[1:-1])
        remaining = (ids == MASK_ID).sum().item()
        print(f"Step {step+1:2d} ({remaining:2d} masks): {text}")

    final = tokenizer.decode(ids[1:-1])
    print(f"Result: {final}")
    return final

# ★ Example 1: Sentence infilling ★
conditional_generate(
    "The [MASK] [MASK] [MASK] is the most [MASK] [MASK] in the world.",
    num_steps=8,
)

# ★ Example 2: Topic-guided ★
conditional_generate(
    "In machine learning, [MASK] [MASK] [MASK] [MASK] are used to [MASK] [MASK] [MASK].",
    num_steps=8,
)

# ★ Example 3: Sentiment-guided ★
conditional_generate(
    "I absolutely love [MASK] [MASK] [MASK] because [MASK] [MASK] [MASK] [MASK].",
    num_steps=8, temperature=1.1, top_k=5,
)

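# ★ Core Observations ★
# Discrete Diffusion vs. Autoregressive (GPT):
# - Autoregressive: generates left-to-right, one token at a time, and cannot go back to revise earlier tokens
# - Discrete Diffusion: sees all positions at once and fills high-confidence positions before low-confidence ones
# - Diffusion advantages: natural support for infilling, controllable generation, and global context
# - Caveat: like autoregressive decoding, this simplified sampler never re-masks a token once it is filled,
#   and BERT was trained with only ~15% of tokens masked, so quality drops when most positions are [MASK]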

7. Hands-on Lab 2: Stable Diffusion x Text-to-Image Generation (Google Colab)

This lab uses HuggingFace Diffusers to load Stable Diffusion, demonstrating text-to-image generation, guidance scale control, and step-by-step visualization of the diffusion denoising process.

Open Google Colab, ensure you have switched to a GPU runtime (Runtime > Change runtime type > T4 GPU), create a new Notebook, and paste the following code blocks in order:

7.1 Environment Setup

# ★ Install Diffusers ecosystem ★

!pip install diffusers transformers accelerate safetensors -q

7.2 Load Stable Diffusion Pipeline

import torch
from diffusers import StableDiffusionPipeline
import matplotlib.pyplot as plt
import numpy as np

# ★ Load Stable Diffusion 1.5 (float16, ~3.5GB VRAM) ★
# The original "runwayml/stable-diffusion-v1-5" repo has been removed from the Hugging Face Hub;
# "stable-diffusion-v1-5/stable-diffusion-v1-5" hosts a mirror of the same weights.
pipe = StableDiffusionPipeline.from_pretrained(
    "stable-diffusion-v1-5/stable-diffusion-v1-5",
    torch_dtype=torch.float16,
    safety_checker=None,  # Disabled for speed; enable for production deployment
).to("cuda")

# Enable memory optimization
pipe.enable_attention_slicing()

print(f"Pipeline loaded on: {pipe.device}")
print(f"U-Net parameters: {sum(p.numel() for p in pipe.unet.parameters())/1e6:.0f}M")
print(f"Text encoder: {sum(p.numel() for p in pipe.text_encoder.parameters())/1e6:.0f}M")
print(f"VAE: {sum(p.numel() for p in pipe.vae.parameters())/1e6:.0f}M")

7.3 Basic Text-to-Image Generation

# ★ Text-to-image generation ★
prompt = "A serene Japanese garden with cherry blossoms, koi pond, wooden bridge, soft morning light, photorealistic"
negative_prompt = "blurry, low quality, distorted, deformed"

image = pipe(
    prompt=prompt,
    negative_prompt=negative_prompt,
    num_inference_steps=30,
    guidance_scale=7.5,
    width=512,
    height=512,
    generator=torch.Generator("cuda").manual_seed(42),
).images[0]

plt.figure(figsize=(8, 8))
plt.imshow(image)
plt.title("Stable Diffusion 1.5: Text-to-Image", fontsize=14)
plt.axis("off")
plt.show()

7.4 Guidance Scale Comparison

# ★ Impact of Classifier-Free Guidance Scale ★
# w=1 (guidance disabled) → w=5 → w=7.5 (standard) → w=15 (strong guidance)
guidance_scales = [1.0, 5.0, 7.5, 15.0]
prompt = "A futuristic cyberpunk city at night, neon lights, rain reflections"
seed = 123

fig, axes = plt.subplots(1, 4, figsize=(24, 6))
for i, scale in enumerate(guidance_scales):
    img = pipe(
        prompt=prompt,
        num_inference_steps=25,
        guidance_scale=scale,
        width=512, height=512,
        generator=torch.Generator("cuda").manual_seed(seed),
    ).images[0]
    axes[i].imshow(img)
    axes[i].set_title(f"guidance_scale = {scale}", fontsize=13)
    axes[i].axis("off")

plt.suptitle("Classifier-Free Guidance Scale Comparison", fontsize=16)
plt.tight_layout()
plt.show()

# ★ Key observations ★
# w=1.0: Guidance is disabled (conditional prediction only); images follow the prompt loosely and look less coherent
# w=5-7.5: Best balance between text guidance and diversity
# w=15+: Strongly follows text, but colors become oversaturated and details distorted

7.5 Step-by-Step Denoising Process Visualization

# ★ Visualize the reverse denoising process of diffusion ★
# Use a callback to capture intermediate states at each step
from diffusers import DDIMScheduler

# Switch to DDIM scheduler (supports fewer steps and is deterministic)
pipe.scheduler = DDIMScheduler.from_config(pipe.scheduler.config)

intermediates = []

def callback_fn(pipe, step, timestep, callback_kwargs):
    """Record latent variables at each step, decode for visualization"""
    latents = callback_kwargs["latents"]
    # Decode latent variables to images
    with torch.no_grad():
        decoded = pipe.vae.decode(latents / pipe.vae.config.scaling_factor)
    img = decoded.sample[0].cpu().float()
    img = (img / 2 + 0.5).clamp(0, 1).permute(1, 2, 0).numpy()
    intermediates.append((step, timestep.item(), img))
    return callback_kwargs

# Generate and capture intermediate steps
intermediates.clear()
prompt = "A majestic mountain landscape with aurora borealis, oil painting style"
final_image = pipe(
    prompt=prompt,
    num_inference_steps=20,
    guidance_scale=7.5,
    width=512, height=512,
    generator=torch.Generator("cuda").manual_seed(77),
    callback_on_step_end=callback_fn,
).images[0]

# ★ Visualize the denoising process ★
n_show = min(8, len(intermediates))
indices = np.linspace(0, len(intermediates) - 1, n_show, dtype=int)
fig, axes = plt.subplots(1, n_show, figsize=(n_show * 3.5, 3.5))
for i, idx in enumerate(indices):
    step, timestep, img = intermediates[idx]
    axes[i].imshow(img)
    axes[i].set_title(f"Step {step}\nt={timestep}", fontsize=10)
    axes[i].axis("off")

plt.suptitle("Diffusion Denoising Process: From Noise to Image", fontsize=14)
plt.tight_layout()
plt.show()

print(f"\nTotal {len(intermediates)} denoising steps")
print("Observation: Early stages form rough composition → Middle stages establish color and structure → Late stages refine details and textures")

7.6 Image-to-Image: Style Transfer

from diffusers import StableDiffusionImg2ImgPipeline
from PIL import Image

# ★ Load img2img pipeline (shares model weights) ★
pipe_img2img = StableDiffusionImg2ImgPipeline(**pipe.components).to("cuda")

# Use the previously generated image as input
init_image = final_image.resize((512, 512))

# ★ Style transfer at different strength levels ★
strengths = [0.3, 0.5, 0.7, 0.9]
style_prompt = "A majestic mountain landscape, watercolor painting, artistic brush strokes, vibrant colors"

fig, axes = plt.subplots(1, 5, figsize=(30, 6))

# Original image
axes[0].imshow(init_image)
axes[0].set_title("Original", fontsize=13)
axes[0].axis("off")

for i, strength in enumerate(strengths):
    styled = pipe_img2img(
        prompt=style_prompt,
        image=init_image,
        strength=strength,
        num_inference_steps=25,
        guidance_scale=7.5,
        generator=torch.Generator("cuda").manual_seed(42),
    ).images[0]
    axes[i + 1].imshow(styled)
    axes[i + 1].set_title(f"strength = {strength}", fontsize=13)
    axes[i + 1].axis("off")

plt.suptitle("Image-to-Image Style Transfer: strength controls the 'degree of change'", fontsize=15)
plt.tight_layout()
plt.show()

# ★ Key observations ★
# strength=0.3: Only fine-tunes color style, preserves original composition
# strength=0.5: Style change is noticeable, but main structure remains intact
# strength=0.7: Major redrawing, only preserving rough layout
# strength=0.9: Almost entirely new generation, only "inspired" by the original

7.7 Text Embedding Exploration

# ★ Explore how CLIP text embeddings influence generation ★
import torch.nn.functional as F
from transformers import CLIPTextModel, CLIPTokenizer

# Same CLIP ViT-L/14 text encoder that Stable Diffusion 1.5 uses
clip_tokenizer = CLIPTokenizer.from_pretrained("openai/clip-vit-large-patch14")
clip_model = CLIPTextModel.from_pretrained("openai/clip-vit-large-patch14").to("cuda")

def get_text_embedding(text):
    tokens = clip_tokenizer(text, return_tensors="pt", padding=True, truncation=True).to("cuda")
    with torch.no_grad():
        out = clip_model(**tokens)
    # last_hidden_state (one vector per token) is what SD feeds into cross-attention;
    # pooler_output (the end-of-text token's vector) summarizes the whole prompt
    return out.pooler_output[0]

# ★ Compute similarity between different prompts ★
prompts = [
    "A beautiful sunset over the ocean",
    "A gorgeous sunrise by the sea",
    "A cat sitting on a windowsill",
    "Neural network architecture diagram",
]

# CLIP's text encoder uses causal attention, so the first (start-of-text) token's vector is
# identical for every prompt; compare the pooled end-of-text vectors instead
embs = torch.stack([get_text_embedding(p) for p in prompts])
embs = F.normalize(embs, dim=-1)
sim_matrix = (embs @ embs.T).cpu().numpy()

fig, ax = plt.subplots(figsize=(7, 6))
im = ax.imshow(sim_matrix, cmap='RdYlBu_r', vmin=sim_matrix.min(), vmax=1.0)
ax.set_xticks(range(len(prompts)))
ax.set_yticks(range(len(prompts)))
short_labels = [p[:25] + "..." if len(p) > 25 else p for p in prompts]
ax.set_xticklabels(short_labels, rotation=45, ha='right', fontsize=9)
ax.set_yticklabels(short_labels, fontsize=9)
for i in range(len(prompts)):
    for j in range(len(prompts)):
        ax.text(j, i, f"{sim_matrix[i, j]:.2f}", ha='center', va='center', fontsize=11)
plt.colorbar(im)
plt.title("CLIP Text Embedding Cosine Similarity", fontsize=13)
plt.tight_layout()
plt.show()

# ★ Observations ★
# sunset/sunrise have high similarity → CLIP captures semantic proximity
# cat vs neural network have low similarity → large semantic distance
# This helps explain why semantically similar prompts tend to produce similar images

8. Acceleration and Optimization Techniques for Diffusion Models

The biggest practical bottleneck of diffusion models is inference speed — the original DDPM requires 1000 iterative sampling steps, taking several minutes to generate a single image even on GPU. The following are multi-layered acceleration approaches spanning mathematics to engineering:

| Acceleration Method | Principle | Effect | Representative Work |
|---|---|---|---|
| Faster Samplers | Non-Markovian sampling, ODE solvers | 1000 steps → 20–50 steps | DDIM[5], DPM-Solver |
| Step Distillation | Distill multi-step denoising into a few steps (consistency or adversarial distillation) | 50 steps → 1–4 steps | LCM[16] (consistency), SDXL-Turbo (adversarial) |
| Latent Space Diffusion | Operate in compressed latent space | ~48x fewer dimensions per step at 512x512 | LDM / Stable Diffusion[8] |
| Model Compression | Pruning + Quantization + Distillation | 30–70% model size reduction | BK-SDM, SVDQuant |
| Token Merging | Merge redundant visual tokens | Additional 1.5–2x speedup | ToMe for SD |
| Feature Caching | Cache high-level features to skip redundant computation | Additional 2–2.5x speedup | DeepCache |
| Architectural Evolution | Transformer replaces U-Net | Better scaling | DiT[13], FLUX |

These acceleration methods can be stacked. As we detailed in our article on Five-Technique Integration: BK-SDM (pruning + distillation) + LCM-LoRA (consistency distillation) + ToMe (token merging) stacked together can achieve 10x or more end-to-end speedup on a free Colab T4.
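The "Faster Samplers" row in the table can be made concrete with the deterministic DDIM update (η = 0): predict x₀ from the current noise estimate, then jump directly to a much earlier timestep. A NumPy sketch under the linear schedule from Section 2, using 0-based array indices for timesteps and an oracle noise prediction in place of the U-Net (both assumptions for illustration):

```python
import numpy as np

def ddim_step(x_t, eps_pred, abar_t, abar_s):
    """Deterministic DDIM update (eta = 0) from timestep t to an earlier timestep s.

    x0_pred = (x_t - sqrt(1 - abar_t) * eps_pred) / sqrt(abar_t)
    x_s     = sqrt(abar_s) * x0_pred + sqrt(1 - abar_s) * eps_pred
    """
    x0_pred = (x_t - np.sqrt(1.0 - abar_t) * eps_pred) / np.sqrt(abar_t)
    return np.sqrt(abar_s) * x0_pred + np.sqrt(1.0 - abar_s) * eps_pred

betas = np.linspace(1e-4, 0.02, 1000)
alpha_bar = np.cumprod(1.0 - betas)                      # alpha_bar[k] is abar at timestep k + 1
timesteps = np.linspace(999, 0, 50).round().astype(int)  # 50 of the 1000 indices, high -> low

rng = np.random.default_rng(0)
x0 = rng.uniform(-1.0, 1.0, size=8)
eps = rng.standard_normal(8)           # oracle: the exact noise, standing in for the U-Net output
x = np.sqrt(alpha_bar[timesteps[0]]) * x0 + np.sqrt(1.0 - alpha_bar[timesteps[0]]) * eps
for t, s in zip(timesteps[:-1], timesteps[1:]):
    x = ddim_step(x, eps, alpha_bar[t], alpha_bar[s])   # 49 jumps instead of 999 single steps

a0 = alpha_bar[timesteps[-1]]
x0_hat = (x - np.sqrt(1.0 - a0) * eps) / np.sqrt(a0)     # final clean-image estimate
print(len(timesteps), np.allclose(x0_hat, x0))
```

With a perfect noise prediction every jump lands exactly on q(x_s | x₀), which is why DDIM can skip most timesteps without retraining; with a real, imperfect network the skipped steps cost some quality, hence the typical 20–50 steps.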

9. Enterprise Application Scenarios and Strategic Value

The industrialization of diffusion models is moving from "demonstrations" to "creating value":

- E-commerce and Retail: Virtual try-on, product background replacement, and batch marketing asset generation. The controllability of diffusion models allows brands to change backgrounds and styles while maintaining precise product representation

- Gaming and Film: Rapid concept art iteration, scene generation, and character design. Stable Diffusion + ControlNet enables artists to guide generation directly with sketches, dramatically shortening production cycles

- Medical Imaging: Synthetic training data (addressing medical image scarcity), super-resolution enhancement, and cross-modality conversion (CT to MRI). Conditional diffusion models augment datasets while maintaining medical accuracy

- Architecture and Design: Generating architectural renderings, interior design proposals, and industrial product appearances from text descriptions or sketches. The img2img feature allows designers to rapidly explore design spaces

- Materials Science and Drug Design: 3D molecular structure generation and crystal structure prediction. The flexibility of diffusion models in mixed discrete and continuous spaces makes them a new tool for scientific discovery

From a strategic perspective, enterprises should watch three key trends:

- Fine-tuning costs are plummeting: LoRA + quantization enables enterprises to train custom style models with just a few hundred domain images, reducing costs from tens of thousands of dollars to hundreds

- Controllability continues to improve: Technologies like ControlNet, IP-Adapter, and T2I-Adapter make generation results more precise and controllable, evolving from "luck of the draw" to "precise direction"

- Inference efficiency has crossed a critical threshold: LCM + quantization + cache optimization has pushed high-quality image generation from several seconds down to the sub-second range on modern GPUs, opening the door to real-time interactive applications

10. Conclusion and Outlook

Diffusion models have completed the leap from theoretical framework to industry infrastructure in just five years. Their success is no accident — they are built at the intersection of physics (non-equilibrium thermodynamics), statistics (score matching), and deep learning, possessing theoretical completeness and training stability that GANs lack.

Several frontier directions worth watching:

- Scaling video generation: Sora demonstrated the potential of the DiT architecture for temporally consistent video generation. Extending diffusion models from 2D to 3D spatiotemporal domains is currently the most active research direction

- 3D content generation: Generating 3D objects and scenes directly from text (DreamFusion, Zero-1-to-3), extending the success of diffusion models from 2D to 3D

- Unification of discrete diffusion: Unifying continuous and discrete diffusion under a single framework, enabling the same model to simultaneously handle text, images, code, music, and other multimodal data

- Flow Matching: A closely related alternative to diffusion models that directly learns a velocity field transporting noise to data, often along straight, optimal-transport-style paths, and has shown advantages both theoretically and practically

- The limits of inference efficiency: The journey from 1000 steps to 1 step continues — Consistency Models and Rectified Flow are exploring the quality ceiling of "single-step generation"

The core insight of diffusion models — decomposing complex generation problems into a series of simple denoising steps — has not only transformed image generation but is also reshaping our cognitive framework for generative AI. Mastering this framework means mastering the core language of AI innovation for the next decade.

If your team is evaluating enterprise application scenarios for diffusion models, or needs to build a complete pipeline from model selection, fine-tuning, to deployment optimization, we welcome an in-depth technical conversation. The research team at Meta Intelligence can help you translate the technical potential of diffusion models into measurable business value.