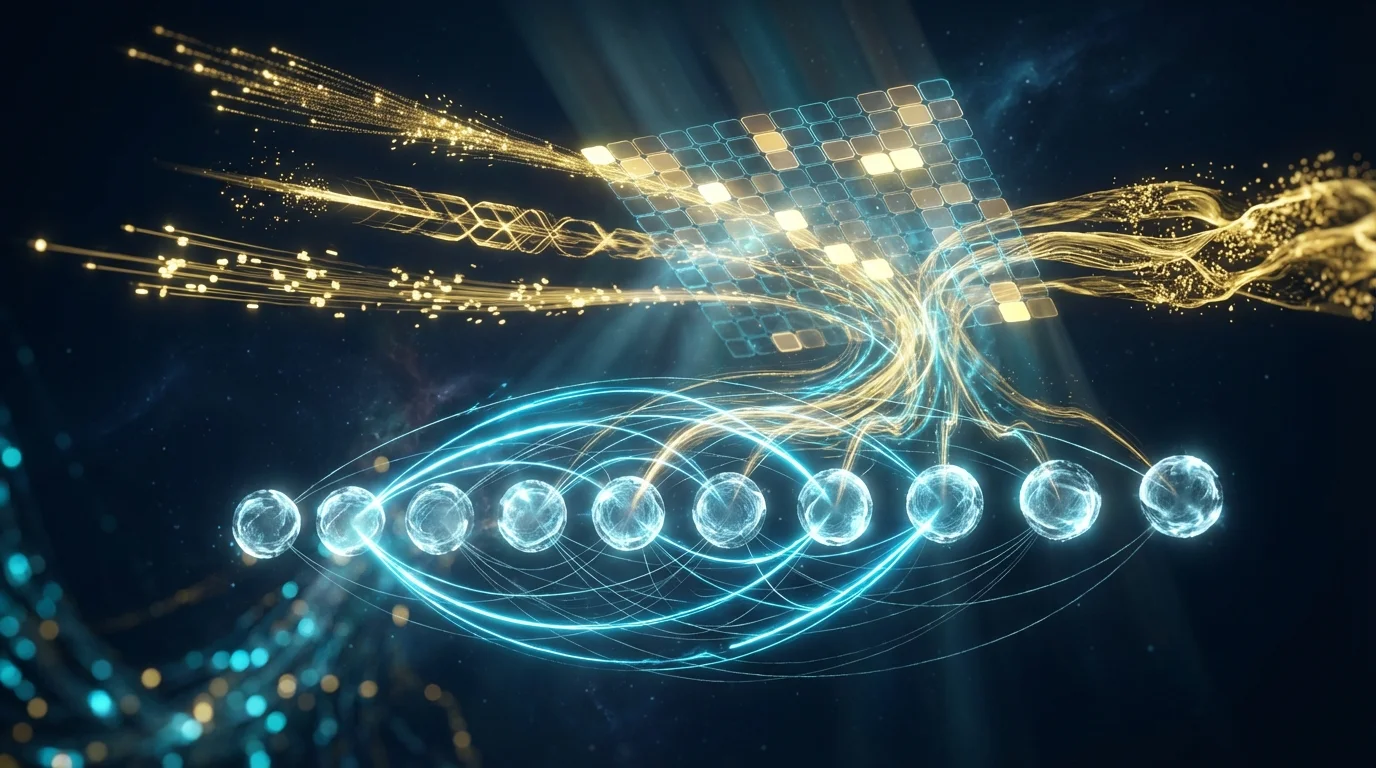

- Self-attention allows every element in a sequence to directly interact with all other elements[1], completely solving the long-range dependency bottleneck of recurrent neural networks, with fully parallelizable computation

- The Transformer architecture[1] has become the unified foundational architecture for NLP (BERT[3], GPT[4]) and computer vision (ViT[6], Swin[7])

- FlashAttention[11], Linformer[9], and other techniques break the O(n²) bottleneck of self-attention to near-linear complexity, enabling million-token context windows

- This article includes two Google Colab labs: Transformer text sentiment classification (self-implemented multi-head attention) and ViT image classification (attention heatmap visualization)

1. From Attention to Self-Attention: A Paradigm Revolution

In 2017, a Google team made a seemingly bold claim in a paper titled "Attention Is All You Need"[1]: completely abandon recurrence and convolution, and build the most powerful sequence model using attention alone. The Transformer architecture born from this paper completely reshaped the entire field of artificial intelligence within just a few years.

Before Transformer, attention mechanisms had already shown promise in Bahdanau et al.'s work[2] — serving as an auxiliary module for RNN, helping the decoder "attend to" the most relevant parts of the encoder output. But Vaswani et al. went further: they let every element in a sequence interact directly with all other elements, without needing RNN's step-by-step propagation. This is the essence of self-attention.

The significance of this transition:

| Property | RNN | Self-Attention |

|---|---|---|

| Long-range dependencies | O(n) steps to connect head and tail | O(1) direct connection between any positions |

| Parallelization | Must compute step by step, no parallelism | All positions computed simultaneously |

| Computational complexity | O(n · d²) sequential | O(n² · d) global |

| Memory bottleneck | Fixed-size hidden state | Dynamic attention weight matrix |

2. Scaled Dot-Product Attention: The Mathematical Core

Self-attention computation can be distilled into three matrix operations: Query, Key, and Value. Each input token is projected into three vectors, which are then used to compute mutual "relevance scores" via dot products.

Attention(Q, K, V) = softmax(Q · K^T / √d_k) · V

Where:

Q = X · W_Q (Query matrix, shape [n, d_k])

K = X · W_K (Key matrix, shape [n, d_k])

V = X · W_V (Value matrix, shape [n, d_v])

d_k = Dimension of Key vectors

√d_k = Scaling factor, prevents large dot products from saturating softmax

Intuitive understanding:

- Q · K^T: Computes the "similarity score" between every pair of tokens — an n×n attention matrix

- / √d_k: Scaling factor. When d_k is large, dot product variance is also large, causing softmax to produce extreme 0/1 distributions (vanishing gradients). Dividing by √d_k stabilizes the variance at 1[1]

- softmax: Converts scores to a probability distribution, representing how much each token "should attend to other tokens"

- · V: Computes attention-weighted sums of Value vectors, producing context-aware new representations

Example: In the sentence "The cat sat on the mat because it was tired," the Query vector for "it" computes dot products with Key vectors of all other tokens. Ideally, "cat"'s Key will produce the highest score with "it"'s Query, enabling the model to correctly resolve "it" as referring to "cat."

3. Multi-Head Attention: Parallel Multi-Perspective Observation

A single set of Q, K, V can only capture one type of relationship. But relationships in language are multifaceted — syntactic dependencies, semantic similarity, coreference, tense consistency... Transformer's solution is Multi-Head Attention:

MultiHead(Q, K, V) = Concat(head_1, ..., head_h) · W_O

Where head_i = Attention(Q · W_Q^i, K · W_K^i, V · W_V^i)

For d_model=512, h=8:

Each head's d_k = d_v = 512 / 8 = 64

8 heads independently perform 64-dim attention

Concatenated and projected back to 512-dim

Voita et al.'s research[12] found that different attention heads indeed learn different "roles": some focus on positional relationships, others track syntactic structure, others handle rare words. Interestingly, Michel et al.[17] found that many heads can be pruned with virtually no performance impact — suggesting that the multi-head mechanism provides beneficial redundancy and regularization.

4. Positional Encoding: Teaching Attention to Understand "Order"

Self-attention is inherently permutation invariant — shuffling the input order shuffles the output correspondingly, but each token's representation remains unchanged. This means "dog bites man" and "man bites dog" look identical to pure self-attention. Therefore, Transformer requires additional positional encoding to inject order information.

The original Transformer uses sinusoidal positional encoding[1]:

PE(pos, 2i) = sin(pos / 10000^(2i/d_model))

PE(pos, 2i+1) = cos(pos / 10000^(2i/d_model))

Where pos is the token position, i is the dimension index

In recent years, positional encoding has undergone significant evolution:

| Method | Core Idea | Advantage | Representative Model |

|---|---|---|---|

| Sinusoidal[1] | Fixed trigonometric functions | No training needed | Original Transformer |

| Learnable | Trainable vector per position | Adaptive | BERT, GPT |

| RoPE[13] | Rotation matrices for relative positions | Length extrapolation, relative awareness | LLaMA, PaLM |

| ALiBi[14] | Linear distance bias on attention scores | Zero parameters, strong extrapolation | BLOOM, MPT |

RoPE[13] has become the standard choice for current mainstream large language models. It encodes positional information as rotations in vector space — the attention score between two tokens depends only on their relative distance and has excellent length extrapolation capabilities.

5. The Complete Transformer Architecture

The full Transformer consists of Encoder and Decoder components, but different applications select different combinations:

| Architecture Type | Structure | Representative Model | Typical Tasks |

|---|---|---|---|

| Encoder-only | Bidirectional self-attention | BERT[3] | Classification, NER, QA |

| Decoder-only | Causal masked self-attention | GPT[4][5] | Text generation, dialogue |

| Encoder-Decoder | Encoder + cross-attention + decoder | T5, BART | Translation, summarization |

Each Transformer layer (block) contains two sub-layers, each with residual connections and layer normalization:

Transformer Block:

1. Multi-Head Self-Attention

→ LayerNorm(x + MultiHead(x, x, x))

2. Feed-Forward Network (FFN)

→ LayerNorm(x + FFN(x))

→ FFN(x) = max(0, x·W_1 + b_1) · W_2 + b_2

Decoder additionally includes:

- Causal Mask: masks future positions for autoregressive generation

- Cross-Attention: Query from decoder, Key/Value from encoder

6. BERT and GPT: Two Diverging Paths

The Transformer architecture gave rise to two major pre-training paradigms, differing in the "visibility range" of self-attention:

BERT (Bidirectional)[3]: Uses the Encoder architecture, where each token can see all other tokens in the sequence (including both preceding and following context). Pre-trained with Masked Language Modeling (MLM) — randomly masking 15% of tokens and having the model predict the masked words. This bidirectional context makes BERT particularly suited for understanding-oriented tasks.

GPT (Autoregressive)[4]: Uses the Decoder architecture, where each token can only see tokens before it (causal masking). Pre-trained with Next Token Prediction. GPT-3[5] scaled parameters to 175 billion, demonstrating remarkable few-shot learning — executing translation, question answering, code generation, and more through prompts alone without fine-tuning.

As scale increases, Kaplan et al.[15] discovered the famous Scaling Laws — stable power-law relationships between model performance (loss) and parameter count, data volume, and compute budget. Even more surprisingly, Wei et al.[16] documented the emergent abilities phenomenon: certain capabilities (such as chain-of-thought reasoning) are completely absent in small models but suddenly appear when models reach specific scale thresholds.

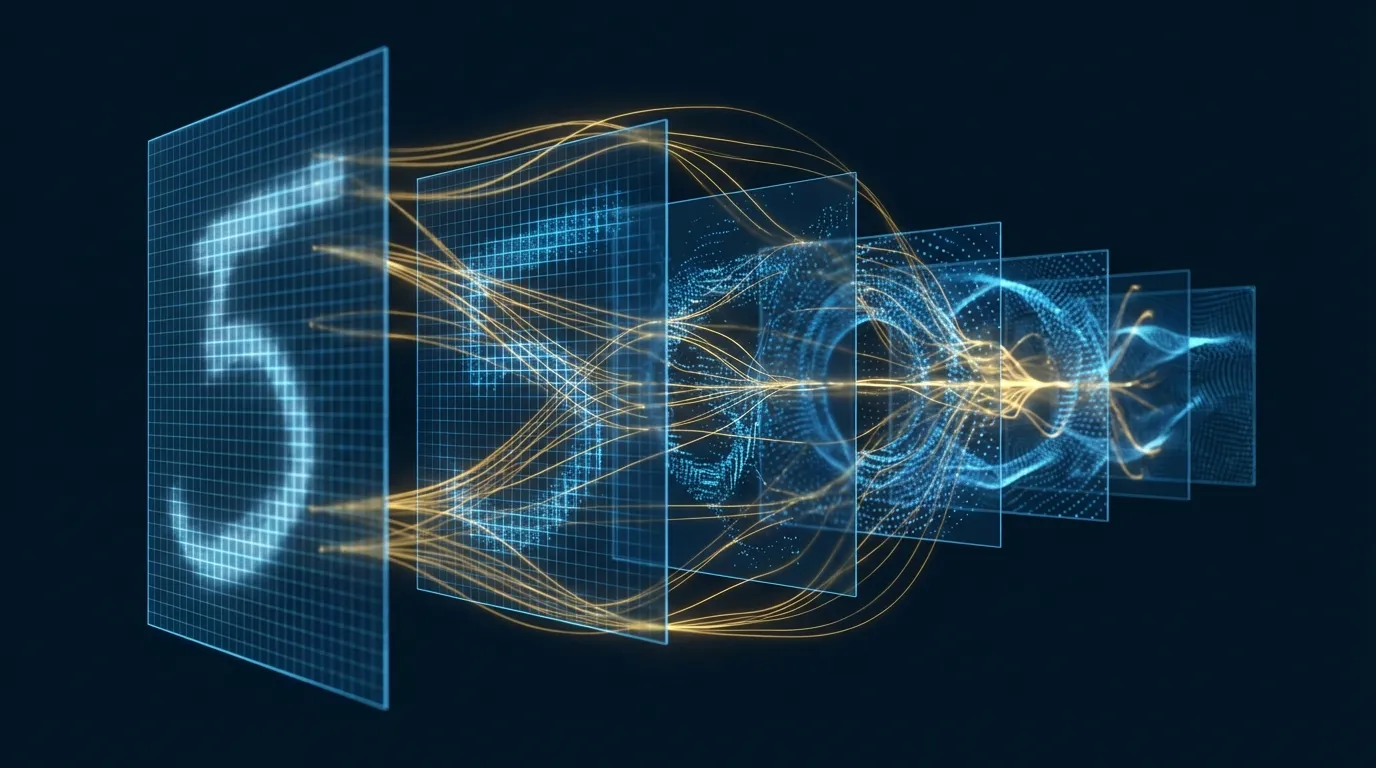

7. Vision Transformer: Self-Attention Conquers Vision

For a long time, computer vision was dominated by convolutional neural networks. In 2021, Dosovitskiy et al.[6] proposed the Vision Transformer (ViT), proving that pure self-attention architectures can match or even surpass the strongest CNNs on large-scale data.

ViT's core idea is remarkably elegant:

ViT Pipeline:

1. Split 224×224 image into 16×16 patches → 196 patches

2. Flatten each patch into a 768-dim vector (16×16×3 = 768)

3. Add learnable positional encoding + [CLS] token

4. Feed into standard Transformer Encoder

5. Use [CLS] token output for classification

"An image is worth 16×16 words" — treating image patches as tokens in a sequence

ViT's success triggered an explosion of vision Transformers. Swin Transformer[7] reduced attention complexity from O(n²) to O(n) with a shifted window strategy, becoming a general-purpose visual backbone. DETR[8] achieved end-to-end object detection with Transformer, eliminating traditional anchor boxes and NMS post-processing.

8. Efficiency Breakthroughs: Breaking Self-Attention's O(n²) Barrier

Self-attention's O(n²) time and memory complexity is its biggest bottleneck. For a sequence of length n, the attention matrix requires n² computation and storage. When n = 100,000, this means 10^10 operations — which is unacceptable from an engineering standpoint.

The academic and industrial communities have proposed multiple breakthrough strategies:

| Method | Core Strategy | Complexity | Exactness |

|---|---|---|---|

| Linformer[9] | Low-rank projection of Key/Value | O(n) | Approximate |

| Performer[10] | Random feature mapping (FAVOR+) | O(n) | Approximate |

| FlashAttention[11] | IO-aware tiling + kernel fusion | O(n²) but 2-4x faster | Exact |

| Sliding Window | Attend only to local windows | O(n · w) | Locally exact |

| Sparse Attention | Sparse attention patterns | O(n√n) | Approximate |

FlashAttention[11]'s contribution is particularly important: it doesn't change the math — the computation result is exactly identical to standard attention — but achieves 2-4x speedup through clever GPU memory management (tiled computation, avoiding HBM ↔ SRAM round trips). It has become a standard component in modern LLM training.

9. Hands-on Lab 1: Building a Transformer Text Classifier from Scratch (Google Colab)

The following experiment implements multi-head self-attention from scratch, builds a mini Transformer for IMDb movie review sentiment classification, and visualizes attention weights.

# ============================================================

# Lab 1: Transformer from Scratch — IMDb Sentiment Classification + Attention Visualization

# Environment: Google Colab (GPU)

# ============================================================

# --- 0. Installation ---

!pip install -q torchtext datasets

import torch

import torch.nn as nn

import torch.nn.functional as F

import math

import numpy as np

import matplotlib.pyplot as plt

from datasets import load_dataset

from torch.utils.data import DataLoader

from collections import Counter

device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')

print(f"Device: {device}")

# --- 1. Data Preparation ---

dataset = load_dataset("imdb")

train_data = dataset["train"].shuffle(seed=42).select(range(10000))

test_data = dataset["test"].shuffle(seed=42).select(range(2000))

# Simple tokenizer

def simple_tokenizer(text):

return text.lower().split()

# Build vocabulary

counter = Counter()

for example in train_data:

counter.update(simple_tokenizer(example["text"]))

vocab = {"<pad>": 0, "<unk>": 1}

for word, count in counter.most_common(20000):

if count >= 3:

vocab[word] = len(vocab)

vocab_size = len(vocab)

print(f"Vocab size: {vocab_size}")

MAX_LEN = 256

def encode(text):

tokens = simple_tokenizer(text)[:MAX_LEN]

ids = [vocab.get(t, 1) for t in tokens]

return ids

def collate_fn(batch):

texts = [encode(ex["text"]) for ex in batch]

labels = torch.tensor([ex["label"] for ex in batch])

max_len = min(max(len(t) for t in texts), MAX_LEN)

padded = torch.zeros(len(texts), max_len, dtype=torch.long)

for i, t in enumerate(texts):

padded[i, :len(t)] = torch.tensor(t)

return padded, labels

train_loader = DataLoader(train_data, batch_size=32, shuffle=True, collate_fn=collate_fn)

test_loader = DataLoader(test_data, batch_size=64, shuffle=False, collate_fn=collate_fn)

# --- 2. Self-Implemented Multi-Head Self-Attention ---

class MultiHeadSelfAttention(nn.Module):

def __init__(self, d_model, n_heads):

super().__init__()

assert d_model % n_heads == 0

self.d_model = d_model

self.n_heads = n_heads

self.d_k = d_model // n_heads

self.W_Q = nn.Linear(d_model, d_model)

self.W_K = nn.Linear(d_model, d_model)

self.W_V = nn.Linear(d_model, d_model)

self.W_O = nn.Linear(d_model, d_model)

self.attn_weights = None # Store attention weights for visualization

def forward(self, x, mask=None):

B, N, _ = x.shape

# Project + split heads: [B, N, d_model] → [B, h, N, d_k]

Q = self.W_Q(x).view(B, N, self.n_heads, self.d_k).transpose(1, 2)

K = self.W_K(x).view(B, N, self.n_heads, self.d_k).transpose(1, 2)

V = self.W_V(x).view(B, N, self.n_heads, self.d_k).transpose(1, 2)

# Scaled Dot-Product Attention

scores = torch.matmul(Q, K.transpose(-2, -1)) / math.sqrt(self.d_k)

if mask is not None:

scores = scores.masked_fill(mask == 0, float('-inf'))

attn = F.softmax(scores, dim=-1)

self.attn_weights = attn.detach()

out = torch.matmul(attn, V) # [B, h, N, d_k]

out = out.transpose(1, 2).contiguous().view(B, N, self.d_model)

return self.W_O(out)

# --- 3. Transformer Encoder Block ---

class TransformerBlock(nn.Module):

def __init__(self, d_model, n_heads, d_ff, dropout=0.1):

super().__init__()

self.attn = MultiHeadSelfAttention(d_model, n_heads)

self.norm1 = nn.LayerNorm(d_model)

self.norm2 = nn.LayerNorm(d_model)

self.ffn = nn.Sequential(

nn.Linear(d_model, d_ff),

nn.GELU(),

nn.Dropout(dropout),

nn.Linear(d_ff, d_model),

nn.Dropout(dropout),

)

self.dropout = nn.Dropout(dropout)

def forward(self, x, mask=None):

x = self.norm1(x + self.dropout(self.attn(x, mask)))

x = self.norm2(x + self.ffn(x))

return x

# --- 4. Complete Classification Model ---

class TransformerClassifier(nn.Module):

def __init__(self, vocab_size, d_model=128, n_heads=4,

n_layers=3, d_ff=256, max_len=256, n_classes=2, dropout=0.1):

super().__init__()

self.embedding = nn.Embedding(vocab_size, d_model, padding_idx=0)

self.pos_encoding = nn.Embedding(max_len, d_model)

self.blocks = nn.ModuleList([

TransformerBlock(d_model, n_heads, d_ff, dropout)

for _ in range(n_layers)

])

self.classifier = nn.Linear(d_model, n_classes)

self.dropout = nn.Dropout(dropout)

def forward(self, x):

B, N = x.shape

positions = torch.arange(N, device=x.device).unsqueeze(0).expand(B, N)

mask = (x != 0).unsqueeze(1).unsqueeze(2) # [B, 1, 1, N]

x = self.dropout(self.embedding(x) + self.pos_encoding(positions))

for block in self.blocks:

x = block(x, mask)

# Global average pooling (ignoring padding)

mask_float = (x != 0).any(dim=-1, keepdim=True).float()

x = (x * mask_float).sum(dim=1) / mask_float.sum(dim=1).clamp(min=1)

return self.classifier(x)

# --- 5. Training ---

model = TransformerClassifier(vocab_size).to(device)

optimizer = torch.optim.AdamW(model.parameters(), lr=3e-4, weight_decay=0.01)

criterion = nn.CrossEntropyLoss()

print(f"Parameters: {sum(p.numel() for p in model.parameters()):,}")

for epoch in range(6):

model.train()

total_loss, correct, total = 0, 0, 0

for xb, yb in train_loader:

xb, yb = xb.to(device), yb.to(device)

logits = model(xb)

loss = criterion(logits, yb)

optimizer.zero_grad()

loss.backward()

nn.utils.clip_grad_norm_(model.parameters(), 1.0)

optimizer.step()

total_loss += loss.item() * xb.size(0)

correct += (logits.argmax(1) == yb).sum().item()

total += xb.size(0)

print(f"Epoch {epoch+1}: loss={total_loss/total:.4f}, acc={correct/total:.4f}")

# --- 6. Testing ---

model.eval()

correct, total = 0, 0

with torch.no_grad():

for xb, yb in test_loader:

xb, yb = xb.to(device), yb.to(device)

logits = model(xb)

correct += (logits.argmax(1) == yb).sum().item()

total += xb.size(0)

print(f"\nTest Accuracy: {correct/total:.4f}")

# --- 7. Attention Visualization ---

def visualize_attention(text, model):

model.eval()

tokens = simple_tokenizer(text)[:50]

ids = torch.tensor([[vocab.get(t, 1) for t in tokens]]).to(device)

with torch.no_grad():

_ = model(ids)

# Get last layer attention weights

attn = model.blocks[-1].attn.attn_weights[0] # [h, N, N]

fig, axes = plt.subplots(1, 4, figsize=(20, 5))

for i in range(4):

ax = axes[i]

im = ax.imshow(attn[i, :len(tokens), :len(tokens)].cpu(),

cmap='Blues', aspect='auto')

ax.set_xticks(range(len(tokens)))

ax.set_yticks(range(len(tokens)))

ax.set_xticklabels(tokens, rotation=90, fontsize=7)

ax.set_yticklabels(tokens, fontsize=7)

ax.set_title(f'Head {i+1}')

plt.colorbar(im, ax=ax, fraction=0.046)

plt.suptitle('Multi-Head Self-Attention Weights (Last Layer)', fontsize=14)

plt.tight_layout()

plt.show()

# Visualization examples

visualize_attention("this movie was absolutely wonderful and the acting was superb", model)

visualize_attention("terrible film with awful dialogue and boring plot", model)

print("Lab 1 Complete!")

10. Hands-on Lab 2: Vision Transformer Image Classification + Attention Heatmaps (Google Colab)

The following experiment uses a pre-trained ViT model for image classification and extracts self-attention weights to generate attention heatmaps, intuitively showing what the model "sees."

# ============================================================

# Lab 2: Vision Transformer — Image Classification + Attention Heatmap Visualization

# Environment: Google Colab (GPU or CPU)

# ============================================================

# --- 0. Installation ---

!pip install -q transformers timm pillow matplotlib

import torch

import torch.nn.functional as F

import numpy as np

import matplotlib.pyplot as plt

from PIL import Image

from torchvision import transforms

from transformers import ViTForImageClassification, ViTFeatureExtractor

import requests

from io import BytesIO

import warnings

warnings.filterwarnings('ignore')

device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')

print(f"Device: {device}")

# --- 1. Load Pre-trained ViT ---

model_name = "google/vit-base-patch16-224"

model = ViTForImageClassification.from_pretrained(

model_name, output_attentions=True

).to(device).eval()

feature_extractor = ViTFeatureExtractor.from_pretrained(model_name)

print(f"Model: {model_name}")

print(f"Parameters: {sum(p.numel() for p in model.parameters()):,}")

print(f"Patch size: 16x16, Image size: 224x224 → 196 patches + 1 [CLS]")

# --- 2. Image Loading and Preprocessing ---

def load_image(url):

response = requests.get(url)

img = Image.open(BytesIO(response.content)).convert("RGB")

return img

# Classic test images

urls = {

"Golden Retriever": "https://upload.wikimedia.org/wikipedia/commons/thumb/b/bd/Golden_Retriever_Dukedestination.jpg/800px-Golden_Retriever_Dukedestination.jpg",

"Tabby Cat": "https://upload.wikimedia.org/wikipedia/commons/thumb/4/4d/Cat_November_2010-1a.jpg/800px-Cat_November_2010-1a.jpg",

"Bald Eagle": "https://upload.wikimedia.org/wikipedia/commons/thumb/1/1a/About_to_Launch_%2826075320352%29.jpg/800px-About_to_Launch_%2826075320352%29.jpg",

}

images = {}

for name, url in urls.items():

try:

images[name] = load_image(url)

print(f"Loaded: {name} ({images[name].size})")

except Exception as e:

print(f"Failed to load {name}: {e}")

# --- 3. Inference + Attention Extraction ---

def predict_with_attention(img, model, feature_extractor):

inputs = feature_extractor(images=img, return_tensors="pt").to(device)

with torch.no_grad():

outputs = model(**inputs)

logits = outputs.logits

probs = F.softmax(logits, dim=-1)

top5 = torch.topk(probs, 5)

# Attention weights: list of [B, heads, N, N] for each layer

attentions = outputs.attentions # 12 layers

return top5, attentions

# --- 4. Attention Heatmap Generation ---

def get_attention_map(attentions, layer=-1):

"""Extract [CLS] token attention from specified layer → 14x14 heatmap"""

attn = attentions[layer][0] # [heads, N, N]

attn_avg = attn.mean(dim=0) # [N, N]

# [CLS] token (position 0) attention to all patches

cls_attn = attn_avg[0, 1:] # Remove [CLS] self, [196]

cls_attn = cls_attn.reshape(14, 14).cpu().numpy()

# Normalize

cls_attn = (cls_attn - cls_attn.min()) / (cls_attn.max() - cls_attn.min() + 1e-8)

return cls_attn

def get_rollout_attention(attentions):

"""Attention Rollout: accumulate attention across all layers"""

result = torch.eye(attentions[0].size(-1)).to(device)

for attn_layer in attentions:

attn = attn_layer[0].mean(dim=0) # [N, N] average all heads

attn = attn + torch.eye(attn.size(0)).to(device) # Residual connection

attn = attn / attn.sum(dim=-1, keepdim=True) # Normalize

result = torch.matmul(attn, result)

cls_attn = result[0, 1:].reshape(14, 14).cpu().numpy()

cls_attn = (cls_attn - cls_attn.min()) / (cls_attn.max() - cls_attn.min() + 1e-8)

return cls_attn

# --- 5. Visualization ---

def visualize_vit(name, img, model, feature_extractor):

top5, attentions = predict_with_attention(img, model, feature_extractor)

print(f"\n{'='*50}")

print(f"Image: {name}")

print(f"{'='*50}")

for i in range(5):

idx = top5.indices[0][i].item()

prob = top5.values[0][i].item()

label = model.config.id2label[idx]

print(f" {i+1}. {label}: {prob:.4f}")

attn_first = get_attention_map(attentions, layer=0)

attn_mid = get_attention_map(attentions, layer=5)

attn_last = get_attention_map(attentions, layer=-1)

attn_rollout = get_rollout_attention(attentions)

img_np = np.array(img.resize((224, 224)))

fig, axes = plt.subplots(1, 5, figsize=(25, 5))

axes[0].imshow(img_np)

axes[0].set_title(f"Original\n{model.config.id2label[top5.indices[0][0].item()]}", fontsize=12)

axes[0].axis('off')

titles = ['Layer 1 Attention', 'Layer 6 Attention',

'Layer 12 Attention', 'Attention Rollout']

maps = [attn_first, attn_mid, attn_last, attn_rollout]

for i, (title, attn_map) in enumerate(zip(titles, maps)):

ax = axes[i + 1]

ax.imshow(img_np)

attn_resized = np.array(Image.fromarray(

(attn_map * 255).astype(np.uint8)

).resize((224, 224), Image.BICUBIC)) / 255.0

ax.imshow(attn_resized, alpha=0.6, cmap='jet')

ax.set_title(title, fontsize=12)

ax.axis('off')

plt.suptitle(f'ViT Attention Maps — {name}', fontsize=16, fontweight='bold')

plt.tight_layout()

plt.show()

# --- 6. Run Visualization ---

for name, img in images.items():

visualize_vit(name, img, model, feature_extractor)

# --- 7. Multi-Head Attention Comparison ---

def visualize_heads(name, img, model, feature_extractor, layer=-1):

"""Visualize attention patterns of different heads in a specified layer"""

inputs = feature_extractor(images=img, return_tensors="pt").to(device)

with torch.no_grad():

outputs = model(**inputs, output_attentions=True)

attn = outputs.attentions[layer][0] # [12, 197, 197]

img_np = np.array(img.resize((224, 224)))

fig, axes = plt.subplots(2, 6, figsize=(24, 8))

for head_idx in range(12):

ax = axes[head_idx // 6][head_idx % 6]

head_attn = attn[head_idx, 0, 1:].reshape(14, 14).cpu().numpy()

head_attn = (head_attn - head_attn.min()) / (head_attn.max() - head_attn.min() + 1e-8)

attn_resized = np.array(Image.fromarray(

(head_attn * 255).astype(np.uint8)

).resize((224, 224), Image.BICUBIC)) / 255.0

ax.imshow(img_np)

ax.imshow(attn_resized, alpha=0.6, cmap='inferno')

ax.set_title(f'Head {head_idx+1}', fontsize=10)

ax.axis('off')

plt.suptitle(f'12 Attention Heads (Last Layer) — {name}', fontsize=16, fontweight='bold')

plt.tight_layout()

plt.show()

# Visualize 12 heads for the first image

first_name = list(images.keys())[0]

visualize_heads(first_name, images[first_name], model, feature_extractor)

print("\nLab 2 Complete!")

11. Decision Framework: How Enterprises Choose Attention Architectures

| Scenario | Recommended Architecture | Rationale |

|---|---|---|

| Text classification / NER | BERT-base fine-tuning | Bidirectional context, optimal for classification tasks |

| Text generation / dialogue | GPT series / LLaMA | Autoregressive generation, causal attention |

| Image classification | ViT / Swin Transformer | Surpasses CNN on large-scale data |

| Object detection | DETR / Swin + FPN | End-to-end, no anchor boxes needed |

| Ultra-long sequences | FlashAttention + RoPE | 128K+ token context |

| Edge devices | Distilled ViT / MobileViT | Lightweight self-attention |

| Multimodal | Cross-attention Transformer | Unified image-text representation |

12. Conclusion and Outlook

Self-attention is one of the most important breakthroughs in artificial intelligence over the past decade. From 2017's "Attention Is All You Need"[1] to today's GPT-4, Claude, and Gemini, self-attention has become the computational core of virtually all frontier AI systems.

Reviewing this revolution:

- Unification of NLP: From BERT[3] to GPT[5], Transformer unified understanding and generation

- Vision breakthrough: ViT[6] proved self-attention works not only for sequences but also for two-dimensional structures

- Efficiency leap: FlashAttention[11] and sparse attention made million-token context windows a reality

- Scale magic: Scaling Laws[15] and emergent abilities[16] revealed the principle that "bigger = qualitative change"

Looking ahead, the evolutionary directions of self-attention include: state space models (SSM / Mamba) as sub-linear complexity alternatives, mixture of experts (MoE) for sparsely activated ultra-large models, and multimodal unified architectures that fuse text, images, audio, and video in the same attention space. Regardless of how the specific form evolves, the core idea of "letting every element dynamically attend to all relevant information" will continue to lead the next decade of AI.