- The core design of CNNs was inspired by Hubel and Wiesel's research on the cat visual cortex[1] -- the concepts of local receptive fields and hierarchical feature extraction have endured since 1962

- From LeNet-5[2] to ResNet[6] to EfficientNet[7], the evolution of CNN architectures reflects a central question: how to find the optimal balance between depth, width, and efficiency

- CNNs are not limited to images -- TextCNN[8] demonstrated that 1D convolutions are both fast and effective for text classification, and remain a cost-effective choice for industrial NLP classification to this day

- This article includes two Google Colab hands-on labs: MNIST handwritten digit recognition with feature map visualization, and TextCNN for text sentiment classification

1. From Biological Vision to Artificial Vision: The Origins of CNNs

In 1962, neuroscientists David Hubel and Torsten Wiesel conducted a history-changing experiment on an anesthetized cat[1]. They discovered two types of cells in the cat's visual cortex: simple cells (sensitive to edges of specific orientations) and complex cells (exhibiting a degree of position invariance). More importantly, visual information is processed hierarchically -- lower layers detect edges, middle layers combine them into shapes, and higher layers recognize objects.

This discovery directly inspired the three core design principles of convolutional neural networks:

- Local Receptive Fields: Each neuron observes only a small region of the input, corresponding to the CNN's convolutional kernel

- Weight Sharing: The same feature detector slides across the entire image, corresponding to the CNN's convolution operation

- Spatial Subsampling: Resolution is progressively reduced layer by layer, corresponding to the CNN's pooling operation

In 1998, Yann LeCun et al.[2] engineered these principles into LeNet-5 -- a convolutional network with only 5 layers that achieved about 99.2% accuracy on handwritten digit recognition (MNIST). Networks from this line of work were deployed commercially in bank check-reading systems, reportedly processing on the order of 10% of the handwritten checks in the United States at the time. LeNet-5 demonstrated a profound insight: a good inductive bias can matter more than a larger parameter count.

However, the true explosion of CNNs would not come until 2012. Krizhevsky, Sutskever, and Hinton's AlexNet[3] won the ImageNet Large Scale Visual Recognition Challenge by a dominant margin -- the winning entry reached a Top-5 error rate of 15.3% (16.4% for the ensemble trained without extra pretraining data), compared to 26.2% for the second-place traditional method. This gap of roughly 10 percentage points shocked the entire computer vision community and ushered in the era of deep learning[16].

2. Core Mechanisms: Convolution, Pooling, and Feature Hierarchies

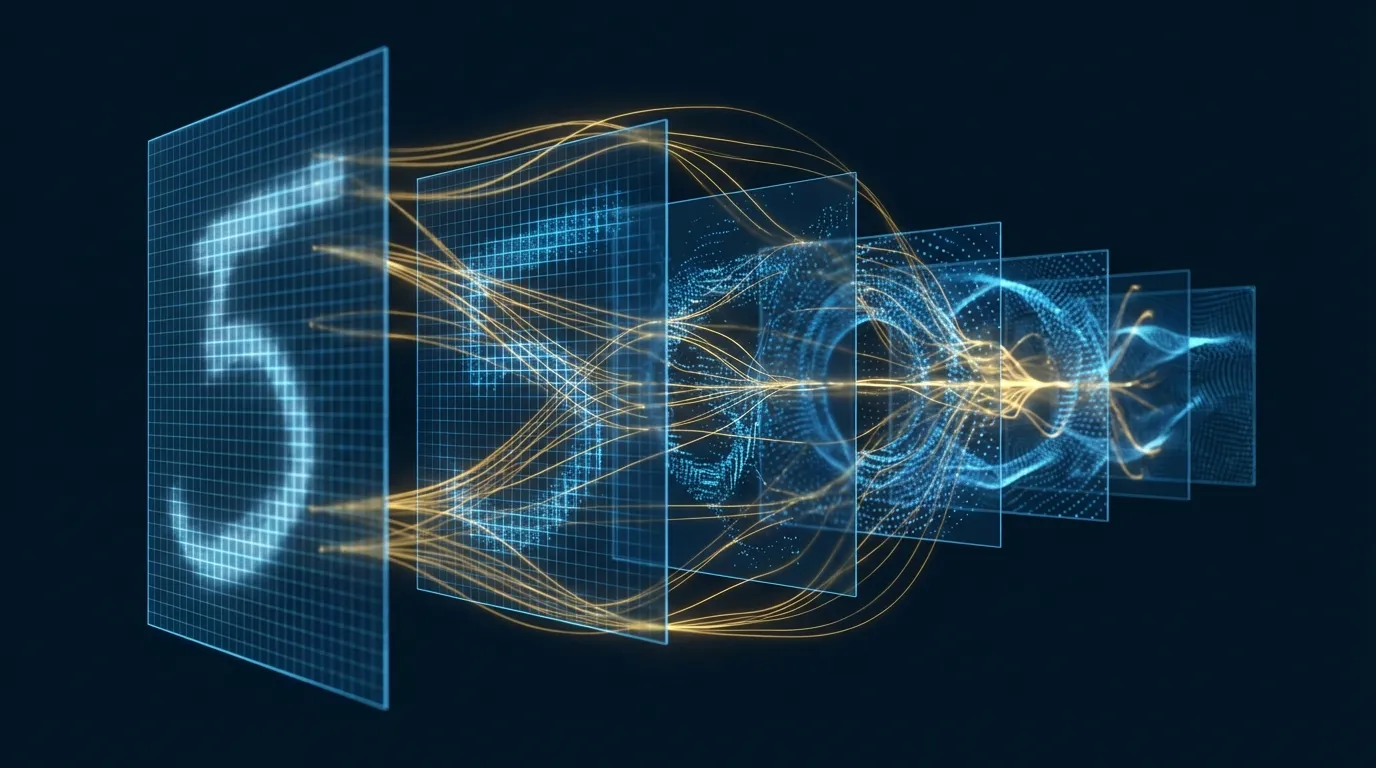

2.1 The Convolution Operation: Local Feature Detection

At its essence, convolution is a sliding-window pattern matching operation. A 3x3 convolutional kernel (also called a filter) slides across the input image, computing the dot product between the kernel and the corresponding region at each position, producing an output value. Once the kernel has traversed the entire image, the result is a feature map.
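The sliding-window description above can be sketched in a few lines of NumPy. This is a minimal illustration rather than an optimized implementation: `conv2d_valid` is a hypothetical helper name, and the sketch assumes a single-channel input, stride 1, and no padding. Like deep learning frameworks, it computes cross-correlation (the kernel is not flipped). The toy image and the hand-written edge kernel are illustrative choices, not learned filters.

```python
import numpy as np

def conv2d_valid(image, kernel):
    """Slide `kernel` over `image` (stride 1, no padding) and return the feature map."""
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    feature_map = np.zeros((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            window = image[i:i + kh, j:j + kw]
            # Dot product between the kernel and the patch under it
            feature_map[i, j] = np.sum(window * kernel)
    return feature_map

# Toy 5x5 image: dark left columns, bright right columns (a vertical edge)
image = np.array([[0, 0, 1, 1, 1]] * 5, dtype=float)
# Hand-written vertical-edge kernel (illustrative, not a learned filter)
kernel = np.array([[-1, 0, 1]] * 3, dtype=float)

feature_map = conv2d_valid(image, kernel)
print(feature_map.shape)  # (3, 3)
print(feature_map)        # every row is [3, 3, 0]
```

The response is largest where the window straddles the edge and drops to zero once the window sits entirely inside the uniform bright region.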

A convolutional layer typically contains multiple kernels -- each one learns to detect a different pattern. For example, the 6 kernels in the first layer might learn to detect horizontal edges, vertical edges, 45-degree diagonals, 135-degree diagonals, corners, and curves, respectively.

Why is convolution more effective than fully connected layers? Goodfellow et al.'s seminal textbook[10] identifies three key advantages:

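- Sparse Interactions: Each output depends only on a small k x k neighborhood rather than on all n input pixels, so a layer with n outputs needs on the order of n * k^2 connections instead of n^2; combined with weight sharing, the number of learned weights per kernel drops to k^2 (where k is the kernel size)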

- Weight Sharing: The same kernel is reused across all positions -- regardless of image size, the number of kernel parameters remains constant

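- Translation Equivariance: Whether a cat appears on the left or right side of the image, the same kernel detects it, and the response simply shifts with the cat in the feature map -- approximate translation invariance then comes from pooling and the later layers, not from convolution alone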
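To make the parameter savings concrete, here is a back-of-the-envelope comparison between a fully connected layer and a convolutional layer on a 28x28 grayscale input. The layer sizes (784 outputs for the dense layer, six 5x5 kernels for the convolution) are illustrative assumptions chosen for this sketch, not figures from the cited textbook.

```python
def dense_params(n_inputs, n_outputs):
    """Weights plus biases of a fully connected layer."""
    return n_inputs * n_outputs + n_outputs

def conv_params(in_channels, out_channels, kernel_size):
    """Weights plus biases of a 2D convolutional layer with square kernels."""
    return out_channels * in_channels * kernel_size * kernel_size + out_channels

n_pixels = 28 * 28
fc = dense_params(n_pixels, n_pixels)  # every pixel connected to every output unit
conv = conv_params(1, 6, 5)            # six shared 5x5 kernels
print(fc)    # 615440
print(conv)  # 156
```

The convolutional count does not depend on the image size at all, which is the practical consequence of weight sharing.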

2.2 The Pooling Operation: Spatial Compression and Invariance

Pooling performs spatial downsampling on feature maps -- the most common variant, 2x2 max pooling with stride 2, keeps the maximum of each non-overlapping 2x2 window (4 values), halving the height and width of the feature map.

The significance of pooling extends beyond data compression -- it introduces local translation invariance. Regardless of the exact position within a 2x2 region where a feature is detected, the pooled output remains the same. This gives CNNs robustness to small shifts and deformations.
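A small NumPy sketch of 2x2 max pooling shows this local invariance directly. The helper name `max_pool_2x2` is hypothetical, and the sketch assumes a single-channel feature map with even height and width.

```python
import numpy as np

def max_pool_2x2(feature_map):
    """2x2 max pooling with stride 2; assumes even height and width."""
    h, w = feature_map.shape
    # Group the map into non-overlapping 2x2 blocks, then take each block's maximum
    blocks = feature_map.reshape(h // 2, 2, w // 2, 2)
    return blocks.max(axis=(1, 3))

a = np.zeros((4, 4))
a[0, 0] = 1.0   # feature detected in the top-left corner of a 2x2 window
b = np.zeros((4, 4))
b[1, 1] = 1.0   # same feature shifted by one pixel, still inside that window

print(max_pool_2x2(a))                                     # [[1. 0.] [0. 0.]]
print(np.array_equal(max_pool_2x2(a), max_pool_2x2(b)))    # True
```

The invariance is only local: a shift that crosses a window boundary, for example moving the feature to position (2, 2), changes the pooled output.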

2.3 Hierarchical Features: From Edges to Objects

The seminal visualization study by Zeiler and Fergus[9] revealed that the features learned by each CNN layer form a clear hierarchical structure:

- Layer 1: Edges and color gradients -- the most fundamental visual elements

- Layer 2: Corners, textures, and simple shapes -- combinations of edges

- Layer 3: Object parts -- eyes, wheels, windows

- Layers 4-5: Complete objects and scenes -- faces, cars, buildings

This hierarchical structure aligns remarkably with the layered visual processing mechanism discovered by Hubel and Wiesel -- CNNs have, in a sense, "reinvented" the information processing strategy of biological vision.

3. Architectural Evolution: From LeNet to ConvNeXt

| Year | Architecture | Depth (layers) | Key Innovation | ImageNet Top-5 Error |
|---|---|---|---|---|
| 1998 | LeNet-5[2] | 5 | Basic convolution + pooling paradigm | n/a (0.8% error on MNIST) |
| 2012 | AlexNet[3] | 8 | ReLU, Dropout, GPU training | 16.4% (15.3% for the winning entry) |
| 2014 | VGGNet[4] | 16-19 | Stacking small 3x3 kernels uniformly | 7.3% |
| 2014 | GoogLeNet[5] | 22 | Inception multi-scale parallel convolutions | 6.7% |
| 2015 | ResNet[6] | 152 | Residual connections (skip connections) | 3.6% |
| 2017 | MobileNet[12] | 28 | Depthwise separable convolutions | n/a (designed for efficient deployment) |
| 2019 | EfficientNet[7] | Auto-scaled | Compound scaling (depth x width x resolution) | 2.9% (B7) |
| 2022 | ConvNeXt[15] | Variable | Modernizing CNN with Transformer design principles | On par with ViT |

Several key turning points deserve deeper examination:

VGGNet's Insight[4]: Two stacked 3x3 kernels have the same effective receptive field as a single 5x5 kernel, but with fewer weights per input-output channel pair (2 x 9 = 18 vs. 25) and an additional nonlinear activation in between. The conclusion: a deeper stack of small kernels is preferable to a shallower stack of large ones.
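The arithmetic behind this comparison is easy to check. The sketch below assumes stride-1 convolutions and counts weights for a single input-output channel pair, ignoring biases; both function names are hypothetical.

```python
def stacked_receptive_field(kernel_size, num_layers):
    """Receptive field of `num_layers` stacked stride-1 convolutions."""
    return 1 + num_layers * (kernel_size - 1)

def weights_per_channel_pair(kernel_size, num_layers):
    """Weights for one input/output channel pair, biases ignored."""
    return num_layers * kernel_size * kernel_size

print(stacked_receptive_field(3, 2), weights_per_channel_pair(3, 2))  # 5 18
print(stacked_receptive_field(5, 1), weights_per_channel_pair(5, 1))  # 5 25
print(stacked_receptive_field(3, 3), weights_per_channel_pair(3, 3))  # 7 27
print(stacked_receptive_field(7, 1), weights_per_channel_pair(7, 1))  # 7 49
```

The saving grows with the receptive field: three 3x3 layers cover a 7x7 region with 27 weights instead of 49.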

ResNet's Breakthrough[6]: As plain (non-residual) networks grew well beyond 20 layers, training error actually increased -- not due to overfitting, but due to an optimization difficulty known as the degradation problem. ResNet's residual connections (F(x) + x) addressed this: each block learns a "residual" on top of an identity shortcut rather than a direct mapping, so signals and gradients can flow through as many as 152 layers. This is one of the most important architectural innovations in deep learning.
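A minimal NumPy sketch of the F(x) + x idea follows. For brevity it assumes fully connected layers instead of convolutions and omits biases and normalization, so it illustrates the skip connection only, not the actual ResNet block; `residual_block` is a hypothetical name.

```python
import numpy as np

def residual_block(x, w1, w2):
    """y = F(x) + x, where F is two linear maps with a ReLU in between."""
    hidden = np.maximum(0.0, x @ w1)  # first layer + ReLU
    fx = hidden @ w2                  # second layer: the residual F(x)
    return fx + x                     # skip connection adds the input back

rng = np.random.default_rng(0)
x = rng.normal(size=(4, 8))           # batch of 4 vectors with 8 features

# With zero weights the residual branch outputs 0, so the block is an identity map
w_zero = np.zeros((8, 8))
y = residual_block(x, w_zero, w_zero)
print(np.allclose(y, x))  # True

# With small weights the block is a small perturbation of the identity
w1 = rng.normal(scale=0.01, size=(8, 8))
w2 = rng.normal(scale=0.01, size=(8, 8))
y_small = residual_block(x, w1, w2)
print(np.abs(y_small - x).max())
```

Because a block can fall back to the identity by driving its residual branch toward zero, adding more blocks should not make the network harder to fit than its shallower counterpart, which is the intuition behind the fix for degradation.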

1x1 Convolutions[14]: Seemingly paradoxical -- a 1x1 kernel has no spatial receptive field. But it performs linear combinations along the channel dimension, enabling dimensionality reduction or expansion. Both GoogLeNet's Inception modules and ResNet's bottleneck structures rely on 1x1 convolutions to control computational cost.
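The channel-mixing view can be checked with a short NumPy sketch. The shapes (64 input channels reduced to 16 on a 7x7 map) are illustrative assumptions, and the weights are random stand-ins for learned 1x1 kernels.

```python
import numpy as np

rng = np.random.default_rng(0)
features = rng.normal(size=(64, 7, 7))  # [channels, height, width]
weights = rng.normal(size=(16, 64))     # 1x1 kernels: [out_channels, in_channels]

# A 1x1 convolution mixes channels independently at every pixel
reduced = np.einsum('oc,chw->ohw', weights, features)
print(reduced.shape)  # (16, 7, 7)

# Same result as a matrix multiply applied to one pixel's channel vector
pixel = features[:, 3, 4]
print(np.allclose(reduced[:, 3, 4], weights @ pixel))  # True
```

The spatial size is untouched while the channel count drops from 64 to 16, which is how bottleneck designs cut the cost of the larger convolutions that follow.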

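Batch Normalization[13]: Normalizing each layer's activations over the mini-batch (followed by a learned scale and shift) stabilizes the training process and permits the use of larger learning rates. BN proved so effective that it became a standard component of most CNNs that followed, although some recent designs such as ConvNeXt[15] use Layer Normalization instead.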
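As a rough sketch of the training-time computation, the NumPy function below normalizes each channel over the batch and spatial dimensions and then applies a per-channel scale and shift. It is a simplification under stated assumptions: `batch_norm_2d` is a hypothetical name, and the running statistics that real implementations keep for inference are omitted.

```python
import numpy as np

def batch_norm_2d(x, gamma, beta, eps=1e-5):
    """Training-mode batch norm for x of shape [batch, channels, height, width]."""
    mean = x.mean(axis=(0, 2, 3), keepdims=True)  # per-channel mean
    var = x.var(axis=(0, 2, 3), keepdims=True)    # per-channel variance
    x_hat = (x - mean) / np.sqrt(var + eps)
    return gamma.reshape(1, -1, 1, 1) * x_hat + beta.reshape(1, -1, 1, 1)

rng = np.random.default_rng(0)
x = rng.normal(loc=5.0, scale=3.0, size=(32, 6, 12, 12))
out = batch_norm_2d(x, gamma=np.ones(6), beta=np.zeros(6))

print(out.mean(axis=(0, 2, 3)).round(3))  # approximately 0 for every channel
print(out.std(axis=(0, 2, 3)).round(3))   # approximately 1 for every channel
```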

4. Core Applications of CNNs in Computer Vision

CNN applications in computer vision have permeated every subdomain:

- Image Classification: From ImageNet's 1000-class classification to medical imaging lesion detection, CNNs remain the most mature solution

- Object Detection: The R-CNN family, YOLO, and SSD add region proposals and bounding box regression on top of CNN backbones

- Semantic Segmentation: FCN (Fully Convolutional Networks) and U-Net extend classification from the image level to the pixel level

- Image Generation: The discriminators and generators in Generative Adversarial Networks and the U-Net backbones in diffusion models are built on CNNs at their core

- Visual Feature Extraction: Intermediate-layer features from pretrained CNNs (ResNet, EfficientNet) are widely used for transfer learning

5. CNNs for Text AI: TextCNN

In 2014, Yoon Kim[8] published a concise yet powerful paper demonstrating that convolutional neural networks can not only see images but also read text. TextCNN's design is remarkably elegant:

- Input Representation: Each word in a sentence is converted into a word embedding vector (e.g., 300 dimensions), making the entire sentence an n x 300 matrix -- treating text as if it were an image

- 1D Convolution: Kernels of different sizes (e.g., windows of 3, 4, or 5 words) slide across the sentence, capturing n-gram patterns of varying lengths

- Global Max Pooling: The maximum value is taken from each kernel's output -- regardless of sentence length, it is compressed into a fixed-length vector

- Fully Connected Classification: The outputs from all kernels are concatenated and passed through an FC layer with Softmax for classification

The intuition behind TextCNN: kernels of different sizes act like searches for "key phrases" of different lengths in the text. A 3-gram kernel might capture "not very good," while a 4-gram kernel might capture "waste of my time" -- these local patterns, combined, are sufficient to determine sentiment polarity.
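The key property, a fixed-length feature vector regardless of sentence length, can be sketched in NumPy before turning to a full model. Everything here is a stand-in under stated assumptions: the embeddings and kernels are random rather than learned, `conv1d_global_max` is a hypothetical helper, and only two filters per window size are used to keep the output small.

```python
import numpy as np

def conv1d_global_max(sentence, kernels):
    """sentence: [n_words, embed_dim]; kernels: [n_filters, window, embed_dim].
    Returns one max-pooled activation per filter."""
    n_filters, window, _ = kernels.shape
    n_positions = sentence.shape[0] - window + 1
    scores = np.empty((n_filters, n_positions))
    for pos in range(n_positions):
        ngram = sentence[pos:pos + window]             # one window of words
        scores[:, pos] = np.sum(kernels * ngram, axis=(1, 2))
    return np.maximum(scores, 0.0).max(axis=1)         # ReLU, then max over positions

rng = np.random.default_rng(0)
embed_dim = 300
# Random stand-ins for learned kernels: 2 filters each for windows of 3, 4, 5 words
kernel_bank = [rng.normal(size=(2, w, embed_dim)) for w in (3, 4, 5)]

for n_words in (7, 20):
    sentence = rng.normal(size=(n_words, embed_dim))   # random stand-in embeddings
    features = np.concatenate([conv1d_global_max(sentence, k) for k in kernel_bank])
    print(n_words, features.shape)  # (6,) for both sentence lengths
```

Each kernel spans the full embedding dimension, so it only slides along the word axis, which is why the operation is a 1D convolution even though the input looks like a matrix.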

Although Transformers and BERT have surpassed CNNs on many NLP tasks, TextCNN remains a practical choice in industry: inference can be 10-100x faster depending on model size and hardware (there is no quadratic-cost self-attention), deployment requires fewer resources, and accuracy on short-text classification can approach that of BERT.

6. Interactive 3D Visualization: How CNNs Recognize Handwritten Digits

The best way to understand CNNs is to "see" them in action. We built an interactive 3D visualization using Three.js that demonstrates how a LeNet-style CNN processes MNIST handwritten digits.

In this visualization, you can:

- Selecting different digits (0-9) to observe how the input changes

- Clicking on different layers (Conv1 -> Pool1 -> Conv2 -> Pool2 -> FC -> Output) to view information at each layer

- Dragging to rotate and observe the 3D network structure from different angles

- Activating the data flow animation to watch signals propagate from input to output

Key observations:

- Hierarchical progression of feature maps: The input layer is a clear 28x28 pixel image; Conv1 produces 6 blurred feature maps (edge detection); Conv2 produces 16 smaller, more abstract feature maps (pattern combinations)

- Compression of spatial dimensions: After each pooling operation, feature maps shrink by half (28 -> 14 -> 7 -> ...), while the number of channels increases (1 -> 6 -> 16) -- compensating for the loss of spatial information with more "types" of features

- The transition from spatial to semantic: Convolutional layers preserve spatial structure, while fully connected layers break it -- FC layers no longer care "where" a feature is, only "what" it is
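The spatial sizes at each stage follow from the standard output-size formula, floor((n + 2p - k) / s) + 1. The sketch below traces a 28x28 MNIST image through a LeNet-style stack, assuming unpadded 5x5 convolutions and 2x2 pooling with stride 2 as in the Lab 1 model; `conv_out` is a hypothetical helper name.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output side length of a convolution or pooling window."""
    return (size + 2 * padding - kernel) // stride + 1

size = 28                # MNIST input is 28x28
trace = [size]
for name, kernel, stride in [
    ("conv1 5x5", 5, 1),
    ("pool1 2x2", 2, 2),
    ("conv2 5x5", 5, 1),
    ("pool2 2x2", 2, 2),
]:
    size = conv_out(size, kernel, stride)
    trace.append(size)
    print(f"{name}: {size}x{size}")

print(trace)              # [28, 24, 12, 8, 4]
print(16 * size * size)   # 256 values enter the first fully connected layer
```

With `padding=2` on each 5x5 convolution the convolutions preserve size, and the same function gives the 28 -> 14 -> 7 progression quoted in the observations above.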

7. Hands-on Lab 1: MNIST Handwritten Digit Recognition with Feature Map Visualization (Google Colab)

This lab builds a CNN from scratch to train on MNIST and visualizes the convolutional kernels and feature maps at each layer -- letting you see firsthand "what the CNN has learned."

Open Google Colab (CPU is sufficient, GPU is faster), create a new Notebook, and paste the following code blocks in sequence:

7.1 Data Preparation and Baseline Testing

import torch
import torch.nn as nn
import torch.nn.functional as F
import torch.optim as optim
from torchvision import datasets, transforms
from torch.utils.data import DataLoader
import matplotlib.pyplot as plt
import numpy as np

# Load the MNIST dataset (normalized with the dataset's mean and standard deviation)
transform = transforms.Compose([
    transforms.ToTensor(),
    transforms.Normalize((0.1307,), (0.3081,))
])

train_dataset = datasets.MNIST('./data', train=True, download=True, transform=transform)
test_dataset = datasets.MNIST('./data', train=False, transform=transform)

train_loader = DataLoader(train_dataset, batch_size=64, shuffle=True)
test_loader = DataLoader(test_dataset, batch_size=1000, shuffle=False)

# Visualize sample training images
fig, axes = plt.subplots(2, 8, figsize=(16, 4))
for i in range(16):
    img, label = train_dataset[i]
    ax = axes[i // 8][i % 8]
    ax.imshow(img.squeeze(), cmap='gray')
    ax.set_title(f'{label}', fontsize=12)
    ax.axis('off')
plt.suptitle('MNIST Training Samples', fontsize=14)
plt.tight_layout()
plt.show()

print(f"Training set: {len(train_dataset)} images")
print(f"Test set: {len(test_dataset)} images")
print(f"Image shape: {train_dataset[0][0].shape}")

7.2 Building a LeNet-5 Style CNN

# LeNet-5 style CNN (corresponds to the Three.js visualization)
class LeNetCNN(nn.Module):
    def __init__(self):
        super().__init__()
        # Conv1: 1 -> 6 channels, 5x5 kernel
        self.conv1 = nn.Conv2d(1, 6, kernel_size=5, padding=0)
        # Conv2: 6 -> 16 channels, 5x5 kernel
        self.conv2 = nn.Conv2d(6, 16, kernel_size=5, padding=0)
        # FC layers
        self.fc1 = nn.Linear(16 * 4 * 4, 120)
        self.fc2 = nn.Linear(120, 84)
        self.fc3 = nn.Linear(84, 10)
        # Store intermediate feature maps (for visualization)
        self.feature_maps = {}

    def forward(self, x):
        # Conv1 + ReLU + MaxPool
        x = self.conv1(x)                        # [B, 6, 24, 24]
        self.feature_maps['conv1'] = x.detach()
        x = F.relu(x)
        x = F.max_pool2d(x, 2)                   # [B, 6, 12, 12]
        self.feature_maps['pool1'] = x.detach()

        # Conv2 + ReLU + MaxPool
        x = self.conv2(x)                        # [B, 16, 8, 8]
        self.feature_maps['conv2'] = x.detach()
        x = F.relu(x)
        x = F.max_pool2d(x, 2)                   # [B, 16, 4, 4]
        self.feature_maps['pool2'] = x.detach()

        # Flatten + FC
        x = x.view(x.size(0), -1)                # [B, 256]
        x = F.relu(self.fc1(x))                  # [B, 120]
        x = F.relu(self.fc2(x))                  # [B, 84]
        x = self.fc3(x)                          # [B, 10]
        return x

device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')
model = LeNetCNN().to(device)

total_params = sum(p.numel() for p in model.parameters())
print("Model: LeNet-5 style CNN")
print(f"Total parameters: {total_params:,}")
print(f"Device: {device}")
print("\nArchitecture:")
print(model)
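As a sanity check on the `Total parameters` value printed above, the count can be reproduced by hand from the layer shapes. This sketch assumes every layer has a bias term, which is the default for `nn.Conv2d` and `nn.Linear`; the two helper functions are hypothetical names introduced for the calculation.

```python
def conv_params(in_ch, out_ch, k):
    return out_ch * in_ch * k * k + out_ch  # weights + biases

def linear_params(n_in, n_out):
    return n_in * n_out + n_out             # weights + biases

layers = {
    "conv1": conv_params(1, 6, 5),
    "conv2": conv_params(6, 16, 5),
    "fc1": linear_params(16 * 4 * 4, 120),
    "fc2": linear_params(120, 84),
    "fc3": linear_params(84, 10),
}
for name, count in layers.items():
    print(f"{name}: {count:,}")
print(f"total: {sum(layers.values()):,}")   # total: 44,426
```

Most of the parameters sit in the first fully connected layer, not in the convolutions, which is typical for small LeNet-style models.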

7.3 Training the Model

# Train for 5 epochs
optimizer = optim.Adam(model.parameters(), lr=0.001)
criterion = nn.CrossEntropyLoss()

train_losses = []
test_accs = []

for epoch in range(5):
    model.train()
    running_loss = 0.0
    for batch_idx, (data, target) in enumerate(train_loader):
        data, target = data.to(device), target.to(device)
        optimizer.zero_grad()
        output = model(data)
        loss = criterion(output, target)
        loss.backward()
        optimizer.step()
        running_loss += loss.item()

    avg_loss = running_loss / len(train_loader)
    train_losses.append(avg_loss)

    # Evaluate on the test set after every epoch
    model.eval()
    correct = 0
    with torch.no_grad():
        for data, target in test_loader:
            data, target = data.to(device), target.to(device)
            output = model(data)
            pred = output.argmax(dim=1)
            correct += pred.eq(target).sum().item()

    acc = correct / len(test_dataset) * 100
    test_accs.append(acc)
    print(f"Epoch {epoch+1}: loss={avg_loss:.4f}, test_acc={acc:.2f}%")

print(f"\nFinal test accuracy: {test_accs[-1]:.2f}%")

7.4 Visualizing Convolutional Kernels

# Visualize the 6 convolutional kernels of Conv1
conv1_weights = model.conv1.weight.data.cpu()

fig, axes = plt.subplots(1, 6, figsize=(15, 3))
for i in range(6):
    kernel = conv1_weights[i, 0]  # [5, 5]
    axes[i].imshow(kernel, cmap='RdBu_r', vmin=-0.5, vmax=0.5)
    axes[i].set_title(f'Filter {i}', fontsize=11)
    axes[i].axis('off')
plt.suptitle('Conv1 Learned Filters (5x5): red = positive weights, blue = negative weights', fontsize=13)
plt.tight_layout()
plt.show()

# Key observations:
# What patterns have these 5x5 kernels learned?
# - Horizontal stripe pattern -> horizontal edge detector
# - Vertical stripe pattern -> vertical edge detector
# - Diagonal pattern -> diagonal edge detector
# - Bright center / dark surround -> blob detector

7.5 Visualizing Feature Maps: What the CNN "Sees"

# Forward pass on a test image, visualize feature maps at each layer
model.eval()
sample_img, sample_label = test_dataset[0]
sample_input = sample_img.unsqueeze(0).to(device)

with torch.no_grad():
    output = model(sample_input)
pred = output.argmax(dim=1).item()
prob = F.softmax(output, dim=1)[0, pred].item()
print(f"True label: {sample_label}, Predicted: {pred} ({prob:.1%})")

# Plot feature maps at each layer
fig, axes = plt.subplots(4, 8, figsize=(20, 10))

# Row 0: Original input
axes[0][0].imshow(sample_img.squeeze().cpu(), cmap='gray')
axes[0][0].set_title('Input', fontsize=11)
for j in range(8):
    axes[0][j].axis('off')

# Row 1: Conv1 feature maps (6 channels)
conv1_maps = model.feature_maps['conv1'][0].cpu()
for j in range(6):
    axes[1][j].imshow(conv1_maps[j], cmap='viridis')
    axes[1][j].set_title(f'Conv1-{j}', fontsize=10)
    axes[1][j].axis('off')
for j in range(6, 8):
    axes[1][j].axis('off')

# Row 2: Pool1 feature maps (6 channels, half size)
pool1_maps = model.feature_maps['pool1'][0].cpu()
for j in range(6):
    axes[2][j].imshow(pool1_maps[j], cmap='viridis')
    axes[2][j].set_title(f'Pool1-{j}', fontsize=10)
    axes[2][j].axis('off')
for j in range(6, 8):
    axes[2][j].axis('off')

# Row 3: Conv2 feature maps (first 8 of 16 channels)
conv2_maps = model.feature_maps['conv2'][0].cpu()
for j in range(8):
    axes[3][j].imshow(conv2_maps[j], cmap='viridis')
    axes[3][j].set_title(f'Conv2-{j}', fontsize=10)
    axes[3][j].axis('off')

# Label each row to the right of its last column
row_labels = ['Input', 'Conv1 (6ch, 24x24)', 'Pool1 (6ch, 12x12)', 'Conv2 (16ch, 8x8)']
for i, label in enumerate(row_labels):
    axes[i][7].text(1.1, 0.5, label, transform=axes[i][7].transAxes,
                    fontsize=11, verticalalignment='center', color='#333')

plt.suptitle(f'Feature Maps: Digit "{sample_label}" → Predicted "{pred}"', fontsize=15)
plt.tight_layout()
plt.show()

# Key observations:
# Conv1: Each channel detects edges in different orientations; the digit's contour is clearly visible
# Pool1: Resolution decreases, but edge information is preserved with added position invariance
# Conv2: Features become more abstract, no longer simple edges but "combinations of edges"

7.6 Comparing Feature Maps Across Different Digits

# Compare Conv2 feature maps across different digits
fig, axes = plt.subplots(4, 9, figsize=(22, 10))

# Show one sample for each of 4 different digits
digits_to_show = [0, 1, 5, 8]

for row, digit in enumerate(digits_to_show):
    # Find the first test sample of this digit
    for img, label in test_dataset:
        if label == digit:
            break

    inp = img.unsqueeze(0).to(device)
    with torch.no_grad():
        model(inp)  # the forward pass populates model.feature_maps

    # Original image
    axes[row][0].imshow(img.squeeze().cpu(), cmap='gray')
    axes[row][0].set_title(f'Digit {digit}', fontsize=12, fontweight='bold')
    axes[row][0].axis('off')

    # First 8 of 16 Conv2 channels
    fmaps = model.feature_maps['conv2'][0].cpu()
    for j in range(8):
        axes[row][j+1].imshow(fmaps[j], cmap='hot')
        if row == 0:
            axes[row][j+1].set_title(f'Ch-{j}', fontsize=10)
        axes[row][j+1].axis('off')

plt.suptitle('Conv2 Feature Maps: different digits activate different channels', fontsize=15)
plt.tight_layout()
plt.show()

# Core observations:
# "0" and "8" both have ring-like structures -> certain channels show high activation for both
# "1" is a vertical stroke -> only channels detecting vertical features are activated
# "5" has horizontal and curved elements -> channels with mixed patterns are activated
# This is the "visual basis" by which CNNs distinguish different digits

8. Hands-on Lab 2: TextCNN for Text Sentiment Classification (Google Colab)

This lab builds a TextCNN[8] from scratch to perform sentiment classification on movie reviews -- demonstrating how CNNs seamlessly transfer from the "image" domain to the "text" domain.

Open Google Colab (CPU is sufficient), create a new Notebook, and paste the following code blocks in sequence:

8.1 Data Preparation

import torch
import torch.nn as nn
import torch.nn.functional as F
import torch.optim as optim
import numpy as np
import matplotlib.pyplot as plt
from collections import Counter

# Simple movie review dataset
train_texts = [
    "this movie is great and wonderful",
    "excellent film with brilliant acting",
    "amazing story beautifully told",
    "fantastic performances and stunning visuals",
    "loved every moment of this film",
    "one of the best movies i have seen",
    "a masterpiece of modern cinema",
    "incredibly moving and powerful",
    "this movie is terrible and boring",
    "worst film i have ever watched",
    "awful acting and terrible script",
    "complete waste of time and money",
    "painfully bad from start to finish",
    "disappointing and utterly forgettable",
    "horrible movie with no redeeming qualities",
    "dull uninspired and predictable",
]
train_labels = [1,1,1,1,1,1,1,1, 0,0,0,0,0,0,0,0]  # 1=positive, 0=negative

test_texts = [
    "a great and wonderful experience",
    "terrible waste of my time",
    "brilliant acting in this film",
    "the worst movie this year",
    "absolutely stunning and moving",
    "boring and utterly terrible",
]
test_labels = [1, 0, 1, 0, 1, 0]

# Build vocabulary (index 0 = padding, index 1 = unknown word).
# For simplicity the vocabulary is built from both the training and test texts.
all_words = ' '.join(train_texts + test_texts).split()
word_counts = Counter(all_words)
vocab = {word: idx + 2 for idx, (word, _) in enumerate(word_counts.most_common())}
vocab['<PAD>'] = 0
vocab['<UNK>'] = 1

def text_to_ids(text, max_len=12):
    words = text.lower().split()
    ids = [vocab.get(w, 1) for w in words]  # unknown words map to <UNK>
    # Pad or truncate to a fixed length
    if len(ids) < max_len:
        ids += [0] * (max_len - len(ids))
    else:
        ids = ids[:max_len]
    return ids

MAX_LEN = 12
X_train = torch.tensor([text_to_ids(t, MAX_LEN) for t in train_texts])
y_train = torch.tensor(train_labels, dtype=torch.long)
X_test = torch.tensor([text_to_ids(t, MAX_LEN) for t in test_texts])
y_test = torch.tensor(test_labels, dtype=torch.long)

print(f"Vocabulary size: {len(vocab)}")
print(f"Training samples: {len(train_texts)}")
print(f"Sequence length: {MAX_LEN}")
print("\nExample encoding:")
print(f" '{train_texts[0]}'")
print(f" → {X_train[0].tolist()}")

8.2 Building the TextCNN Model

# TextCNN in the style of Kim (2014)
class TextCNN(nn.Module):
    def __init__(self, vocab_size, embed_dim=32, num_filters=16, filter_sizes=(2, 3, 4), num_classes=2):
        super().__init__()
        self.embedding = nn.Embedding(vocab_size, embed_dim, padding_idx=0)

        # Multi-scale 1D convolutions (capturing 2-gram, 3-gram, 4-gram respectively)
        self.convs = nn.ModuleList([
            nn.Conv1d(embed_dim, num_filters, kernel_size=fs)
            for fs in filter_sizes
        ])
        self.dropout = nn.Dropout(0.3)
        self.fc = nn.Linear(num_filters * len(filter_sizes), num_classes)

        # Store intermediate features (for visualization)
        self.conv_outputs = {}

    def forward(self, x):
        # x: [batch, seq_len]
        embedded = self.embedding(x)              # [batch, seq_len, embed_dim]
        embedded = embedded.permute(0, 2, 1)      # [batch, embed_dim, seq_len]

        conv_outs = []
        for conv in self.convs:
            c = F.relu(conv(embedded))            # [batch, num_filters, seq_len - fs + 1]
            self.conv_outputs[f'conv_{conv.kernel_size[0]}gram'] = c.detach()
            pooled = F.max_pool1d(c, c.size(2)).squeeze(2)  # [batch, num_filters]
            conv_outs.append(pooled)

        # Concatenate outputs from all convolutional kernels
        cat = torch.cat(conv_outs, dim=1)         # [batch, num_filters * len(filter_sizes)]
        cat = self.dropout(cat)
        logits = self.fc(cat)                     # [batch, num_classes]
        return logits

model = TextCNN(vocab_size=len(vocab), embed_dim=32, num_filters=16, filter_sizes=[2, 3, 4])

total_params = sum(p.numel() for p in model.parameters())
print("TextCNN Architecture:")
print(f" Embedding: {len(vocab)} × 32")
print(" Conv filters: 16 × [2-gram, 3-gram, 4-gram]")
print(f" Total parameters: {total_params:,}")
print(f"\n{model}")

8.3 Training TextCNN

# Train TextCNN (full-batch training, since the dataset has only 16 sentences)
optimizer = optim.Adam(model.parameters(), lr=0.005)
criterion = nn.CrossEntropyLoss()

losses = []
for epoch in range(80):
    model.train()
    optimizer.zero_grad()
    output = model(X_train)
    loss = criterion(output, y_train)
    loss.backward()
    optimizer.step()
    losses.append(loss.item())

    # Report accuracy every 20 epochs
    if (epoch + 1) % 20 == 0:
        model.eval()
        with torch.no_grad():
            train_pred = model(X_train).argmax(dim=1)
            test_pred = model(X_test).argmax(dim=1)
        train_acc = (train_pred == y_train).float().mean().item()
        test_acc = (test_pred == y_test).float().mean().item()
        print(f"Epoch {epoch+1:3d}: loss={loss.item():.4f}, train_acc={train_acc:.0%}, test_acc={test_acc:.0%}")

plt.figure(figsize=(8, 3))
plt.plot(losses, color='#0077b6')
plt.xlabel('Epoch')
plt.ylabel('Loss')
plt.title('TextCNN Training Loss')
plt.grid(True, alpha=0.3)
plt.tight_layout()
plt.show()

8.4 Visualizing N-gram Convolution Activations

# Visualize TextCNN n-gram activation patterns for each sentence
model.eval()

def visualize_text_cnn(text, label_name):
    ids = torch.tensor([text_to_ids(text, MAX_LEN)])
    with torch.no_grad():
        logits = model(ids)
    pred = logits.argmax(dim=1).item()
    probs = F.softmax(logits, dim=1)[0]

    words = text.lower().split()[:MAX_LEN]
    sentiment = "Positive" if pred == 1 else "Negative"

    fig, axes = plt.subplots(1, 3, figsize=(18, 2.5))
    for idx, (name, conv_out) in enumerate(model.conv_outputs.items()):
        activations = conv_out[0].mean(dim=0).numpy()  # average across all filters
        n = len(activations)
        gram_size = int(name.split('_')[1].replace('gram', ''))  # 'conv_3gram' -> 3

        # Build n-gram labels; windows that run into padding are marked 'pad-i'
        ngram_labels = []
        for i in range(n):
            if i + gram_size <= len(words):
                ngram_labels.append(' '.join(words[i:i+gram_size]))
            else:
                ngram_labels.append(f'pad-{i}')

        colors = ['#b8922e' if v > 0 else '#0077b6' for v in activations]
        axes[idx].barh(range(n), activations, color=colors)
        axes[idx].set_yticks(range(n))
        axes[idx].set_yticklabels(ngram_labels, fontsize=9)
        axes[idx].set_title(f'{gram_size}-gram activation', fontsize=11)
        axes[idx].invert_yaxis()

    plt.suptitle(f'"{text}" → {sentiment} (expected: {label_name}, pos={probs[1]:.0%})', fontsize=13)
    plt.tight_layout()
    plt.show()

# Analyze several test samples
visualize_text_cnn("a great and wonderful experience", "Positive")
visualize_text_cnn("terrible waste of my time", "Negative")
visualize_text_cnn("brilliant acting in this film", "Positive")

# What to look for (exact activations vary between training runs):
# - In positive reviews, n-grams like "great and wonderful" and "brilliant acting" tend to show the highest activation
# - In negative reviews, n-grams like "terrible waste" and "waste of my" tend to show the highest activation
# - Different kernel sizes capture key phrases of different lengths
# - This is the "feature detection" mechanism of CNNs in text classification

8.5 Testing with Custom Text

# Test with custom text
custom_texts = [
    "this is a great movie",
    "terrible and boring film",
    "wonderful story with great acting",
    "waste of time terrible movie",
]

model.eval()
print("Custom Text Predictions:")
print("=" * 50)
for text in custom_texts:
    ids = torch.tensor([text_to_ids(text, MAX_LEN)])
    with torch.no_grad():
        logits = model(ids)
    probs = F.softmax(logits, dim=1)[0]
    pred = "Positive" if logits.argmax(dim=1).item() == 1 else "Negative"
    print(f" [{pred:>8}] (pos={probs[1]:.0%}) {text}")

# TextCNN vs Image CNN comparison (right column widened so every entry aligns)
print("\n" + "=" * 50)
print("TextCNN vs Image CNN Architecture Comparison:")
print(f" {'Image CNN':>12} | {'TextCNN':>15}")
print(f" {'2D Conv':>12} | {'1D Conv':>15}")
print(f" {'Pixel Nbrhd':>12} | {'Word Window':>15}")
print(f" {'Edge/Texture':>12} | {'n-gram':>15}")
print(f" {'Spatial Pool':>12} | {'Global MaxPool':>15}")
print(f" {'Cls/Detect':>12} | {'Sentiment/Topic':>15}")

9. From CNNs to Modern Architectures: The Vision Transformer Challenge

In 2021, Dosovitskiy et al.[11] launched a direct challenge to CNN dominance in the visual domain with the Vision Transformer (ViT). ViT divides images into 16x16 patches, treats each patch as a token, and feeds them into a standard Transformer -- entirely without convolution operations.
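The patch-to-token step can be sketched with plain NumPy reshapes. The 224x224 RGB input size is an assumption made for illustration, `patchify` is a hypothetical helper name, and the learned linear projection and position embeddings that ViT applies to these tokens are omitted.

```python
import numpy as np

def patchify(image, patch=16):
    """Split an [H, W, C] image into flattened, non-overlapping patch tokens."""
    h, w, c = image.shape
    assert h % patch == 0 and w % patch == 0, "image size must be divisible by patch size"
    grid = image.reshape(h // patch, patch, w // patch, patch, c)
    grid = grid.transpose(0, 2, 1, 3, 4)         # [rows, cols, patch, patch, C]
    return grid.reshape(-1, patch * patch * c)   # [num_tokens, token_dim]

image = np.arange(224 * 224 * 3, dtype=np.float32).reshape(224, 224, 3)
tokens = patchify(image)
print(tokens.shape)  # (196, 768)
```

A 224x224 image therefore becomes a sequence of 14 x 14 = 196 tokens, each holding the 16 x 16 x 3 = 768 raw pixel values of one patch.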

With large-scale pretraining data, ViT matched or even surpassed CNNs of comparable parameter counts. This raised a fundamental question: Is CNN's inductive bias (locality, translation equivariance) an advantage or a limitation?

Liu et al.[15] provided a profound answer in 2022: ConvNeXt modernized CNNs using Transformer design principles -- larger convolutional kernels (7x7), Layer Normalization replacing Batch Normalization, fewer activation functions -- and the result was a pure CNN architecture that once again matched ViT performance.

This tells us: it is not that CNNs are obsolete, but that certain design conventions of legacy CNNs have become outdated. The core strengths of CNNs -- locality and weight sharing -- remain effective; what needs updating are the specific engineering choices.

In practice, the choice between CNNs and Transformers depends on the use case:

- Edge Devices / Real-time Inference: CNNs (especially MobileNet, EfficientNet) remain the best choice -- with clear advantages in computational efficiency and model size

- Large-scale Pretraining / Global Context Required: ViT and Transformer variants have the edge -- the global receptive field of self-attention provides stronger learning capability with large datasets

- Hybrid Architectures: An increasing number of models incorporate attention mechanisms into CNN backbones (e.g., the squeeze-and-excitation channel attention used in EfficientNetV2) or add convolutions to Transformers (e.g., CvT) -- the boundary between the two is blurring

10. Conclusion

From Hubel and Wiesel's 1962 cat visual cortex experiment, to LeCun's 1998 LeNet-5, to AlexNet's 2012 ignition of the deep learning revolution, to today's convergence of CNNs and Transformers -- the story of convolutional neural networks is one of the most elegant narratives in artificial intelligence.

The core principles that CNNs teach us -- local feature detection, hierarchical representation learning, and the power of inductive bias -- will not become obsolete with the rise of Transformers. On the contrary, these principles are being integrated into more modern architectures in new forms. To understand CNNs is to understand the most fundamental "first principles" of deep learning.

If your team is evaluating technical solutions for computer vision or text classification, or needs to choose between CNN, ViT, and hybrid architectures, we welcome a deep technical conversation. Meta Intelligence's research team can help you identify the most suitable architecture based on your specific deployment environment, data scale, and latency requirements.