- RNN's core innovation lies in temporal propagation of hidden states[1] — giving neural networks "memory" and the ability to process sequences of arbitrary length

- LSTM[2] mitigates the vanishing gradient problem[3] with a three-gate mechanism (forget gate, input gate, output gate); GRU[4] achieves comparable performance with a leaner two-gate structure

- From Seq2Seq[6] to the attention mechanism[7], RNN pioneered the Encoder-Decoder paradigm of modern NLP, eventually evolving into the Transformer architecture[11]

- This article includes two Google Colab labs: LSTM Shakespeare-style text generation and LSTM image sequence classification (treating MNIST images as 28-step time series)

1. The Power of Sequences: Why the World Needs RNN

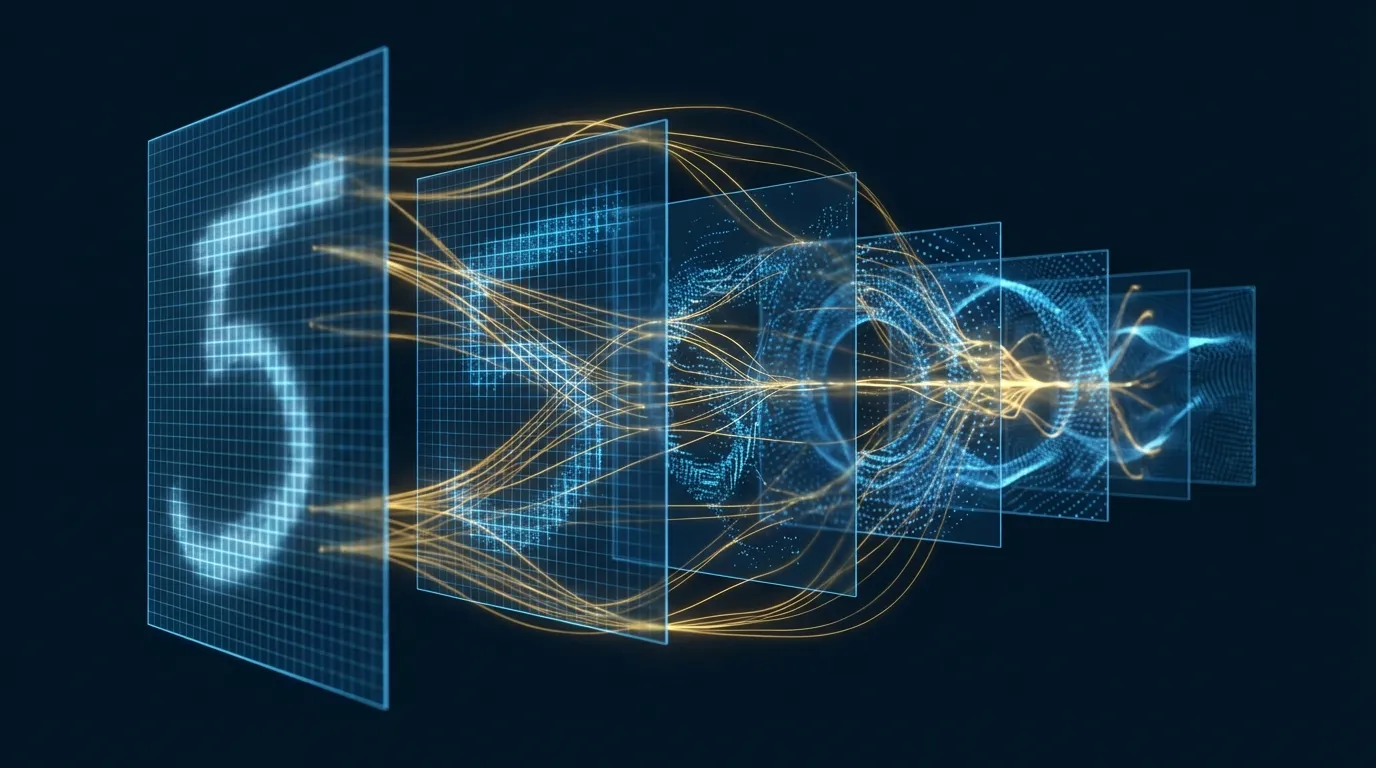

In the history of deep learning, there has been a fundamental challenge: how to make neural networks understand "order"? Traditional fully connected networks and convolutional neural networks process fixed-size inputs — an image, a set of features. But the real world is full of sequential data: language is a sequence of words, speech is a sequence of sound waves, stock prices are a sequence through time, and video is a sequence of frames.

In 1990, Jeffrey Elman proposed the Simple Recurrent Network (SRN)[1], introducing a seemingly simple but profoundly significant design: feeding the hidden state from the previous time step back into the current time step. This "loop" gave networks memory — they no longer only see the current input but can also "remember" what they've seen before.

RNN's core formulas are concise and elegant:

h_t = tanh(W_hh · h_{t-1} + W_xh · x_t + b_h)

y_t = W_hy · h_t + b_y

Where:

h_t = Hidden state at time step t (memory)

x_t = Input at time step t

y_t = Output at time step t

W_hh, W_xh, W_hy = Weight matrices (shared across all time steps)

This weight sharing design is a major advantage of RNNs: regardless of sequence length, the model parameter count stays the same. A trained RNN can process a 10-word sentence as well as a 1000-word article.
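The two formulas above can be sketched in a few lines of NumPy. This is a minimal illustration rather than a reference implementation: the function name `rnn_forward`, the layer sizes, and the random initialization are assumptions made for the example.

```python
import numpy as np

def rnn_forward(xs, W_xh, W_hh, W_hy, b_h, b_y):
    """Run a vanilla (Elman) RNN over a sequence xs of shape (T, input_dim)."""
    h = np.zeros(W_hh.shape[0])           # h_0: start with an empty memory
    ys = []
    for x_t in xs:                         # the same weights are reused at every step
        h = np.tanh(W_hh @ h + W_xh @ x_t + b_h)
        ys.append(W_hy @ h + b_y)
    return np.stack(ys), h

rng = np.random.default_rng(0)
input_dim, hidden_dim, output_dim = 4, 8, 3    # illustrative sizes
params = dict(
    W_xh=rng.normal(scale=0.1, size=(hidden_dim, input_dim)),
    W_hh=rng.normal(scale=0.1, size=(hidden_dim, hidden_dim)),
    W_hy=rng.normal(scale=0.1, size=(output_dim, hidden_dim)),
    b_h=np.zeros(hidden_dim),
    b_y=np.zeros(output_dim),
)

# The same parameter set handles a 10-step and a 1000-step sequence.
ys_short, _ = rnn_forward(rng.normal(size=(10, input_dim)), **params)
ys_long, _ = rnn_forward(rng.normal(size=(1000, input_dim)), **params)
print(ys_short.shape, ys_long.shape)   # (10, 3) (1000, 3)
```

Only the loop length changes between the two calls; the parameter count is fixed by the layer sizes, not by the sequence length.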

2. Vanishing Gradients: RNN's Fatal Weakness

In theory, RNNs can capture arbitrary long-range dependencies. But in practice, Bengio et al.'s 1994 research[3] revealed a harsh reality: the vanishing gradient problem makes it nearly impossible for standard RNNs to learn long-term dependencies beyond 10-20 steps.

The root of the problem lies in the mathematical nature of Backpropagation Through Time (BPTT)[12]. In a time-unrolled RNN, gradients must pass through multiple time steps of chain multiplication:

∂L/∂h_0 = ∂L/∂h_T · ∂h_T/∂h_{T-1} · ... · ∂h_1/∂h_0

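Each ∂h_t/∂h_{t-1} term is a Jacobian of the form diag(1 - h_t²) · W_hh, so the chain multiplies by W_hh once per time step, scaled by tanh derivatives that never exceed 1:

- If the largest singular value of W_hh is < 1 → Gradients decay exponentially (vanishing)

- If the largest eigenvalue magnitude of W_hh is > 1 → Gradients can grow exponentially (exploding)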

Intuitively, if you want a standard RNN to remember "what the first word was" to predict the 100th word, the gradient must traverse 99 time steps. Multiplying by a value less than 1 at each step, after 99 multiplications the gradient is virtually zero — the network simply "cannot learn" this long-range dependency.
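The effect of the repeated multiplication can be checked numerically. The sketch below makes two simplifying assumptions that are not in the text above: the RNN is linear (the tanh derivative is dropped, which would only shrink gradients further), and W_hh is a scaled orthogonal matrix, so every singular value equals the chosen scale.

```python
import numpy as np

rng = np.random.default_rng(0)
hidden_dim, steps = 16, 99

# Random orthogonal matrix Q: all of its singular values are exactly 1.
Q, _ = np.linalg.qr(rng.normal(size=(hidden_dim, hidden_dim)))

def backprop_norm(scale, steps):
    """Norm of a unit gradient after `steps` multiplications by (scale * Q)^T."""
    W_hh = scale * Q
    grad = np.ones(hidden_dim) / np.sqrt(hidden_dim)   # unit-norm dL/dh_T
    for _ in range(steps):
        grad = W_hh.T @ grad     # linear RNN: dh_t/dh_{t-1} = W_hh
    return np.linalg.norm(grad)

vanish = backprop_norm(0.9, steps)    # equals 0.9 ** 99
explode = backprop_norm(1.1, steps)   # equals 1.1 ** 99
print(f"scale 0.9: {vanish:.2e}   scale 1.1: {explode:.2e}")
```

With a scale of 0.9 the gradient norm falls by more than four orders of magnitude over 99 steps, while a scale of 1.1 inflates it by about as much, which is why gradient clipping is a common companion to RNN training.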

3. LSTM: The Revolution of Gated Memory

In 1997, Sepp Hochreiter and Jürgen Schmidhuber proposed Long Short-Term Memory (LSTM)[2], which largely overcomes the vanishing gradient problem through a gating mechanism. LSTM's core innovation is a "memory highway" (the cell state) that lets information flow across many time steps with little loss.

An LSTM unit contains three gates and one memory channel:

| Component | Formula | Function |
|---|---|---|
| Forget Gate f_t | σ(W_f · [h_{t-1}, x_t] + b_f) | Decides "what to forget": removes outdated info from cell state |
| Input Gate i_t | σ(W_i · [h_{t-1}, x_t] + b_i) | Decides "what to remember": writes new info to cell state |
| Candidate Memory C̃_t | tanh(W_C · [h_{t-1}, x_t] + b_C) | Generates new candidate memory content |
| Cell State C_t | f_t ⊙ C_{t-1} + i_t ⊙ C̃_t | Updates memory: forget old + add new |
| Output Gate o_t | σ(W_o · [h_{t-1}, x_t] + b_o) | Decides "what to output": reads from cell state |
| Hidden State h_t | o_t ⊙ tanh(C_t) | Output for the current time step |

Why does LSTM cope with vanishing gradients? The key lies in the cell state update C_t = f_t ⊙ C_{t-1} + i_t ⊙ C̃_t, where C̃_t is the candidate memory. The update is additive: along the cell state path, the gradient from C_t back to C_{t-1} is scaled elementwise by the forget gate f_t alone, rather than by a weight matrix and a tanh derivative at every step. When the forget gate is close to 1, gradients propagate to the distant past with nearly no loss; when it is close to 0, the network deliberately cuts the connection.
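To make the gate equations concrete, here is a minimal NumPy sketch of a single LSTM step. The function name `lstm_step`, the stacking of the four weight blocks into one matrix `W`, and the extreme bias values are assumptions chosen for illustration. The biases are set so that the forget gate sits near 1 and the input gate near 0, the regime in which the cell state acts as a near-lossless memory.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def lstm_step(x_t, h_prev, c_prev, W, b):
    """One LSTM step. W maps [h_prev, x_t] to four stacked pre-activations."""
    n = h_prev.shape[0]
    z = W @ np.concatenate([h_prev, x_t]) + b    # shape: (4 * n,)
    f = sigmoid(z[0 * n:1 * n])                  # forget gate
    i = sigmoid(z[1 * n:2 * n])                  # input gate
    c_tilde = np.tanh(z[2 * n:3 * n])            # candidate memory
    o = sigmoid(z[3 * n:4 * n])                  # output gate
    c_t = f * c_prev + i * c_tilde               # additive cell state update
    h_t = o * np.tanh(c_t)
    return h_t, c_t

rng = np.random.default_rng(0)
input_dim, hidden_dim = 3, 5                     # illustrative sizes
W = rng.normal(scale=0.1, size=(4 * hidden_dim, hidden_dim + input_dim))
b = np.zeros(4 * hidden_dim)
b[0 * hidden_dim:1 * hidden_dim] = 20.0          # forget gate pushed toward 1
b[1 * hidden_dim:2 * hidden_dim] = -20.0         # input gate pushed toward 0

c0 = rng.normal(size=hidden_dim)
h, c = np.zeros(hidden_dim), c0.copy()
for _ in range(100):
    h, c = lstm_step(rng.normal(size=input_dim), h, c, W, b)

print(np.max(np.abs(c - c0)))   # stays tiny: the cell state survives 100 steps
```

A trained network would learn gate values from data rather than have them pinned by biases; the pinned values only isolate the "forget gate close to 1" case described above.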

4. GRU: A More Streamlined Gating Design

In 2014, Cho et al. proposed the Gated Recurrent Unit (GRU)[4], which can be viewed as a simplified version of LSTM. GRU merges the forget and input gates into a single update gate and eliminates the independent cell state, reducing parameter count by approximately 25%.

# GRU Core Formulas

z_t = σ(W_z · [h_{t-1}, x_t]) # Update gate: how much of the new candidate to write (1 - z_t of the old state is kept)

r_t = σ(W_r · [h_{t-1}, x_t]) # Reset gate: how much of the old state feeds into the candidate

h̃_t = tanh(W · [r_t ⊙ h_{t-1}, x_t]) # Candidate hidden state

h_t = (1 - z_t) ⊙ h_{t-1} + z_t ⊙ h̃_t # Update hidden state: blend old state and candidate

Chung et al.'s empirical study[16] showed that GRU and LSTM perform comparably across most sequence tasks. GRU's advantage lies in faster training speed and lower memory consumption, making it suitable for resource-constrained scenarios.
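The GRU formulas and the roughly 25% parameter saving can both be sketched in NumPy. The helper names `gru_step` and `gated_param_count` are hypothetical, and the count assumes one weight block and one bias vector per gate or candidate. Library implementations may store biases differently, which changes the absolute numbers but not the 3-to-4 ratio.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def gru_step(x_t, h_prev, W_z, W_r, W_h):
    """One GRU step following the formulas above (biases omitted, as in the text)."""
    hx = np.concatenate([h_prev, x_t])
    z = sigmoid(W_z @ hx)                                        # update gate
    r = sigmoid(W_r @ hx)                                        # reset gate
    h_tilde = np.tanh(W_h @ np.concatenate([r * h_prev, x_t]))   # candidate state
    return (1.0 - z) * h_prev + z * h_tilde

def gated_param_count(num_blocks, input_dim, hidden_dim):
    """Weights plus one bias vector for each gate/candidate block."""
    return num_blocks * (hidden_dim * (hidden_dim + input_dim) + hidden_dim)

input_dim, hidden_dim = 128, 256                             # illustrative sizes
lstm_params = gated_param_count(4, input_dim, hidden_dim)    # f, i, o, candidate
gru_params = gated_param_count(3, input_dim, hidden_dim)     # z, r, candidate
saving = 1 - gru_params / lstm_params
print(lstm_params, gru_params, f"{saving:.0%}")

rng = np.random.default_rng(0)
W_z, W_r, W_h = (rng.normal(scale=0.1, size=(4, 4 + 3)) for _ in range(3))
h_next = gru_step(rng.normal(size=3), np.zeros(4), W_z, W_r, W_h)
```

Because the GRU keeps three weight blocks where the LSTM keeps four, the saving is one quarter regardless of the layer sizes chosen.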

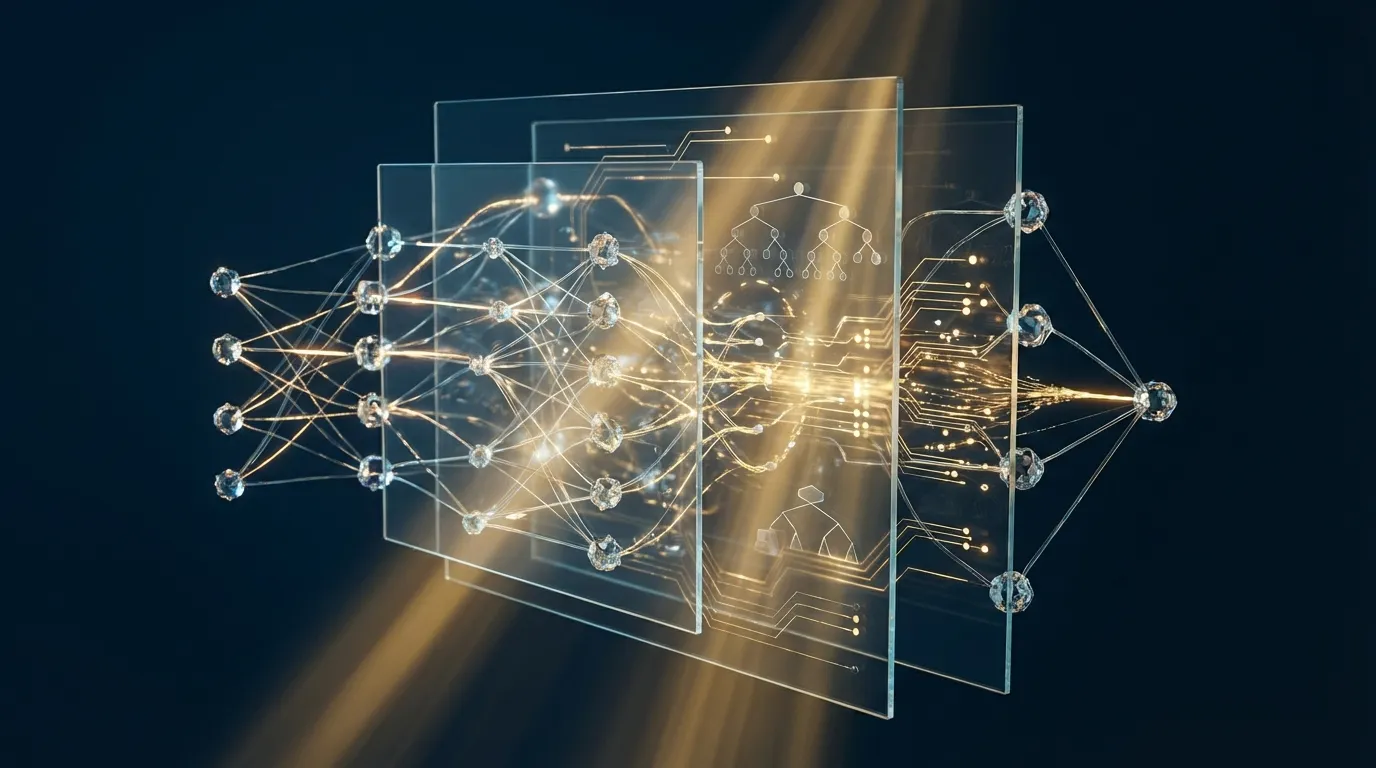

5. Advanced RNN Architectures: Bidirectional, Stacked, Seq2Seq

5.1 Bidirectional RNN

The bidirectional RNN proposed by Schuster and Paliwal[5] uses hidden states from two directions simultaneously: forward (reading past → present) and backward (reading future → present). The final hidden representation is the concatenation of both directions, allowing the model to leverage both past and future context. In tasks like named entity recognition and part-of-speech tagging, bidirectional LSTM has long been the standard configuration.
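The concatenation of the two directions can be sketched as follows. For brevity the example uses a plain tanh RNN rather than an LSTM. The helper `run_rnn`, the sizes, and the random weights are assumptions for illustration.

```python
import numpy as np

def run_rnn(xs, W_xh, W_hh):
    """Return the hidden state at every step of a simple tanh RNN."""
    h = np.zeros(W_hh.shape[0])
    states = []
    for x_t in xs:
        h = np.tanh(W_hh @ h + W_xh @ x_t)
        states.append(h)
    return np.stack(states)                       # (T, hidden_dim)

rng = np.random.default_rng(0)
T, input_dim, hidden_dim = 6, 4, 5                # illustrative sizes
xs = rng.normal(size=(T, input_dim))

# Each direction has its own parameters.
shapes = [(hidden_dim, input_dim), (hidden_dim, hidden_dim)]
fwd = [rng.normal(scale=0.3, size=s) for s in shapes]
bwd = [rng.normal(scale=0.3, size=s) for s in shapes]

h_fwd = run_rnn(xs, *fwd)                         # step t has seen x_0 .. x_t
h_bwd = run_rnn(xs[::-1], *bwd)[::-1]             # step t has seen x_t .. x_{T-1}
h_bi = np.concatenate([h_fwd, h_bwd], axis=1)     # (T, 2 * hidden_dim)
print(h_bi.shape)   # (6, 10)
```

The backward pass is simply the same recurrence run over the reversed sequence, with its outputs flipped back so that row t of both arrays refers to the same position.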

5.2 Deep / Stacked RNN

Graves et al.[8] demonstrated that stacking multiple RNN layers (where each layer's output serves as the next layer's input) can learn more abstract sequence representations. Combined with Dropout[14] regularization, deep LSTMs achieved breakthrough results in speech recognition.

5.3 Seq2Seq and the Attention Mechanism

In 2014, Sutskever et al.[6] proposed the Sequence-to-Sequence (Seq2Seq) architecture: an LSTM Encoder compresses the input sequence into a fixed-dimensional vector, and another LSTM Decoder generates the output sequence from this vector. This architecture achieved remarkable success in machine translation.

However, compressing an entire input sequence into a single fixed-length vector creates an information bottleneck. In 2015, Bahdanau et al.[7] introduced the attention mechanism, allowing the Decoder to "look back" at all of the Encoder's hidden states when generating each word, dynamically focusing on the most relevant input parts. The attention mechanism not only dramatically improved translation quality but also paved the way for the later Transformer[11].
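The "look back" step can be sketched for a single decoder step. The code below follows the additive scoring form associated with Bahdanau et al.[7]. The function name, the parameter names `W_s`, `W_h`, `v`, and all sizes are assumptions for illustration, and the weights are random rather than trained.

```python
import numpy as np

def softmax(z):
    z = z - np.max(z)              # subtract the max for numerical stability
    e = np.exp(z)
    return e / e.sum()

def additive_attention(s_prev, enc_states, W_s, W_h, v):
    """Additive attention for one decoder step.

    s_prev:     (dec_dim,)    previous decoder hidden state
    enc_states: (T, enc_dim)  all encoder hidden states
    Returns attention weights (T,) and a context vector (enc_dim,).
    """
    scores = np.tanh(enc_states @ W_h.T + W_s @ s_prev) @ v   # (T,)
    weights = softmax(scores)               # how much to focus on each input step
    context = weights @ enc_states          # weighted sum of encoder states
    return weights, context

rng = np.random.default_rng(0)
T, enc_dim, dec_dim, attn_dim = 7, 6, 5, 4                    # illustrative sizes
enc_states = rng.normal(size=(T, enc_dim))
s_prev = rng.normal(size=dec_dim)
W_s = rng.normal(size=(attn_dim, dec_dim))
W_h = rng.normal(size=(attn_dim, enc_dim))
v = rng.normal(size=attn_dim)

weights, context = additive_attention(s_prev, enc_states, W_s, W_h, v)
print(weights.round(3), context.shape)
```

The context vector is recomputed at every decoder step, so the decoder is no longer limited to a single fixed-length summary of the input.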

6. The Application Spectrum of RNN

| Application Domain | Input→Output Pattern | Representative Architecture | Key Breakthrough |
|---|---|---|---|
| Speech Recognition | Sequence→Sequence | Deep BiLSTM + CTC[15] | CTC loss enables alignment-free end-to-end training |
| Machine Translation | Sequence→Sequence | Seq2Seq + Attention | Attention mechanism[7] resolves long sequence translation bottleneck |
| Text Generation | Sequence→Sequence | Character/word-level LSTM[10] | Karpathy demonstrated RNN can learn the structure of code and mathematical formulas |
| Image Captioning | Image→Sequence | CNN + LSTM[9] | Combining visual feature extraction with language generation |
| Sentiment Analysis | Sequence→Category | BiLSTM + Attention | Bidirectional context + attention focusing on key words |
| Time Series Forecasting | Sequence→Value | Stacked LSTM | Multi-layer abstraction captures long and short-term trends |

7. Hands-on Lab 1: LSTM Shakespeare-Style Text Generation (Google Colab)

In this lab, we will train a character-level LSTM model to generate Shakespeare-style text[10]. The model learns statistical patterns between characters, then generates entirely new text character by character.

# ============================================================

# Hands-on Lab 1: LSTM Character-Level Text Generation

# Environment: Google Colab (Free GPU)

# Estimated runtime: ~8 minutes

# ============================================================

import torch

import torch.nn as nn

import numpy as np

# ------ 1. Data Preparation: Download Shakespeare Text ------

import urllib.request

url = "https://raw.githubusercontent.com/karpathy/char-rnn/master/data/tinyshakespeare/input.txt"

urllib.request.urlretrieve(url, "shakespeare.txt")

with open("shakespeare.txt", "r") as f:
    text = f.read()

print(f"Text length: {len(text):,} characters")

print(f"First 200 characters:\n{text[:200]}")

# Build character ↔ index mapping

chars = sorted(set(text))

vocab_size = len(chars)

char_to_idx = {c: i for i, c in enumerate(chars)}

idx_to_char = {i: c for i, c in enumerate(chars)}

print(f"Vocabulary size: {vocab_size} unique characters")

# Encode text

data = np.array([char_to_idx[c] for c in text])

# ------ 2. Build Training Dataset ------

seq_length = 100

batch_size = 64

def get_batch(data, seq_length, batch_size):
    """Randomly sample a batch of sequences"""
    max_start = len(data) - seq_length - 1
    starts = np.random.randint(0, max_start, size=batch_size)
    x = np.array([data[s:s+seq_length] for s in starts])
    y = np.array([data[s+1:s+seq_length+1] for s in starts])
    return torch.tensor(x, dtype=torch.long), torch.tensor(y, dtype=torch.long)

# ------ 3. Define LSTM Model ------

class CharLSTM(nn.Module):
    def __init__(self, vocab_size, embed_dim=128, hidden_dim=256, num_layers=2, dropout=0.3):
        super().__init__()
        self.hidden_dim = hidden_dim
        self.num_layers = num_layers
        self.embed = nn.Embedding(vocab_size, embed_dim)
        self.lstm = nn.LSTM(embed_dim, hidden_dim, num_layers,
                            batch_first=True, dropout=dropout)
        self.fc = nn.Linear(hidden_dim, vocab_size)

    def forward(self, x, hidden=None):
        emb = self.embed(x)                    # (batch, seq, embed)
        out, hidden = self.lstm(emb, hidden)   # (batch, seq, hidden)
        logits = self.fc(out)                  # (batch, seq, vocab)
        return logits, hidden

    def init_hidden(self, batch_size, device):
        h = torch.zeros(self.num_layers, batch_size, self.hidden_dim, device=device)
        c = torch.zeros(self.num_layers, batch_size, self.hidden_dim, device=device)
        return (h, c)

# ------ 4. Training ------

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

print(f"Using device: {device}")

model = CharLSTM(vocab_size).to(device)

optimizer = torch.optim.Adam(model.parameters(), lr=0.002)

criterion = nn.CrossEntropyLoss()

print(f"Model parameters: {sum(p.numel() for p in model.parameters()):,}")

num_steps = 3000

for step in range(1, num_steps + 1):
    model.train()
    x, y = get_batch(data, seq_length, batch_size)
    x, y = x.to(device), y.to(device)

    logits, _ = model(x)
    loss = criterion(logits.view(-1, vocab_size), y.view(-1))

    optimizer.zero_grad()
    loss.backward()
    nn.utils.clip_grad_norm_(model.parameters(), 5.0)  # Prevent gradient explosion
    optimizer.step()

    if step % 500 == 0:
        print(f"Step {step}/{num_steps}, Loss: {loss.item():.4f}")

# ------ 5. Text Generation ------

def generate(model, start_text="ROMEO:\n", length=500, temperature=0.8):
    """Generate text with the trained model"""
    model.eval()
    chars_idx = [char_to_idx[c] for c in start_text]
    hidden = model.init_hidden(1, device)
    generated = list(start_text)

    # No gradients are needed for warm-up or sampling
    with torch.no_grad():
        # Build context using start_text (all characters except the last)
        for ch_idx in chars_idx[:-1]:
            inp = torch.tensor([[ch_idx]], device=device)
            _, hidden = model(inp, hidden)

        # The last prompt character is the first input of the sampling loop
        inp = torch.tensor([[chars_idx[-1]]], device=device)
        for _ in range(length):
            logits, hidden = model(inp, hidden)
            logits = logits[0, -1] / temperature
            probs = torch.softmax(logits, dim=0)
            next_idx = torch.multinomial(probs, 1).item()
            generated.append(idx_to_char[next_idx])
            inp = torch.tensor([[next_idx]], device=device)

    return "".join(generated)

# Effects of different temperature values

for temp in [0.5, 0.8, 1.2]:
    print(f"\n{'='*60}")
    print(f"Temperature = {temp}")
    print(f"{'='*60}")
    print(generate(model, temperature=temp))

The significance of the temperature parameter: logits are divided by the temperature before the softmax, so temperature < 1 sharpens the distribution and makes output more conservative and "correct" but less varied, while temperature > 1 flattens it, increasing randomness and creativity at the risk of incoherent text. A temperature around 0.8 is often a reasonable balance between quality and diversity.
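The effect of temperature on a softmax can be seen on a toy distribution. The three logits below are hypothetical scores for three candidate characters, not values taken from the trained model.

```python
import math

def softmax_with_temperature(logits, temperature):
    """Convert logits to probabilities after dividing by the temperature."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)                                # for numerical stability
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

logits = [2.0, 1.0, 0.1]                           # hypothetical character scores
results = {}
for t in (0.5, 0.8, 1.2):
    results[t] = softmax_with_temperature(logits, t)
    print(t, [round(p, 3) for p in results[t]])
```

The probability of the top-scoring character shrinks as the temperature rises, so sampling spreads over more candidates.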

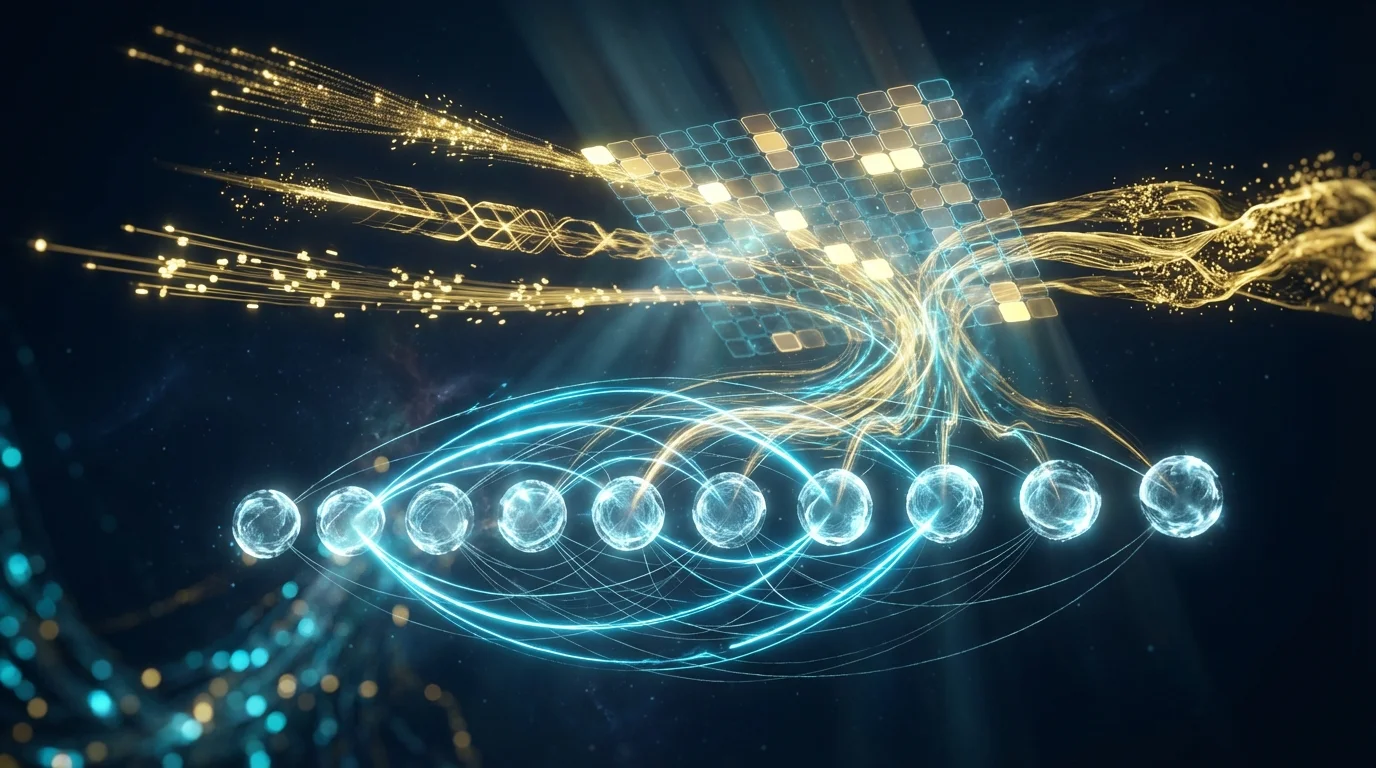

8. Hands-on Lab 2: LSTM Image Sequence Classification — Treating MNIST as Time Series (Google Colab)

RNNs can handle more than just text: any data that can be represented as a sequence is fair game. In this lab, we treat each 28×28 MNIST image as 28 time steps, with each step taking one row of 28 pixel values as input. The LSTM scans the image row by row, then classifies it from the final hidden state[13].

# ============================================================

# Hands-on Lab 2: LSTM Image Sequence Classification (MNIST as Sequence)

# Environment: Google Colab (Free GPU)

# Estimated runtime: ~5 minutes

# ============================================================

import torch

import torch.nn as nn

from torchvision import datasets, transforms

from torch.utils.data import DataLoader

import matplotlib.pyplot as plt

import numpy as np

# ------ 1. Load MNIST Dataset ------

transform = transforms.Compose([
    transforms.ToTensor(),
    transforms.Normalize((0.1307,), (0.3081,))
])

train_data = datasets.MNIST('./data', train=True, download=True, transform=transform)

test_data = datasets.MNIST('./data', train=False, transform=transform)

train_loader = DataLoader(train_data, batch_size=128, shuffle=True)

test_loader = DataLoader(test_data, batch_size=256, shuffle=False)

print(f"Training set: {len(train_data)} images, Test set: {len(test_data)} images")

# ------ 2. Visualization: MNIST as Sequence ------

fig, axes = plt.subplots(1, 3, figsize=(14, 4))

sample_img = train_data[0][0].squeeze().numpy()

# Original image

axes[0].imshow(sample_img, cmap='gray')

axes[0].set_title("Original 28×28 Image", fontsize=12)

# Unrolled as sequence, row by row

seq_view = sample_img.copy()

for i in range(0, 28, 4):
    axes[1].axhline(y=i, color='cyan', alpha=0.3, linewidth=0.5)

axes[1].imshow(seq_view, cmap='gray')
axes[1].set_title("LSTM Row-by-Row Scan (28 steps × 28 features)", fontsize=12)

for arrow_y in range(2, 26, 3):
    axes[1].annotate('→', xy=(26, arrow_y), fontsize=8, color='cyan', alpha=0.6)

# Pixel values for the first few rows

axes[2].plot(sample_img[:8].T, alpha=0.7)

axes[2].set_xlabel("Pixel Position (0-27)")

axes[2].set_ylabel("Pixel Value")

axes[2].set_title("Pixel Value Sequences for First 8 Rows", fontsize=12)

axes[2].legend([f"row {i}" for i in range(8)], fontsize=7, ncol=2)

plt.tight_layout()

plt.savefig("mnist_as_sequence.png", dpi=150, bbox_inches='tight')

plt.show()

# ------ 3. Define LSTM Classification Model ------

class ImageLSTM(nn.Module):
    """
    Treats a 28×28 image as a 28-step sequence, each step with 28-dim features.
    LSTM reads row by row, then uses the final hidden state for classification.
    """
    def __init__(self, input_size=28, hidden_size=128, num_layers=2,
                 num_classes=10, dropout=0.3, bidirectional=True):
        super().__init__()
        self.hidden_size = hidden_size
        self.num_layers = num_layers
        self.bidirectional = bidirectional
        self.num_directions = 2 if bidirectional else 1

        self.lstm = nn.LSTM(input_size, hidden_size, num_layers,
                            batch_first=True, dropout=dropout,
                            bidirectional=bidirectional)
        self.dropout = nn.Dropout(dropout)
        self.fc = nn.Linear(hidden_size * self.num_directions, num_classes)

    def forward(self, x):
        # x: (batch, 1, 28, 28) → (batch, 28, 28)
        x = x.squeeze(1)  # Remove channel dimension

        # LSTM processes sequence: 28 steps, 28-dim each
        out, (h_n, c_n) = self.lstm(x)

        # Classify from the top layer's final hidden state(s)
        if self.bidirectional:
            # h_n[-2]: forward direction after the last row;
            # h_n[-1]: backward direction after the first row
            last_hidden = torch.cat([h_n[-2], h_n[-1]], dim=1)
        else:
            last_hidden = h_n[-1]

        out = self.dropout(last_hidden)
        logits = self.fc(out)
        return logits

# ------ 4. Training ------

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

model = ImageLSTM().to(device)

optimizer = torch.optim.Adam(model.parameters(), lr=0.001)

criterion = nn.CrossEntropyLoss()

print(f"Model parameters: {sum(p.numel() for p in model.parameters()):,}")

print(f"Using device: {device}")

num_epochs = 10

train_losses, test_accs = [], []

for epoch in range(1, num_epochs + 1):
    model.train()
    total_loss = 0

    for images, labels in train_loader:
        images, labels = images.to(device), labels.to(device)
        logits = model(images)
        loss = criterion(logits, labels)

        optimizer.zero_grad()
        loss.backward()
        nn.utils.clip_grad_norm_(model.parameters(), 5.0)
        optimizer.step()

        total_loss += loss.item()

    avg_loss = total_loss / len(train_loader)
    train_losses.append(avg_loss)

    # Testing
    model.eval()
    correct, total = 0, 0
    with torch.no_grad():
        for images, labels in test_loader:
            images, labels = images.to(device), labels.to(device)
            preds = model(images).argmax(dim=1)
            correct += (preds == labels).sum().item()
            total += labels.size(0)

    acc = correct / total
    test_accs.append(acc)
    print(f"Epoch {epoch}/{num_epochs}, Loss: {avg_loss:.4f}, Test Acc: {acc:.4f}")

# ------ 5. Visualize Training Process ------

fig, (ax1, ax2) = plt.subplots(1, 2, figsize=(12, 4))

ax1.plot(train_losses, 'b-', linewidth=2)

ax1.set_xlabel("Epoch"); ax1.set_ylabel("Loss"); ax1.set_title("Training Loss")

ax1.grid(True, alpha=0.3)

ax2.plot([a*100 for a in test_accs], 'r-', linewidth=2)

ax2.set_xlabel("Epoch"); ax2.set_ylabel("Accuracy (%)"); ax2.set_title("Test Accuracy")

ax2.grid(True, alpha=0.3)

ax2.set_ylim([90, 100])

plt.tight_layout()

plt.savefig("lstm_mnist_training.png", dpi=150, bbox_inches='tight')

plt.show()

print(f"\nFinal test accuracy: {test_accs[-1]*100:.2f}%")

print("BiLSTM can still achieve ~98% accuracy treating images as sequences!")

# ------ 6. Visualize Hidden State Evolution ------

model.eval()

sample = test_data[0][0].unsqueeze(0).to(device)

# Extract the top layer's forward-direction hidden state at each time step.
# The whole sequence goes through in one call: feeding a bidirectional LSTM
# one row at a time would make its backward direction read rows in forward order.
with torch.no_grad():
    x = sample.squeeze(1)                                   # (1, 28, 28)
    out, _ = model.lstm(x)                                  # (1, 28, hidden_size * num_directions)
    h_states = out[0, :, :model.hidden_size].cpu().numpy()  # (28, hidden_size)

fig, (ax1, ax2) = plt.subplots(1, 2, figsize=(14, 5))

ax1.imshow(sample.cpu().squeeze(), cmap='gray')

ax1.set_title("Input Image", fontsize=12)

im = ax2.imshow(h_states.T[:32], aspect='auto', cmap='RdYlBu_r')

ax2.set_xlabel("Time Step (Row Scan)")

ax2.set_ylabel("Hidden Units (First 32)")

ax2.set_title("LSTM Hidden State Evolution Over Time Steps", fontsize=12)

plt.colorbar(im, ax=ax2)

plt.tight_layout()

plt.savefig("lstm_hidden_states.png", dpi=150, bbox_inches='tight')

plt.show()

Why does treating images as sequences make sense? The educational value of this experiment is threefold: (1) it shows that an LSTM can pick up spatial structure from a purely sequential view of the image; (2) the BiLSTM reaching roughly 98% test accuracy indicates that row-by-row scanning captures enough spatial information for this task; (3) the hidden state visualization reveals the internal dynamics of the LSTM as it processes a handwritten digit.

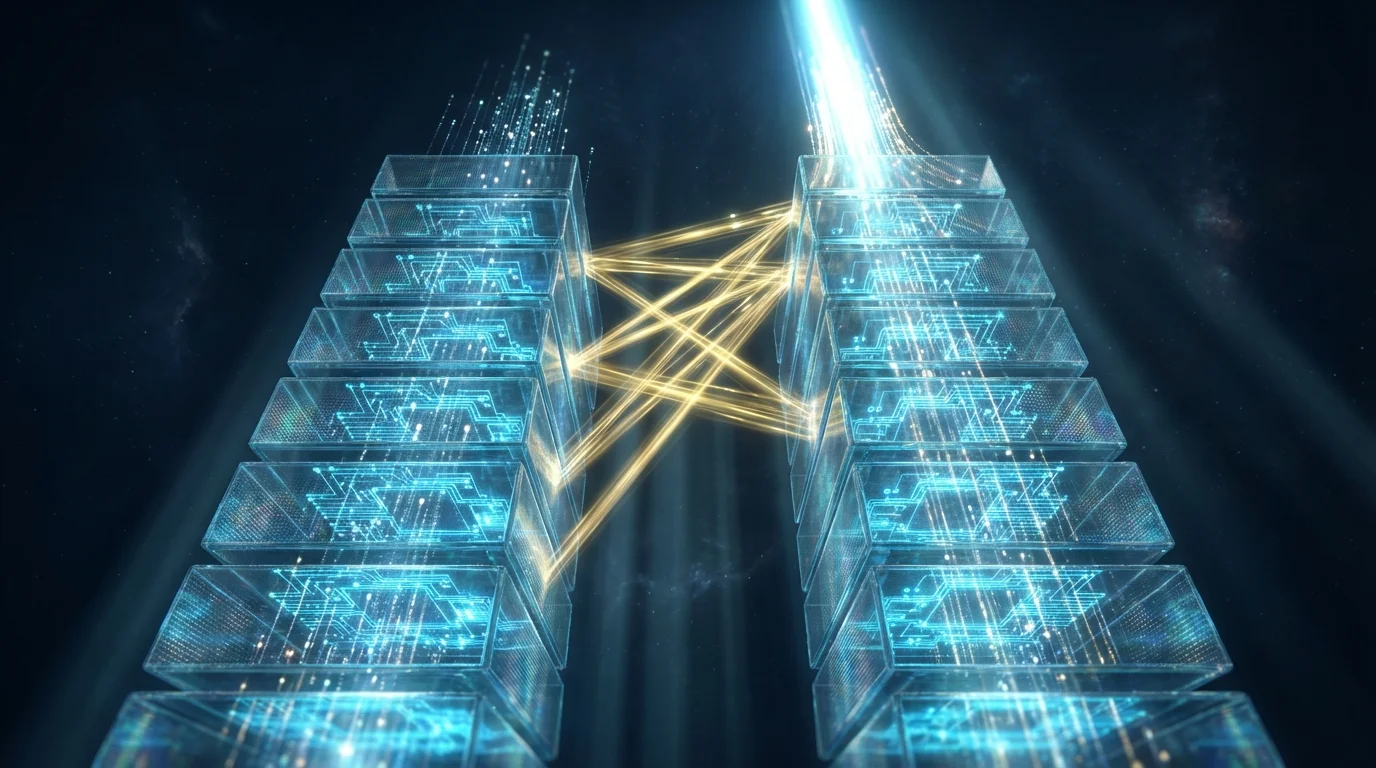

9. From RNN to Transformer: A Historical Relay

In 2017, Vaswani et al. published the landmark paper "Attention Is All You Need"[11], proposing the Transformer architecture based entirely on self-attention mechanisms, completely abandoning RNN's recurrent structure. Transformer's advantages include:

- Parallelization: RNN must process each time step sequentially; Transformer can process the entire sequence simultaneously

- Long-range dependencies: Self-attention connects any two positions in O(1) steps, compared to O(n) sequential steps for RNN

- Scalability: Transformer performance scales reliably with model size and data volume

However, RNN has not been entirely replaced. RNN still holds advantages in the following scenarios:

| Scenario | RNN Advantage | Transformer Advantage |
|---|---|---|
| Real-time streaming | Naturally suited for step-by-step input | Requires complete sequence or special design |
| Very long sequences (>10K tokens) | O(1) memory per step | Self-attention requires O(n²) memory |
| Embedded / edge devices | Small model, fast inference | Typically requires large parameter counts |
| Causal sequence modeling | Natural causal structure | Requires causal masking |
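The last two rows of the table can be illustrated with a single-head self-attention sketch in NumPy. It is a simplified illustration with random, untrained projection matrices; the function name and sizes are assumptions. The (n, n) score matrix is where the quadratic memory cost comes from, and the mask is what the Transformer needs in order to recover the causal ordering that an RNN has by construction.

```python
import numpy as np

def causal_self_attention(X, W_q, W_k, W_v):
    """Single-head scaled dot-product self-attention with a causal mask.

    X: (n, d_model). Returns the outputs (n, d_k) and the (n, n) weight matrix.
    """
    Q, K, V = X @ W_q, X @ W_k, X @ W_v
    scores = Q @ K.T / np.sqrt(K.shape[1])             # (n, n): the O(n^2) term
    future = np.triu(np.ones_like(scores, dtype=bool), k=1)
    scores = np.where(future, -np.inf, scores)         # hide later positions
    scores -= scores.max(axis=1, keepdims=True)        # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=1, keepdims=True)
    return weights @ V, weights

rng = np.random.default_rng(0)
n, d_model, d_k = 5, 8, 4                              # illustrative sizes
X = rng.normal(size=(n, d_model))
W_q, W_k, W_v = (rng.normal(size=(d_model, d_k)) for _ in range(3))

out, weights = causal_self_attention(X, W_q, W_k, W_v)
print(weights.round(2))   # upper triangle is zero: position t ignores positions > t
```

All n rows are computed in one matrix product, which is the parallelism an RNN lacks, at the price of storing n × n attention weights.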

10. Conclusion: The Power of Memory

From Elman's Simple Recurrent Network[1] to Hochreiter and Schmidhuber's LSTM[2], to Bahdanau's attention mechanism[7], the history of RNN development is one of the most compelling chapters in deep learning. Each breakthrough originated from a deeper understanding of the fundamental problem of "memory":

- RNN answered "how to make networks remember the past"

- LSTM answered "how to selectively remember and forget"

- Attention mechanism answered "how to dynamically focus on relevant information"

- Transformer answered "how to let all positions communicate equally"

Understanding RNN is not just historical archaeology — it is the foundation for understanding modern deep learning. Many core concepts in Transformer (Encoder-Decoder, attention, sequence modeling) originate from the RNN research tradition. Master RNN, and you hold the key to the world of GPT, BERT, and LLMs[13].