- BCG reports that approximately 30% of new code at Google and Microsoft is now AI-generated, and McKinsey's tracking of 600+ organizations found that high-performing teams achieved 16-30% productivity gains and 31-45% software quality improvements — but only in enterprises that simultaneously reformed processes and organization

- MIT Sloan Management Review reveals the hidden costs of AI-assisted development: duplicate code blocks increased eightfold, code churn doubled, and rapid output actually accelerated technical debt accumulation

- Brooks' 1987 framework of "essential complexity vs. accidental complexity" provides the most precise analytical lens for the current software engineering transformation: generative AI is dramatically eliminating accidental complexity, but essential complexity — requirements understanding, architecture judgment, domain modeling — still requires deep human expertise

- Our practice shows that systematically structuring team knowledge into machine-readable formats (Markdown documents, AI skill definitions) lets AI tools apply team best practices on every run, forming an "organizational intelligence infrastructure" that can be improved iteratively

1. Software Engineering Is Undergoing a Methodology-Level Transformation

Software engineering as a discipline has undergone several fundamental methodological shifts over the past half century. From Dijkstra's 1968 "Go To Statement Considered Harmful"[1], which launched the structured programming revolution, through object-oriented programming, agile development, and cloud-native architectures, each transformation redefined "how good software should be built."

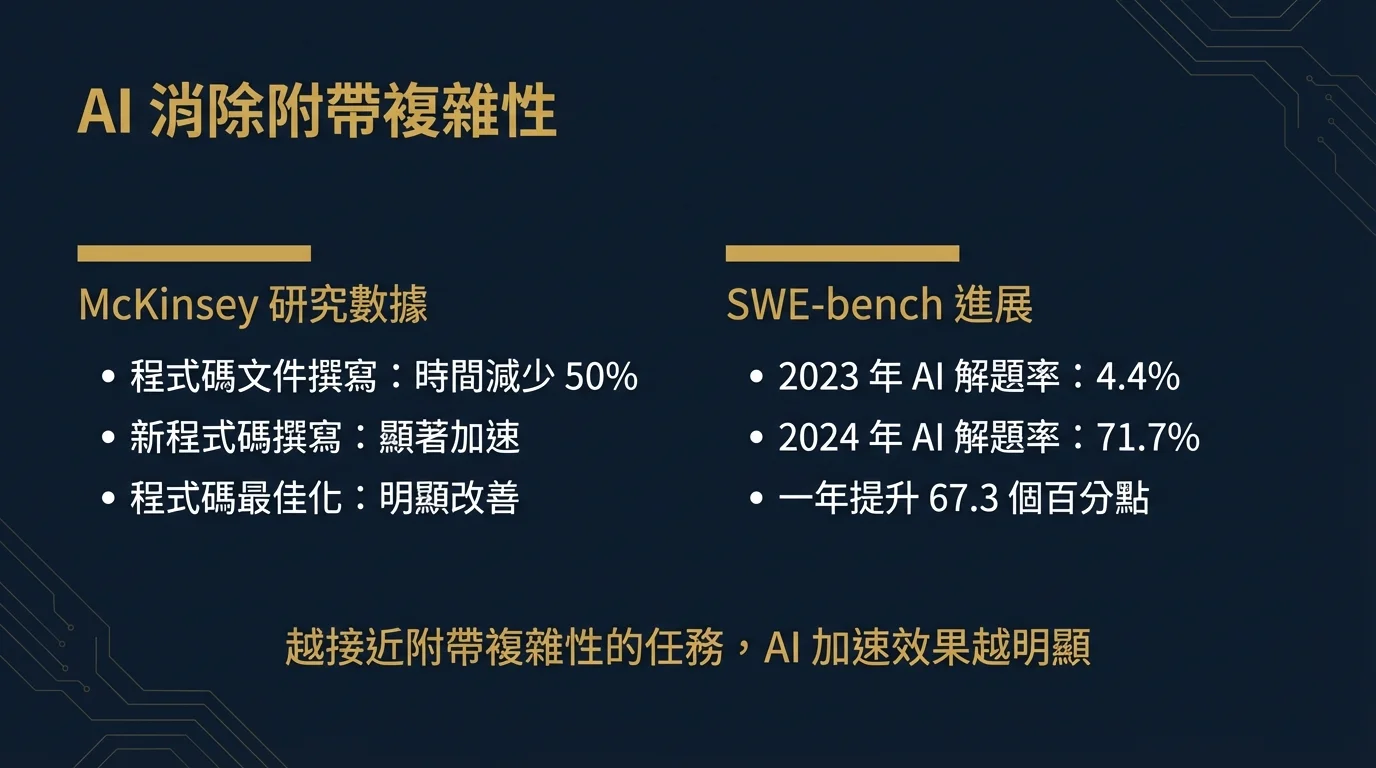

In 2025-2026, we stand at the tipping point of yet another methodological transformation. BCG's research[2] indicates that approximately 30% of new code at Google and Microsoft is already AI-generated, and existing AI-assisted coding tools have delivered 30-50% development productivity improvements. The Stanford AI Index 2025 report[3] recorded an astonishing advancement: on SWE-bench (a benchmark based on real GitHub software engineering problems), AI system solve rates leaped from 4.4% in 2023 to 71.7% in 2024 — a 67.3 percentage point increase within a single year.

However, these numbers tell only one side of the story. The truly important question is not "can AI write code" but rather: when AI takes on an ever-increasing share of code generation work, how should software engineering methodology, team structures, and architecture decisions adjust accordingly?

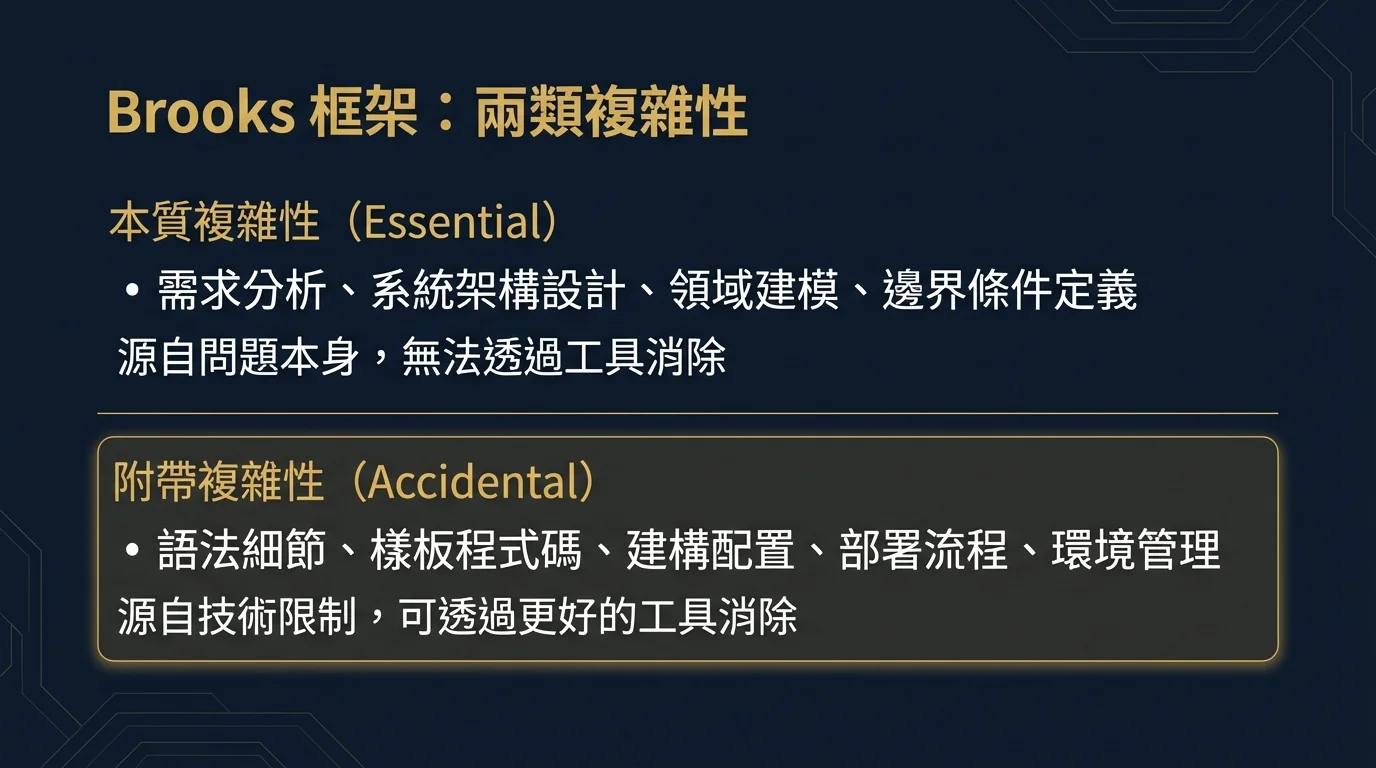

2. The Contemporary Relevance of Brooks' Framework: Essential Complexity and Accidental Complexity

To systematically understand AI's impact on software engineering, Frederick Brooks' 1987 paper "No Silver Bullet"[4] remains the most explanatory analytical framework. Brooks distinguished two types of complexity in software development:

- Essential Complexity: Arising from the problem domain itself — requirements analysis, system architecture design, domain modeling, boundary condition definition. This complexity cannot be eliminated through better tools because it is an inherent characteristic of the problem itself

- Accidental Complexity: Arising from tools, processes, and technical limitations — syntax details, boilerplate code, build configuration, deployment workflows, environment management. This complexity is a product of current technical conditions and can theoretically be eliminated through better tools

Viewed through this framework, generative AI's value proposition becomes clear: it is systematically eliminating accidental complexity in software development. Code generation, documentation writing, test writing, boilerplate code — these tasks that historically consumed vast amounts of developer time are essentially in the realm of accidental complexity. McKinsey's research[5] confirmed this: generative AI's time savings are most significant in code documentation writing (reduced by half), followed by new code writing, and then code optimization. The closer a task is to "accidental complexity," the more pronounced AI's acceleration effect.

But Brooks' framework simultaneously issues a warning: essential complexity does not disappear with tool improvements. Whether requirements are correctly understood, whether the architecture fits the problem scale, whether system boundaries are properly defined — these judgments still require deep domain expertise and engineering experience.

3. The Productivity Paradox: Hidden Costs Behind Speed Improvements

A significant 2025 study from MIT Sloan Management Review[6] reveals a phenomenon that all technology decision-makers should pay attention to: the productivity gains from AI-assisted development come with costs.

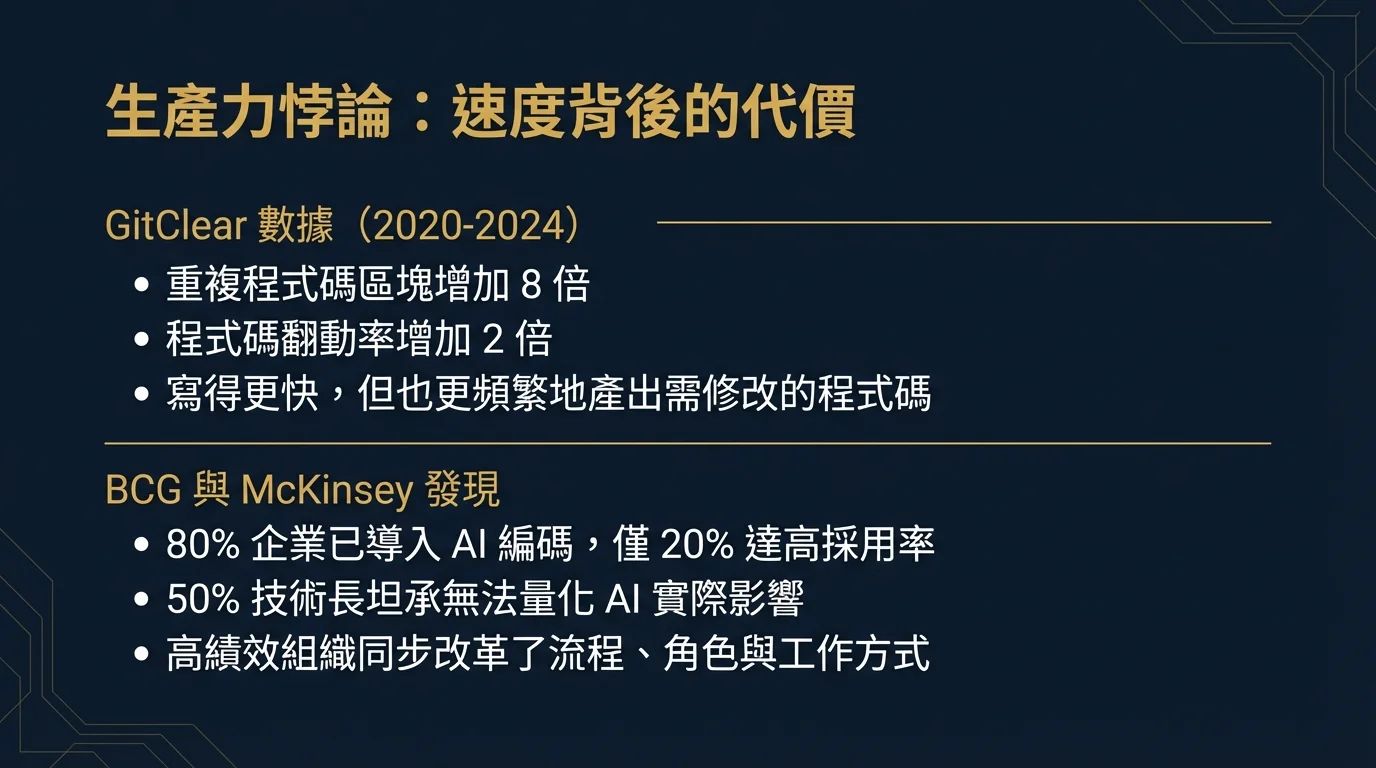

The study cited GitClear's analysis of millions of lines of code from 2020 to 2024, uncovering two alarming trends: duplicate code blocks increased eightfold, and code churn (the proportion of code that is modified or deleted shortly after being written) doubled. In other words, developers are indeed writing faster, but they are also more frequently writing code that needs to be modified or discarded.
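To make the churn metric concrete, here is a minimal sketch of how it could be computed from per-line history. The `LineRecord` type, the sample data, and the 14-day window are illustrative assumptions, not GitClear's actual methodology.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class LineRecord:
    """One added line of code (hypothetical record, e.g. derived from git history)."""
    added_on: date
    revised_on: Optional[date] = None  # date the line was modified or deleted, if ever


def churn_rate(lines: list[LineRecord], window_days: int = 14) -> float:
    """Fraction of added lines that were modified or deleted within `window_days`."""
    if not lines:
        return 0.0
    window = timedelta(days=window_days)
    churned = sum(
        1
        for line in lines
        if line.revised_on is not None and line.revised_on - line.added_on <= window
    )
    return churned / len(lines)


# Illustrative data only; the window length is an assumed threshold.
history = [
    LineRecord(date(2024, 3, 1), date(2024, 3, 5)),   # revised after 4 days: churn
    LineRecord(date(2024, 3, 1), date(2024, 6, 1)),   # revised after 3 months: not churn
    LineRecord(date(2024, 3, 2)),                     # never revised
    LineRecord(date(2024, 3, 3), date(2024, 3, 17)),  # revised after exactly 14 days: churn
]
print(churn_rate(history))  # 0.5
```

A doubling of this ratio means that twice as large a share of newly written lines is reworked or thrown away within the window.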

BCG's research[7] further found that while over 80% of enterprises have integrated generative AI into their coding workflows, only 20% have reached developer adoption rates above 75%, and 50% of CIOs admit they cannot quantify AI's actual impact on engineering effectiveness. More critically, outdated system architectures and immature DevOps practices severely diminish AI tool effectiveness. In other words, AI is no silver bullet; it delivers value only in conjunction with modern engineering practices.

McKinsey's research tracking over 600 organizations[8] presents a more complete picture: the highest-performing AI adopters did achieve 16-30% team productivity improvements and 31-45% software quality improvements. What these organizations share is that they did not just adopt tools; they also comprehensively reformed their processes, role definitions, and ways of working at the same time. Organizations that adopted tools without changing methodology saw minimal improvement.

4. Structural Shifts in R&D Team Roles

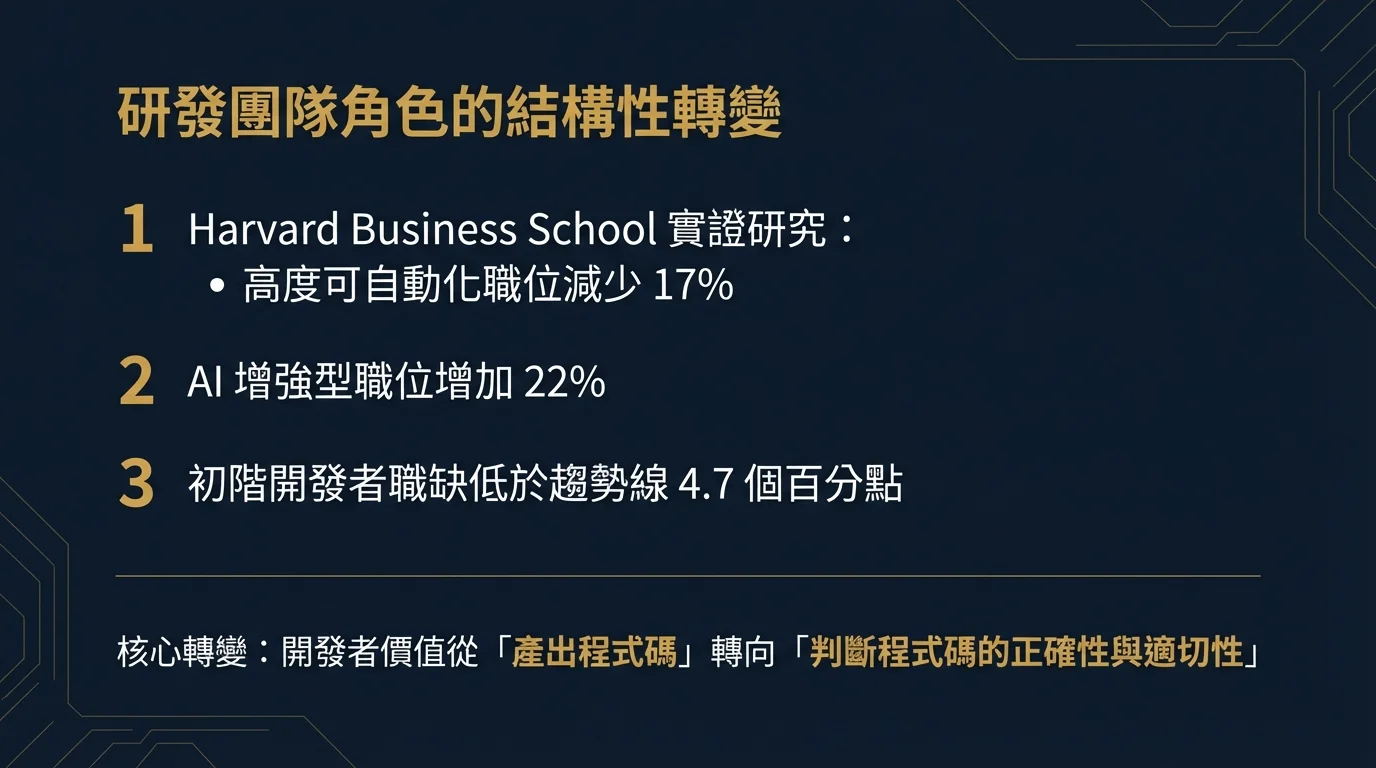

An empirical study from Harvard Business School[9] reveals AI's impact on the software development workforce structure through labor market data: positions involving highly automatable structural cognitive tasks decreased by 17%, while positions where AI serves as an augmentation tool actually increased by 22%. Notably, entry-level software developer job postings are 4.7 percentage points below trend — this is not caused by layoffs but by enterprises reducing new hire recruitment.

These data point to a structural shift: the core value of developers is shifting from "producing code" to "judging the correctness and appropriateness of code." A 2025 survey paper in ACM Transactions on Software Engineering and Methodology[10] positions this shift as a discipline-level redefinition of software engineering — from requirements analysis and code generation to testing and maintenance, AI is permeating the entire development lifecycle, and the human role is migrating toward higher-level design judgment and quality assurance.

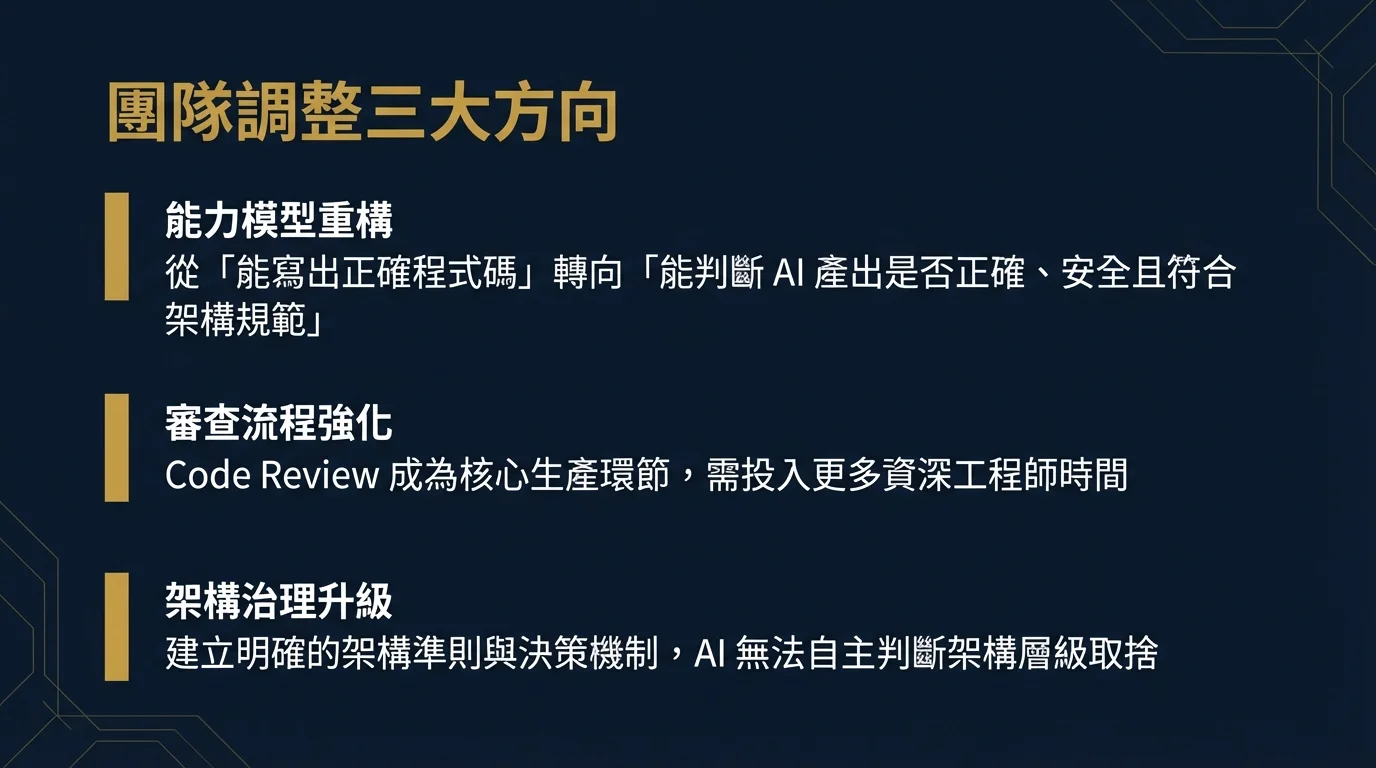

For R&D team managers, this means three specific adjustment areas:

- Capability Model Restructuring: Recruitment and training priorities need to shift from "ability to write correct code" to "ability to judge whether AI-generated code is correct, secure, and compliant with architectural standards"

- Review Process Strengthening: When a large volume of code is AI-generated, Code Review is no longer optional but a core production activity that requires more time from senior engineers

- Architecture Governance Upgrade: AI can rapidly produce code but cannot autonomously make architecture-level trade-offs — this requires clear architectural guidelines and decision-making mechanisms

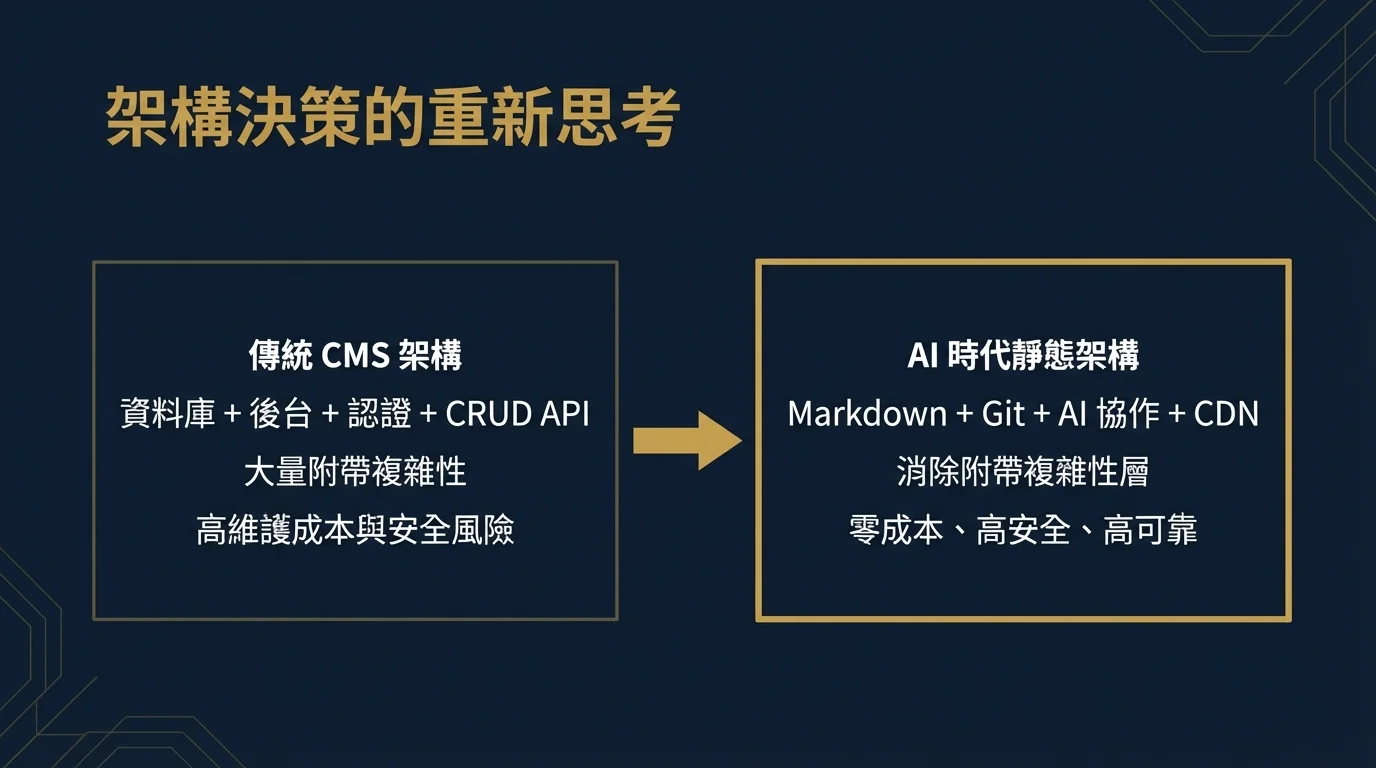

5. Rethinking Architecture Decisions: Eliminating Unnecessary Accidental Complexity

AI's impact on software development should not remain at the "faster code writing" level. The deeper insight is that it forces us to reexamine many architecture decisions previously taken for granted.

Take content management as an example. Traditional website content publishing almost invariably relies on a full CMS backend system: database design, user authentication, CRUD APIs, admin interfaces, image upload and storage, server maintenance. Building and maintaining these components constitutes significant engineering overhead — and they all fall under what Brooks called "accidental complexity": they are unrelated to the essential goal of "publishing high-quality content" yet consume substantial development and operational resources.

In the context of AI-assisted development, a fundamentally different architecture becomes possible: a static site generator with structured Markdown files, using AI collaboration for content writing, metadata generation, and image creation, with version control (Git) managing content changes, and CDN (such as Cloudflare Pages) handling automatic deployment. This architecture eliminates all accidental complexity layers including databases, backends, and authentication systems, while gaining higher security (no backend to attack), lower operational costs (static resources at near-zero cost), and better reliability (no database downtime risk).

This is not cutting corners but a rational architecture decision in the AI era. When generative AI can efficiently handle content formatting, metadata generation, and other structural work, backend systems built specifically for these tasks become unnecessary accidental complexity. Just as the advent of compilers made hand-writing assembly language unnecessary for most software, AI's maturation renders many previously "necessary" architecture layers obsolete.
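The static pipeline described above can be sketched in a few lines. This is an illustration under stated assumptions, not a real static site generator: the front-matter format is simplified to flat `key: value` lines between two `---` markers, the body is treated as plain paragraphs instead of full Markdown, and `parse_post` and `render_page` are hypothetical names.

```python
import html


def parse_post(text: str) -> tuple[dict[str, str], str]:
    """Split a post into front-matter metadata and body.

    Assumes simplified front matter: flat `key: value` lines between two `---` lines.
    """
    lines = text.strip().splitlines()
    if not lines or lines[0].strip() != "---":
        return {}, text.strip()
    # Find the closing `---`; if it is missing, treat the whole text as body.
    end = next((i for i in range(1, len(lines)) if lines[i].strip() == "---"), None)
    if end is None:
        return {}, text.strip()
    meta: dict[str, str] = {}
    for line in lines[1:end]:
        if ":" in line:
            key, value = line.split(":", 1)
            meta[key.strip()] = value.strip()
    body = "\n".join(lines[end + 1:]).strip()
    return meta, body


def render_page(meta: dict[str, str], body: str) -> str:
    """Render metadata and body into a minimal static HTML page (paragraphs only)."""
    title = html.escape(meta.get("title", "Untitled"))
    paragraphs = "\n".join(
        f"<p>{html.escape(p.strip())}</p>" for p in body.split("\n\n") if p.strip()
    )
    return (
        f"<html><head><title>{title}</title></head>\n"
        f"<body>\n<h1>{title}</h1>\n{paragraphs}\n</body></html>"
    )


# Illustrative post; in practice this would be a Markdown file tracked in Git.
post = """---
title: AI & Architecture
date: 2025-06-01
---
First paragraph.

Second paragraph.
"""
meta, body = parse_post(post)
page = render_page(meta, body)
print(page)
```

Because the output is a plain HTML string, publishing reduces to writing files to a directory and letting the CDN deploy them; no database or authentication layer is involved.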

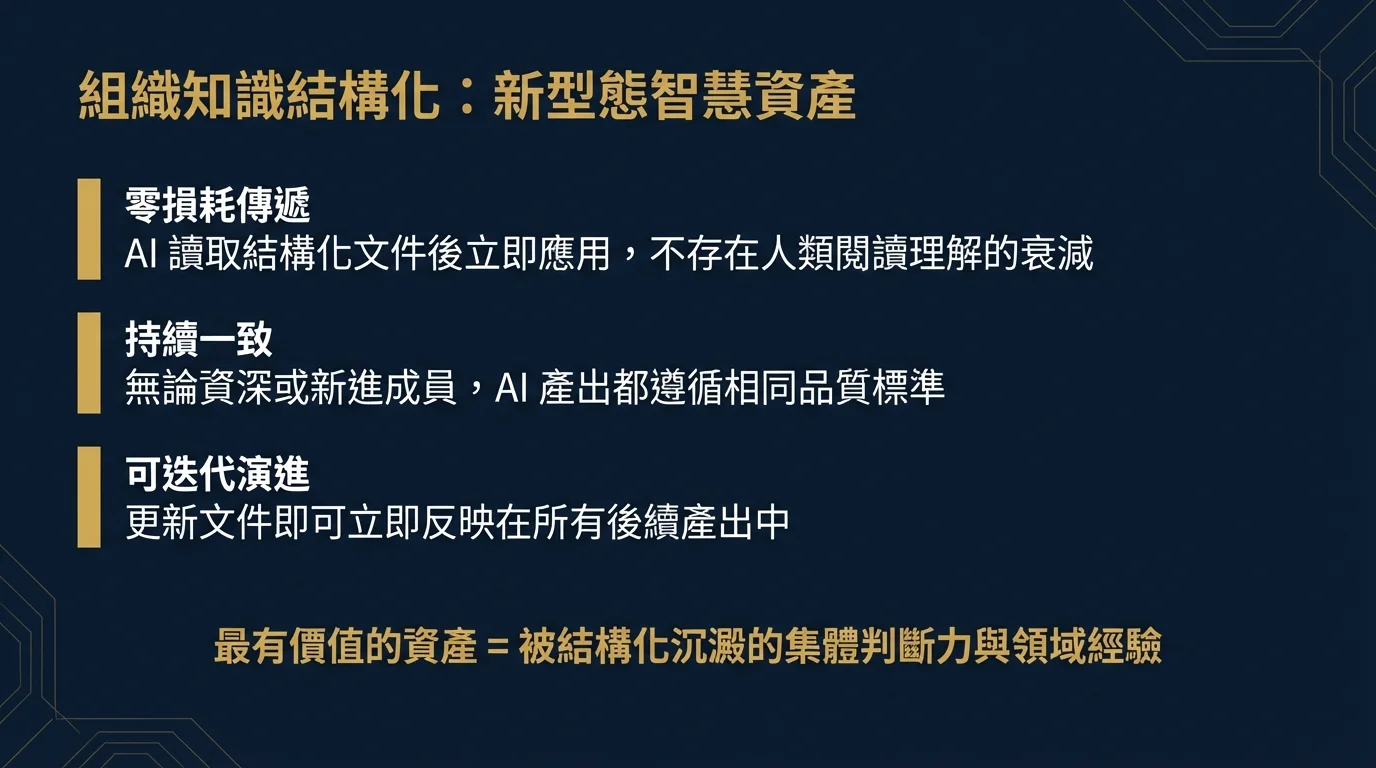

6. Structured Codification of Organizational Knowledge: A New Type of Intellectual Asset

Another important trend we have observed in practice is a fundamental change in how teams manage enterprise knowledge.

Traditional software engineering knowledge management relies on documentation (wikis, Confluence), code comments, oral tradition, and experience transfer through Code Reviews. The common limitation of these methods is that the knowledge consumer is human, and human reading, comprehension, and application all involve loss. A thorough coding standards document might be written and then never read again, and the same types of issues repeatedly raised in Code Reviews illustrate the inefficiency of knowledge transfer.

In AI-assisted development workflows, a new possibility emerges: structuring organizational knowledge into formats that AI can directly consume.

Specifically, our team systematically organizes development standards, architecture decision records, common pitfalls, quality standards, and other experience into structured Markdown documents or AI skill definitions (Skills). When AI tools execute tasks, these documents are automatically loaded as context, enabling AI to follow team best practices in every code generation — without relying on developers to remember or look up documentation.

This is essentially a new type of "organizational intelligence infrastructure":

- Zero-Loss Transfer: AI reads structured documents and immediately applies them, without the decay inherent in human reading comprehension

- Consistent Quality: Whether a senior engineer or a new team member uses AI tools, the output follows the same quality standards

- Iterative Evolution: Every time a new best practice or pitfall is discovered, updating the document is immediately reflected in all subsequent outputs
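The context-loading step can be sketched as follows. How a given AI tool actually ingests such documents varies and is not specified here; the `build_context` function, the file names, and the character budget are assumptions made for illustration.

```python
import tempfile
from pathlib import Path


def build_context(knowledge_dir: Path, max_chars: int = 20_000) -> str:
    """Concatenate Markdown knowledge files into one context string for an AI tool."""
    sections: list[str] = []
    total = 0
    # Sorted order keeps the context deterministic across runs.
    for path in sorted(knowledge_dir.glob("*.md")):
        text = path.read_text(encoding="utf-8").strip()
        section = f"## Source: {path.name}\n\n{text}"
        if total + len(section) > max_chars:
            break  # stay within an assumed context budget
        sections.append(section)
        total += len(section)
    return "\n\n".join(sections)


# Demo with temporary files standing in for a team's knowledge directory.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "01-coding-standards.md").write_text("Use type hints.", encoding="utf-8")
    (root / "02-pitfalls.md").write_text("Never log secrets.", encoding="utf-8")
    context = build_context(root)

print(context)
```

Updating a file in the knowledge directory changes the context on the next run, which is the mechanism behind the "iterative evolution" property listed above.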

Taking this concept deeper: in the AI era, an R&D team's most valuable asset is not just its members' technical capabilities but the collective judgment and domain experience that has been structurally codified. People move on, memories fade, but properly structured knowledge documents enable AI tools to consistently perform at the team's highest level.

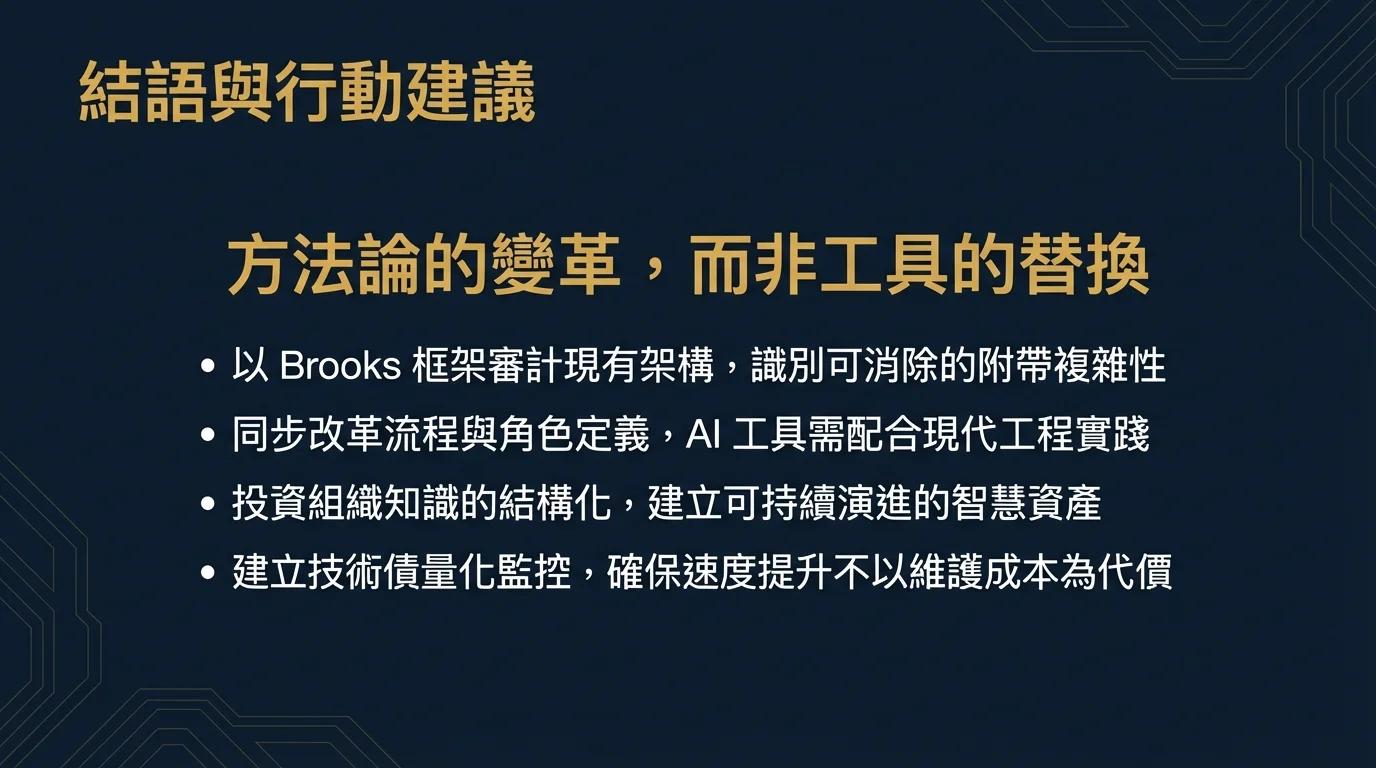

7. Systematic Recommendations for Technology Decision-Makers

Based on the above analysis and our practical experience, we offer the following recommendations to technology decision-makers planning R&D strategies for the AI era:

7.1 Audit Existing Architecture Using Brooks' Framework

Systematically review the existing technology architecture, distinguishing essential complexity from accidental complexity. For areas with high accidental complexity (boilerplate code, build configuration, content management backends, repetitive CRUD layers), evaluate whether they can be eliminated through AI assistance or architecture simplification. MIT Sloan's research[6] reminds us that in brownfield (legacy system) environments, AI-generated code may exacerbate existing problems, so simplifying architecture should precede AI tool adoption.

7.2 Simultaneously Reform Processes and Role Definitions

McKinsey's data[8] clearly shows that organizations that only adopt tools without changing their ways of working see almost no improvement. AI tool adoption should be accompanied by strengthened Code Review processes, adjusted developer capability models, and established architecture governance mechanisms. Senior engineers' time should be reallocated from "writing code" to "reviewing code" and "defining architectural guidelines."

7.3 Invest in Knowledge Structuring

Systematically convert the team's coding standards, Architecture Decision Records (ADRs), common error patterns, and quality standards into structured documents. This is one of the highest-ROI engineering investments of the AI era: it not only improves AI tool output quality but also builds an intellectual asset for the organization that can keep evolving.

7.4 Establish Quantitative Technical Debt Monitoring

Given that AI-assisted development can accelerate technical debt accumulation[6], establishing quantitative code quality monitoring mechanisms is crucial. Track key metrics such as duplication rate, churn rate, and complexity indicators to ensure that development speed improvements do not come at the expense of long-term maintenance costs.
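As one possible starting point for such monitoring, the sketch below estimates a duplication rate by counting repeated windows of consecutive lines. It is a deliberately naive, assumption-laden illustration: the three-line block size, the whitespace-only normalization, and the `duplication_rate` function are all hypothetical choices, and production tools use more robust clone detection.

```python
from collections import Counter


def duplication_rate(source: str, block_size: int = 3) -> float:
    """Fraction of `block_size`-line windows whose content occurs more than once."""
    # Normalize: strip surrounding whitespace and drop blank lines.
    lines = [ln.strip() for ln in source.splitlines() if ln.strip()]
    if len(lines) < block_size:
        return 0.0
    blocks = [
        tuple(lines[i:i + block_size]) for i in range(len(lines) - block_size + 1)
    ]
    counts = Counter(blocks)
    duplicated = sum(1 for block in blocks if counts[block] > 1)
    return duplicated / len(blocks)


# Illustrative snippet with one repeated three-line block.
sample = """
a = load()
b = clean(a)
save(b)
x = 1
a = load()
b = clean(a)
save(b)
"""
print(duplication_rate(sample))  # 0.4
```

Tracked per release alongside churn and complexity, a rising value is an early signal that generated code is being pasted in rather than factored into shared functions.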

8. Conclusion: A Methodological Transformation, Not Just a Tool Replacement

Returning to Brooks' insight from nearly four decades ago: the fundamental challenge of software engineering lies not in tools but in the complexity of the problem itself. Generative AI is a powerful tool that is eliminating accidental complexity at an unprecedented pace, but essential complexity — understanding requirements, designing architecture, making correct engineering judgments — remains an irreplaceable domain for humans.

What truly matters is not "whether to use AI for coding" but whether enterprises can use AI's assistance to reexamine architecture decisions, redefine team roles, and rethink knowledge management approaches. Organizations that view AI merely as "a faster typist" will ultimately be surpassed by those that understand this is a methodological transformation.

If your R&D team is evaluating how to systematically integrate AI tools, restructure development processes, or build organizational knowledge infrastructure, our team has end-to-end experience, from academic research to engineering implementation, and welcomes an in-depth technical dialogue.