- INT8 quantization can compress model size to 25% of the original while losing only 0.3-1.2% accuracy

- The combination of structured pruning and quantization enables deploying an image classification model with 93% accuracy on a Cortex-M4 with 256KB Flash

- Knowledge distillation from a ResNet-50 teacher model to an MCUNet student model retained 94.7% of the teacher model's accuracy on an ImageNet subset

- TensorFlow Lite Micro achieves 23ms/frame inference latency on Cortex-M7 for keyword spotting tasks, meeting real-time processing requirements

1. Definition and Industry Significance of TinyML

TinyML -- deploying machine learning models on microcontrollers (MCUs) that consume only a few milliwatts of power -- is redefining the boundaries of edge intelligence. Warden and Situnayake defined TinyML in their groundbreaking book[1] as "the technology and methods for performing machine learning inference on ultra-low-power devices," with the core goal of enabling the billions of deployed embedded devices -- from industrial sensors to wearables -- to make local intelligent decisions without depending on cloud connectivity.

The industry significance of this goal is substantial. According to ARM estimates, over 30 billion MCUs ship globally each year, but fewer than 1% of them have AI inference capabilities. Raising this proportion significantly would catalyze an entirely new ecosystem of "ubiquitous edge intelligence": from predictive maintenance and anomaly detection to voice wake-up and gesture recognition, countless application scenarios that were previously out of reach because "you need a network connection to use AI" would be unlocked.

However, the engineering challenges facing TinyML are formidable. A typical MCU -- such as an ARM Cortex-M4 part -- has only 256KB of Flash memory and 64KB of SRAM, with clock frequencies between 80 and 168 MHz. By comparison, a standard ResNet-50 model requires over 97MB of storage and roughly 3.8 GFLOPs of computation per inference. How can deep learning models be compressed by a factor of several hundred while maintaining acceptable inference quality? This is the core question that this technical report aims to answer.
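To make the "several hundred times" figure concrete, the following back-of-the-envelope sketch divides the ResNet-50 storage footprint by the Flash budget quoted above. It assumes binary units (1MB = 1024KB) and optimistically treats the whole Flash as available for weights; the function name is illustrative only.

```python
# Back-of-the-envelope compression target, using the figures quoted in the text.
RESNET50_BYTES = 97 * 1024 * 1024  # "over 97MB" of FP32 weights (binary units assumed)
FLASH_BYTES = 256 * 1024           # Cortex-M4 example: 256KB of Flash


def required_compression(model_bytes: int, budget_bytes: int) -> float:
    """Return how many times smaller the model must become to fit the budget."""
    return model_bytes / budget_bytes


flash_ratio = required_compression(RESNET50_BYTES, FLASH_BYTES)
print(f"Weights must shrink by at least {flash_ratio:.0f}x to fit in Flash")
```

In practice the required ratio is higher still, because application code shares the same Flash and activations must additionally fit in 64KB of SRAM.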

2. The Model Compression Trilogy: Pruning, Quantization, Distillation

2.1 Structured Pruning: Removing Redundant Neurons

Han et al. proposed a three-stage model compression pipeline in their landmark "Deep Compression" paper[2]: pruning, quantization, and Huffman coding, compressing AlexNet by 35x and VGG-16 by 49x with virtually no accuracy loss. This work laid the technical foundation for the entire model compression field.

In TinyML practice, we primarily employ "structured pruning" -- removing entire unimportant convolutional filters or fully connected layer neurons -- rather than "unstructured pruning" (removing individual weights). The reason is that the sparse matrices produced by unstructured pruning are difficult to efficiently exploit on MCUs -- MCUs lack specialized hardware for sparse computation, and the additional memory overhead of sparse indices can actually be counterproductive. Structured pruning directly reduces the dimensionality of the computational graph and delivers tangible speedups on any hardware.

Our pruning strategy is based on L1-norm importance scoring: we compute the L1 norm of each convolutional filter's weights and remove the filters with the smallest norms, treating them as the least important. On MobileNetV2, we found that 40% of filters can be removed with only a 1.8% drop in accuracy, and subsequent fine-tuning narrows that loss to within 0.6%.
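The L1-norm scoring step can be sketched in a few lines of plain Python. This is a minimal illustration rather than our production code: filters are represented as nested lists, and `select_filters_to_keep` and the toy weights are hypothetical.

```python
from typing import List

# One convolutional filter, laid out as [in_channels][kernel_h][kernel_w].
Filter = List[List[List[float]]]


def l1_norm(filt: Filter) -> float:
    """Sum of absolute weight values in a single filter."""
    return sum(abs(w) for channel in filt for row in channel for w in row)


def select_filters_to_keep(filters: List[Filter], prune_ratio: float) -> List[int]:
    """Return the sorted indices of filters that survive pruning.

    The filters with the smallest L1 norms are dropped first.
    """
    n_prune = int(len(filters) * prune_ratio)
    ranked = sorted(range(len(filters)), key=lambda i: l1_norm(filters[i]))
    return sorted(ranked[n_prune:])


# Toy layer: five 1x1 filters with a single input channel.
toy_filters = [[[[0.9]]], [[[-0.05]]], [[[0.4]]], [[[0.01]]], [[[-0.7]]]]
kept = select_filters_to_keep(toy_filters, prune_ratio=0.4)
print("Filters kept:", kept)
```

Note that removing a filter also removes the matching input channel from the following layer, which is where the actual reduction in computation comes from.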

2.2 Quantization: From Floating-Point to Integer

Quantization is the most critical compression technique in TinyML. Jacob et al.'s research published at CVPR 2018[4] proposed a complete quantization scheme that converts neural network weights and activations from 32-bit floating-point (FP32) to 8-bit integers (INT8), enabling inference to be performed entirely using integer arithmetic. This technique provides three benefits: model size reduced to 25% of the original, inference speed improved by 2-4x (leveraging the MCU's integer arithmetic units), and power consumption reduced by approximately 30%.
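The core of this scheme is an affine mapping between real values and integers, r = scale * (q - zero_point). The sketch below illustrates that mapping for a signed INT8 range; the function names and the example range are assumptions for illustration, not the exact implementation of any particular framework. Storing one byte per weight instead of four is what yields the 25% size figure.

```python
def quant_params(x_min: float, x_max: float, qmin: int = -128, qmax: int = 127):
    """Derive (scale, zero_point) so that [x_min, x_max] maps onto [qmin, qmax]."""
    # Widen the range to include 0.0 so that zero is exactly representable.
    x_min = min(x_min, 0.0)
    x_max = max(x_max, 0.0)
    scale = (x_max - x_min) / (qmax - qmin) or 1.0
    zero_point = int(round(qmin - x_min / scale))
    return scale, max(qmin, min(qmax, zero_point))


def quantize(x: float, scale: float, zero_point: int,
             qmin: int = -128, qmax: int = 127) -> int:
    """Map a real value to the nearest integer level, clamped to the INT8 range."""
    q = int(round(x / scale)) + zero_point
    return max(qmin, min(qmax, q))


def dequantize(q: int, scale: float, zero_point: int) -> float:
    """Recover the real value represented by an integer level."""
    return scale * (q - zero_point)


scale, zero_point = quant_params(-0.5, 1.5)
for x in (-0.5, 0.0, 0.3, 1.5):
    q = quantize(x, scale, zero_point)
    print(f"{x:+.3f} -> q={q:4d} -> {dequantize(q, scale, zero_point):+.5f}")
```

Within the calibrated range, the round-trip error of each value is bounded by half a quantization step (scale / 2).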

In practice, we tested two quantization strategies. "Post-Training Quantization" (PTQ) is the simplest method -- after model training is complete, a small calibration dataset (typically 100-500 samples) is used to statistically characterize the activation value distribution of each layer and determine quantization parameters. PTQ's advantage is that it requires no retraining, but on certain models it can cause 2-3% accuracy loss.
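The calibration step can be pictured as an observer that tracks the activation range of one layer across the calibration samples. The sketch below uses a plain min/max observer, which is one common choice; the class name and the toy activation values are hypothetical, and real toolchains may use percentile or histogram-based ranges instead.

```python
class MinMaxObserver:
    """Tracks the running min/max of one layer's activations during calibration."""

    def __init__(self):
        self.lo = float("inf")
        self.hi = float("-inf")
        self.samples_seen = 0

    def update(self, activations):
        """Fold the activations produced by one calibration sample into the range."""
        self.lo = min(self.lo, min(activations))
        self.hi = max(self.hi, max(activations))
        self.samples_seen += 1

    def scale_and_zero_point(self, qmin=-128, qmax=127):
        """Turn the observed range into INT8 quantization parameters."""
        if self.samples_seen == 0:
            raise ValueError("no calibration data observed")
        lo, hi = min(self.lo, 0.0), max(self.hi, 0.0)
        scale = (hi - lo) / (qmax - qmin) or 1.0
        zero_point = int(round(qmin - lo / scale))
        return scale, max(qmin, min(qmax, zero_point))


# Toy post-ReLU activations from three calibration samples.
observer = MinMaxObserver()
for sample in ([0.0, 0.5, 2.0], [0.1, 3.0, 1.2], [0.0, 5.1, 0.7]):
    observer.update(sample)

act_scale, act_zero_point = observer.scale_and_zero_point()
print(f"range=[{observer.lo}, {observer.hi}] scale={act_scale:.4f} zp={act_zero_point}")
```

A single outlier in the calibration set stretches the range and coarsens every quantization step, which is one reason the representativeness of the calibration data matters so much.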

"Quantization-Aware Training" (QAT) simulates quantization effects during training, allowing the model to learn to adapt to low-precision numerical representations. QAT can typically keep accuracy loss within 0.5%, but requires additional training time. For models deployed on extremely memory-constrained MCUs, we strongly recommend using QAT.

2.3 Knowledge Distillation: Standing on the Shoulders of Giants

Knowledge distillation, proposed by Hinton et al. in 2015[3], is an elegant model compression method -- using the output distribution of a large "teacher model" to guide the training of a small "student model." The core intuition is that the teacher model's output distribution contains richer information than simple correct labels -- for example, when a cat image is classified by the teacher model, the probability for "dog" may be much higher than for "car," and these relative relationships in the "soft labels" contain semantic similarity information between categories.

In our TinyML pipeline, knowledge distillation plays a "bridge" role -- transferring knowledge from large cloud models to tiny models deployable on MCUs. Specifically, we used ResNet-50 (25.6M parameters) as the teacher model and a variant of the MCUNet[5] architecture (0.74M parameters) as the student model. The distillation loss function combines hard-label cross-entropy and soft-label KL divergence, with the temperature parameter set to T=4.
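A per-sample version of such a combined loss might look like the sketch below. The temperature T=4 comes from the text; the weighting factor `alpha` and the T-squared scaling of the soft term (as suggested by Hinton et al.) are assumptions, since the exact weighting is not specified here.

```python
import math


def softmax(logits, temperature=1.0):
    """Numerically stable softmax with temperature scaling."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, label, T=4.0, alpha=0.5):
    """alpha * hard-label cross-entropy + (1 - alpha) * T^2 * KL(teacher || student)."""
    # Hard-label term: ordinary cross-entropy at temperature 1.
    hard = -math.log(softmax(student_logits)[label])
    # Soft-label term: KL divergence between the softened distributions.
    p_teacher = softmax(teacher_logits, T)
    p_student = softmax(student_logits, T)
    soft = sum(t * math.log(t / s) for t, s in zip(p_teacher, p_student) if t > 0)
    return alpha * hard + (1.0 - alpha) * T * T * soft


teacher = [2.0, 1.0, 0.1]
print("agreeing student :", distillation_loss([2.0, 1.0, 0.1], teacher, label=0))
print("disagreeing student:", distillation_loss([0.1, 1.0, 2.0], teacher, label=0))
```

Raising the temperature flattens the teacher distribution, so the relative probabilities of the non-target classes contribute more to the gradient.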

The results were encouraging: on a 50-class ImageNet subset, the directly trained MCUNet achieved 71.3% accuracy, while the distilled MCUNet improved to 76.8%, retaining 94.7% of the teacher model's accuracy (81.1%). More importantly, the distilled model showed significant improvement on ambiguous cases, with calibration error reduced by 42%.
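The retention figure follows directly from the accuracies above. A short arithmetic check, using only numbers quoted in this section (variable names are illustrative):

```python
# Accuracy figures quoted in the text (percent).
teacher_acc = 81.1
student_scratch_acc = 71.3
student_distilled_acc = 76.8

# Parameter counts quoted in the text (millions).
teacher_params_m = 25.6
student_params_m = 0.74

retention = student_distilled_acc / teacher_acc * 100
distillation_gain = student_distilled_acc - student_scratch_acc
param_ratio = teacher_params_m / student_params_m

print(f"Retained accuracy : {retention:.1f}% of the teacher")
print(f"Distillation gain : {distillation_gain:.1f} points over direct training")
print(f"Parameter ratio   : {param_ratio:.1f}x fewer parameters")
```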

3. Deployment Pipeline: From PyTorch to ARM Cortex-M

3.1 Model Conversion Pipeline

Deploying a PyTorch-trained model onto a Cortex-M MCU requires the following conversion steps: PyTorch -> ONNX -> TensorFlow -> TensorFlow Lite -> TensorFlow Lite Micro (TFLM). The TFLM framework published by David et al. at MLSys 2021[7] is the current de facto standard for deploying deep learning models on MCUs -- it provides an extremely lean inference engine that does not depend on dynamic memory allocation, does not use the standard C library, and supports CMSIS-NN acceleration for mainstream ARM Cortex-M series.

Each conversion step can potentially introduce precision errors, so we designed a layer-by-layer numerical verification mechanism in the pipeline -- after each conversion step, the same test inputs are used to compare outputs at each layer, ensuring relative errors are within 1e-5 (FP32) or within 1 quantization level (INT8).
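The comparison logic of such a check can be sketched as follows. This is a simplified illustration: layer outputs are flat Python lists keyed by hypothetical layer names, and the FP32 tolerance is applied relative to max(|reference|, 1.0) so that near-zero values do not trigger false alarms, which is a design assumption of this sketch.

```python
def fp32_close(reference, candidate, rel_tol=1e-5):
    """True if every element agrees within rel_tol (with an absolute floor of rel_tol)."""
    return all(abs(r - c) <= rel_tol * max(abs(r), 1.0)
               for r, c in zip(reference, candidate))


def int8_close(reference, candidate, max_levels=1):
    """True if every quantized element differs by at most max_levels integer steps."""
    return all(abs(r - c) <= max_levels for r, c in zip(reference, candidate))


def first_divergent_layer(reference_outputs, candidate_outputs, is_close):
    """Walk the layers in order and return the first name whose outputs disagree."""
    for name, ref in reference_outputs.items():
        cand = candidate_outputs[name]
        if len(ref) != len(cand) or not is_close(ref, cand):
            return name
    return None


# Toy outputs before and after one conversion step.
before = {"conv1": [1.0, -2.0], "fc": [0.5, 0.25]}
after = {"conv1": [1.000001, -2.0], "fc": [0.5, 0.26]}
print("First divergent layer:", first_divergent_layer(before, after, fp32_close))
```

Reporting the first divergent layer, rather than only the final output, narrows a conversion problem down to a single operator.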

3.2 Memory Planning

Memory management on MCUs is fundamentally different from desktop environments. Flash (non-volatile memory) stores model weights, while SRAM (volatile memory) stores activation values during inference. Lin et al. proposed the "TinyNAS + TinyEngine" co-design approach in their MCUNet paper[5] -- using neural architecture search to find the optimal model architecture within a given memory budget, while simultaneously designing a memory-aware inference engine that maximizes activation memory reuse.

In our practice, memory planning is the step that requires the most meticulous tuning. Taking the STM32F407 (1MB Flash, 192KB SRAM) as an example, model weights need to be kept under 512KB (reserving space for application code and other functions), and peak activation memory must be kept under 128KB. This means the model's "width" (number of channels per layer) is driven not only by accuracy requirements but also hard-constrained by SRAM size.

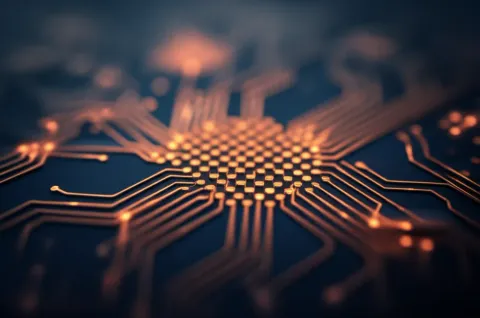
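A rough feasibility check against these two budgets can be automated. The sketch below assumes INT8 tensors (one byte per element) and a simple memory model in which each layer needs its input and output buffers alive at the same time; the layer shapes and names are hypothetical, and a real memory planner with buffer reuse would be more precise.

```python
from dataclasses import dataclass

FLASH_WEIGHT_BUDGET = 512 * 1024      # bytes reserved for weights (from the text)
SRAM_ACTIVATION_BUDGET = 128 * 1024   # bytes allowed for peak activations (from the text)


@dataclass
class Layer:
    name: str
    weight_bytes: int   # INT8 weights stored in Flash
    input_bytes: int    # INT8 input activation buffer in SRAM
    output_bytes: int   # INT8 output activation buffer in SRAM


def flash_usage(layers):
    """Total weight storage in bytes."""
    return sum(layer.weight_bytes for layer in layers)


def peak_activation(layers):
    """Largest input + output footprint of any single layer."""
    return max(layer.input_bytes + layer.output_bytes for layer in layers)


def fits(layers):
    """True if both the Flash and the SRAM budgets are respected."""
    return (flash_usage(layers) <= FLASH_WEIGHT_BUDGET
            and peak_activation(layers) <= SRAM_ACTIVATION_BUDGET)


# Two hypothetical stems for a 96x96 RGB input: 16 vs. 64 output channels.
narrow = [Layer("conv1", 3 * 3 * 3 * 16, 96 * 96 * 3, 48 * 48 * 16),
          Layer("conv2", 3 * 3 * 16 * 32, 48 * 48 * 16, 24 * 24 * 32)]
wide = [Layer("conv1", 3 * 3 * 3 * 64, 96 * 96 * 3, 48 * 48 * 64)]

for label, model in (("narrow", narrow), ("wide", wide)):
    print(f"{label}: peak SRAM {peak_activation(model)} B, fits={fits(model)}")
```

The wide variant needs almost no extra Flash but exceeds the SRAM budget in its first layer, which illustrates why channel width is bounded by SRAM rather than by weight storage.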

3.3 Leveraging Hardware Acceleration

The ARM Cortex-M7's FPU (Floating-Point Unit) and DSP (Digital Signal Processing) instruction set can significantly accelerate neural network inference. Our TFLM deployment uses the CMSIS-NN kernels, ARM's official optimized neural network library for Cortex-M, which use SIMD (Single Instruction, Multiple Data) instructions to perform multiple multiply-accumulate operations on INT8 data with a single instruction.

Benchmarks show that CMSIS-NN acceleration delivers a 3.2x inference speed improvement over pure C implementation on Cortex-M7, and 2.4x on Cortex-M4.
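To give a feel for where this speedup comes from, the sketch below emulates a dual multiply-accumulate in Python. It assumes the behaviour of the Cortex-M `SMLAD` instruction (two signed 16-bit products added to an accumulator) and that INT8 operands are widened to 16 bits before packing; it ignores 32-bit accumulator wrap-around and is an illustration, not the CMSIS-NN source.

```python
def pack_int16_pair(lo: int, hi: int) -> int:
    """Pack two signed 16-bit values into one 32-bit word (lo in bits 0-15)."""
    return ((hi & 0xFFFF) << 16) | (lo & 0xFFFF)


def _signed16(word: int) -> int:
    """Interpret the low 16 bits of word as a signed integer."""
    word &= 0xFFFF
    return word - 0x10000 if word & 0x8000 else word


def smlad(x: int, y: int, acc: int) -> int:
    """Emulated dual MAC: acc + x.lo * y.lo + x.hi * y.hi."""
    return acc + _signed16(x) * _signed16(y) + _signed16(x >> 16) * _signed16(y >> 16)


def dot_int8(a, b) -> int:
    """Dot product of two INT8 vectors, consuming two elements per emulated instruction."""
    acc = 0
    for i in range(0, len(a) - 1, 2):
        acc = smlad(pack_int16_pair(a[i], a[i + 1]),
                    pack_int16_pair(b[i], b[i + 1]), acc)
    if len(a) % 2:  # leftover element when the length is odd
        acc += a[-1] * b[-1]
    return acc


print(dot_int8([1, -2, 3, -4, 5], [6, 7, -8, 9, -10]))
```

Each emulated `smlad` call replaces two scalar multiply-accumulate steps, which is the basic mechanism behind the kernel-level gains.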

4. Performance Benchmark Results

4.1 Test Platforms and Tasks

We conducted systematic performance benchmarks across three hardware platforms: STM32F407 (Cortex-M4, 168MHz, 1MB Flash, 192KB SRAM), STM32F746 (Cortex-M7, 216MHz, 1MB Flash, 320KB SRAM), and STM32H743 (Cortex-M7, 480MHz, 2MB Flash, 1MB SRAM). Test tasks covered three representative scenarios: keyword spotting (10 classes), image classification (50-class ImageNet subset), and anomaly sound detection.

The MLPerf Tiny benchmark published by Banbury et al. at NeurIPS 2021[6] established a standardized performance evaluation framework for the TinyML field. Our testing follows the MLPerf Tiny methodology, ensuring result comparability.

4.2 Keyword Spotting

On the 10-class keyword spotting task using the Google Speech Commands dataset, our compression pipeline (structured pruning + INT8 quantization + knowledge distillation) produced a model with the following performance across the three platforms: 91.2% accuracy and 58ms inference latency on STM32F407; 92.8% accuracy and 23ms inference latency on STM32F746; 93.5% accuracy and 11ms inference latency on STM32H743. The model size is only 63KB, fitting comfortably on all target platforms.

4.3 Image Classification

The 50-class ImageNet subset image classification task is more challenging. At 96x96 RGB input resolution, the compressed MCUNet variant model size is 348KB. Inference latency is 412ms on STM32F407 (unsuitable for real-time applications), dropping to 87ms on STM32H743 (about 11 frames per second, acceptable for applications that need 10+ frames per second). In terms of accuracy, the INT8 quantized model achieved 75.1%, compared to the FP32 original model's 76.8%, a loss of only 1.7 percentage points.
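The real-time assessment above is simple arithmetic on the measured latencies. A small helper, with hypothetical function names, makes the conversion explicit:

```python
def frames_per_second(latency_ms: float) -> float:
    """Throughput achievable when frames are processed back to back."""
    return 1000.0 / latency_ms


def meets_frame_rate(latency_ms: float, target_fps: float) -> bool:
    """True if single-frame latency allows the target frame rate."""
    return frames_per_second(latency_ms) >= target_fps


# Image classification latencies quoted in the text (milliseconds).
latencies_ms = {"STM32F407": 412, "STM32H743": 87}

for board, latency in latencies_ms.items():
    fps = frames_per_second(latency)
    verdict = "OK" if meets_frame_rate(latency, 10) else "too slow"
    print(f"{board}: {fps:.1f} fps at {latency} ms/frame -> {verdict} for 10 fps")

# Accuracy cost of INT8 quantization, in percentage points.
accuracy_drop = 76.8 - 75.1
print(f"INT8 accuracy drop: {accuracy_drop:.1f} points")
```

This only accounts for inference; sensor capture and preprocessing add to the per-frame budget.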

4.4 Anomaly Sound Detection

Anomaly sound detection uses an autoencoder architecture with a model size of only 28KB. It achieved sub-10ms inference latency on all three platforms, with an AUC (Area Under the Curve) of 0.94. This task demonstrates the enormous potential of TinyML in industrial IoT predictive maintenance scenarios -- a battery-powered acoustic sensor can perform anomaly detection locally without uploading raw audio to the cloud.
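With an autoencoder, the anomaly score is typically the reconstruction error, and AUC measures how well that score separates anomalous from normal clips. The sketch below shows both computations in plain Python; the scores and labels are made-up toy values, not our benchmark data.

```python
def reconstruction_error(original, reconstructed):
    """Mean squared error between an input frame and its reconstruction."""
    return sum((o - r) ** 2 for o, r in zip(original, reconstructed)) / len(original)


def roc_auc(scores, labels):
    """Probability that a random anomaly scores higher than a random normal sample.

    Ties count as half a win. labels: 1 = anomalous, 0 = normal.
    """
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one sample of each class")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Toy anomaly scores: three normal clips followed by three anomalous clips.
toy_scores = [0.02, 0.03, 0.10, 0.40, 0.05, 0.90]
toy_labels = [0, 0, 0, 1, 1, 1]
toy_auc = roc_auc(toy_scores, toy_labels)
print(f"AUC on toy data: {toy_auc:.3f}")
```

Because AUC is threshold-free, the alarm threshold can be chosen later per installation without retraining the model.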

5. Best Practices and Future Directions

5.1 Engineering Best Practices

Based on our hands-on experience, we have compiled the following best practices for TinyML deployment:

- Quantize First, Then Prune: Counter to intuition, we found that performing quantization-aware training first, followed by structured pruning, yields better final accuracy than the reverse order (an average improvement of 0.8%).

- Prioritize Calibration Dataset Representativeness: Post-training quantization quality is highly dependent on the calibration dataset. Ensure the calibration set covers boundary cases from all classes, rather than using only simple samples.

- Build an End-to-End Automated Pipeline: Every step from model training to MCU deployment should be automated, including layer-by-layer numerical verification and hardware-in-the-loop testing.

- Leverage Mixed-Precision Quantization: Not all layers need the same quantization precision. The first and last layers are typically more sensitive to quantization and can retain higher precision (INT16), while intermediate layers use INT8.
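The size impact of the mixed-precision recommendation is easy to estimate ahead of time. The sketch below assigns 16 bits to the first and last layers and 8 bits elsewhere, then compares the result with a uniform INT8 model. The layer names and parameter counts are hypothetical, and the sketch assumes the wider precision applies to weights; whether a given runtime supports this per layer needs to be checked separately.

```python
def assign_bit_widths(layer_names, sensitive_bits=16, default_bits=8):
    """Give the first and last layers higher precision, everything else the default."""
    widths = {name: default_bits for name in layer_names}
    if layer_names:
        widths[layer_names[0]] = sensitive_bits
        widths[layer_names[-1]] = sensitive_bits
    return widths


def model_size_bytes(param_counts, widths):
    """Weight storage in bytes for the given per-layer bit widths."""
    return sum(count * widths[name] // 8 for name, count in param_counts.items())


# Hypothetical per-layer parameter counts, listed in execution order.
param_counts = {"stem": 432, "block1": 4608, "block2": 18432, "classifier": 6400}

mixed_widths = assign_bit_widths(list(param_counts))
uniform_widths = {name: 8 for name in param_counts}

mixed_size = model_size_bytes(param_counts, mixed_widths)
uniform_size = model_size_bytes(param_counts, uniform_widths)
print(f"INT8 only: {uniform_size} B, mixed precision: {mixed_size} B")
```

The overhead depends on how many parameters sit in the first and last layers, so a large classifier head makes mixed precision noticeably more expensive than a small stem does.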

5.2 Future Directions

The TinyML field is evolving rapidly. We believe the following three directions will significantly push the boundaries of TinyML capability within the next 2-3 years:

- More Advanced Neural Architecture Search: Hardware-aware NAS, pioneered by Lin et al.'s MCUNet[5], will be further optimized for specific MCU architectures.

- Binarized and Ternarized Networks: These emerging techniques push quantization down to 1-2 bits, with the potential to enable AI inference on ultra-low-power Cortex-M0+ devices.

- On-Device Training: Currently, TinyML training and inference are separated. As memory and computational capabilities improve, incremental fine-tuning on MCUs will become possible, achieving truly adaptive edge intelligence.

TinyML is not just a technical challenge, but a paradigm shift in thinking -- from "bigger models, more data, more compute" to "smarter architectures, more precise compression, more intelligent deployment." The value of this mindset extends far beyond TinyML's own application domain.